Introduction

In Stanley Kubrick’s cult movie 2001: A Space Odyssey (1968), the hero goes through customs on presentation of his voice. Science fiction and the daily experience of safely recognizing people by their voices lead us to suppose that speaker recognition is a solved problem, both for human beings and for machines.

Human beings are good at identifying familiar voices, and the results of automatic speaker recognition are fair in controlled conditions, but such conditions are rarely met in real forensic cases. The speaker recorded from the telephone line in a kidnapping or blackmail case is often stressed or afraid, and unfamiliar to the police. Drug dealers are regularly wiretapped, but because of their nomadic, illegal activity, and because they suspect that the police are listening, they use phone boxes or cellular telephones in noisy environments, such as cars, streets or bars.

Forensic speaker recognition is the most widely known application, but the main areas of forensic science that involve voice are: (1) speaker recognition, (2) speaker profiling, (3) intelligibility enhancement of audio recordings, (4) transcription and analysis of disputed utterances, and (5) authenticity or integrity examination of audio recordings. As a preliminary investigation, authenticity or integrity examination of audio recordings can precede each of the other tasks (1) to (4).

The Human Voice

Nature of voice and production of speech

Voice results from an expiratory energy used to generate noises and/or to move the vocal cords, which generate voiced sounds. This behavior is one of the basic methods of communicating by common codes; these codes are languages.

Speech production is composed of two basic mechanical functions: phonation and articulation. Phonation is the production of an acoustic signal. Articulation includes the modulation of the acoustic signal by the articulators, mainly the lips, the tongue and the soft palate, and its resonance in the supraglottic cavities, oral and/or nasal. The phonemes produced are divided into voiced and unvoiced consonants and vowels; they can be characterized in the time domain, in the spectral domain and in the time-spectral domain.

The frequency range of the normal speech signal is 80-8000 Hz, with a dynamic range of 60-70 dB. The average fundamental frequency of vibration of the vocal cords (F0), called pitch, is between 180 and 300 Hz for females, between 300 and 600 Hz for children and between 90 and 140 Hz for males.
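As an illustration of how F0 can be measured on a voiced sound, the following minimal sketch estimates it from the lag that maximizes the autocorrelation of the signal. The autocorrelation method, the function name `estimate_f0`, the search limits and the synthetic 120 Hz test signal are all assumptions chosen for the example; they are not taken from the text above.

```python
import math

def estimate_f0(samples, sample_rate, f_min=80.0, f_max=600.0):
    """Estimate F0 (Hz) as the lag that maximizes the autocorrelation."""
    lag_min = int(sample_rate / f_max)   # shortest period considered
    lag_max = int(sample_rate / f_min)   # longest period considered
    best_lag, best_score = lag_min, float("-inf")
    for lag in range(lag_min, lag_max + 1):
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# Synthetic voiced sound: 120 Hz fundamental plus two weaker harmonics.
SAMPLE_RATE = 8000
signal = [math.sin(2 * math.pi * 120 * n / SAMPLE_RATE)
          + 0.5 * math.sin(2 * math.pi * 240 * n / SAMPLE_RATE)
          + 0.25 * math.sin(2 * math.pi * 360 * n / SAMPLE_RATE)
          for n in range(800)]
f0 = estimate_f0(signal, SAMPLE_RATE)
```

The estimate is quantized to whole-sample lags, so at 8000 samples per second it can only approximate 120 Hz; it falls within the male range quoted above.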

Perception of voice and speech

Speech perception is generally described as a five-stage transformation of the speech signal into a message: peripheral auditory analysis, central auditory analysis, acoustic-phonetic analysis, phonological analysis and higher order analysis (lexical, syntactic and semantic). The human ear is primarily designed to perceive the human voice. The accepted range for perception is between 16 and 20 000 Hz, with extremely good sensitivity between 500 and 4000 Hz. The recognized limit in the intensity domain is between 130 and 140 dB.

Voice as Evidence

Collection of evidence

The evidence does not consist of speech itself, but of a transposition, obtained by a transducer, that converts acoustic energy into another form of energy: mechanical, electrical or magnetic. This transposition is recorded on a storage medium, on which it is coded by an analog or digital information-coding method.

In analog recording, the strength and shape of the transduced audio waveform bears a direct relationship to the original sound wave. By contrast, in a digital recording, the transduced waveform is sampled, and each sample is translated into a binary number code, such as that used by computers. Table 1 lists a variety of audio recording devices (but certainly not all), according to the type of storage medium and coding method used.
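The digital coding step can be sketched as follows: a waveform is sampled at regular intervals and each sample is rounded to a signed integer, which is then written as a binary word. The 16-bit word length, the 8000 Hz sampling rate, the 1000 Hz test tone and the function name `sample_and_quantize` are illustrative assumptions, not values given in the text.

```python
import math

def sample_and_quantize(freq_hz, sample_rate, n_samples, bits=16):
    """Sample a sine wave and code each sample as a signed integer."""
    max_code = 2 ** (bits - 1) - 1          # 32767 for 16 bits
    codes = []
    for n in range(n_samples):
        amplitude = math.sin(2 * math.pi * freq_hz * n / sample_rate)
        codes.append(round(amplitude * max_code))
    return codes

codes = sample_and_quantize(freq_hz=1000, sample_rate=8000, n_samples=8)
# Two's-complement binary words, as they would be stored on the medium.
binary_words = [format(c & 0xFFFF, "016b") for c in codes]
```

In an analog recorder, by contrast, no such rounding occurs: the stored quantity varies continuously with the transduced waveform.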

Quality of Evidence

Types of evidence

In most cases, evidence is recorded off the telephone lines, either in cases of wiretapping or anonymous calls. In anonymous calls, the message is generally short, from seconds to minutes; where it is a monolog, it may be a prerecorded message, voluntarily modified by a filtering or editing procedure. From a lexical point of view, the themes are targeted, i.e. abuse, extortion, obscenity, threats. In wiretapping procedures, the recordings can reach hundreds of hours. These are conversations, lexically diverse, and some utterances may refer to internal codes of groups or organizations.

Speaker variability and simulation

Disputed utterance

When making an anonymous call, the voice can be unintentionally altered by the speaker because of the peculiar psychological conditions of stress and/or fear created by the act of committing a crime. State of health, tobacco and psychotropic substances also affect the voice. The speaker may also deliberately modify the voice, speech and/or language.

In wiretapping procedures, speakers are generally unaware of the fact that they are being recorded. In such cases, there are usually no unintentional or deliberate alterations of voice, speech and/or language, as described above. However, the spontaneity that results from this unawareness leaves speakers free to adapt their discourse considerably to the context, to their mood and to the relationship they establish with their interlocutor.

Control recording

The control should be recorded in conditions similar to those of the disputed utterance and should be as representative as possible of the speaker.

Table 1 Audio recording devices

| Coding method | Magnetic tape | Tapeless magnetic | Non-magnetic |
| --- | --- | --- | --- |
| Analog | Micro cassette recorder; compact cassette recorder; reel-to-reel recorder; eight-track player; camcorder: analog audio component of video tape; answering machines | Wire recorder (obsolete) | Phonograph using: wax or tin foil cylinder or disc (obsolete), shellac disk (obsolete), vinyl record (increasingly obsolete); motion picture camera: film audio track (nearly obsolete with respect to home use) |
| Digital | Digital audio tape recorder (DAT); digital compact cassette recorder (DCC); stationary head recorders (e.g., DASH recorders); camcorder: digital audio component of videotape; computer using tape drive backup: helical scan unit (e.g. DAT drive) or stationary head unit | Sampler, computer or digital audio workstation using: floppy disk, hard disk, removable ‘hard’ cartridge, magneto-optical cartridge | Audio recorder, sampler, computer or answering machine using semiconductor memory (memory chips); optical laser unit using: CD (compact disc), CD-ROM (read only memory), CD-I (interactive), CD-R (recordable), CD-RW (rewritable), DVD (digital versatile disk), Mini disk |

Nevertheless, the control recording is often made months or years after the disputed utterance, and the human voice changes with time. Moreover, the suspect can be uncooperative and it could prove difficult to get the suspect to simulate the supposed psychological conditions of the disputed utterance. Such circumstances can prevent or restrict effective speaker recognition procedures.

Transmission channel distortion

On telephone lines, the speech bandwidth is reduced to the frequency range between 300 and 3400 Hz: some high frequency speaker-dependent features, as well as the fundamental frequency (F0) itself, are not transmitted. The listener nevertheless perceives the pitch, through the integer multiples of F0, called harmonics.
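A small numerical sketch makes the point concrete. For an assumed male voice with F0 = 120 Hz, the fundamental lies below the 300 Hz cut-off, yet the harmonics that pass the band remain spaced exactly F0 apart, so the pitch can still be inferred from their spacing. The function names and the 120 Hz value are hypothetical choices for the example.

```python
def harmonics_in_band(f0_hz, low_hz=300.0, high_hz=3400.0):
    """Return the harmonics of f0 that fall inside the telephone band."""
    harmonics = []
    k = 1
    while k * f0_hz <= high_hz:
        if k * f0_hz >= low_hz:
            harmonics.append(k * f0_hz)
        k += 1
    return harmonics

def pitch_from_harmonics(harmonics):
    """Recover f0 as the mean spacing between adjacent harmonics."""
    spacings = [b - a for a, b in zip(harmonics, harmonics[1:])]
    return sum(spacings) / len(spacings)

transmitted = harmonics_in_band(120.0)   # F0 itself is below 300 Hz
recovered_f0 = pitch_from_harmonics(transmitted)
```

Here the lowest transmitted component is the third harmonic (360 Hz), and the spacing of the transmitted harmonics gives back 120 Hz.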

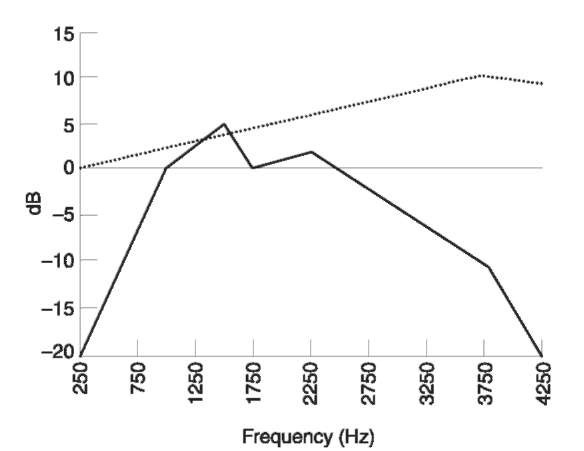

The dynamic range of the telephone line is only 30 to 40 dB; the signal suffers from nonlinear distortion, and additive noise is picked up and transmitted by the handset microphone. This implies a number of other potential degradations of the speech signal, depending on the characteristics of the microphone (Fig. 1) and the telephone network (Fig. 2). This network can be fixed or mobile, and the transmission and coding system of the information can be analog, digital (mainly in developed countries) or mixed. The quality of digital networks depends particularly on the coding algorithms and the transmission rate for information.

Recording system distortion

The recording equipment is the only link in the chain that it is possible to control, but it is unfortunately often the weakest. Either the device is not suited to the task or it is improperly maintained or used, which can prevent any useful analysis of the voice. Even if the transmission is digitized, the recordings for law enforcement are still made exclusively on analog recorders at low tape speed and with correspondingly low quality.

Figure 1 Comparison of frequency responses for two types of handset microphone: electret (••••); carbon (—).

Figure 2 Comparison of transmission quality for two types of mobile network: digital (••••); analog (—).

Admissibility

As the conditions of admissibility for audio recording evidence depend dramatically on the criminal law of each country, this topic is not treated here.

Speaker Recognition

Types of speaker recognition

Speaker recognition refers to any process that uses some features of the speech signal to determine if a particular person is the speaker of a given utterance. Three kinds of approach can be distinguished: (1) speaker recognition by listening (SRL), (2) speaker recognition by visual comparison of spectrograms (SRS) and (3) automatic speaker recognition (ASR).

SRL involves the study of how human listeners associate a particular voice with a particular individual or group, and indeed to what extent such a task can be performed. SRS comprises efforts to make decisions on the identity or nonidentity of voice based on visual examination of speech spectrograms. ASR relies on computer methods, based on information theory, pattern recognition and artificial intelligence.

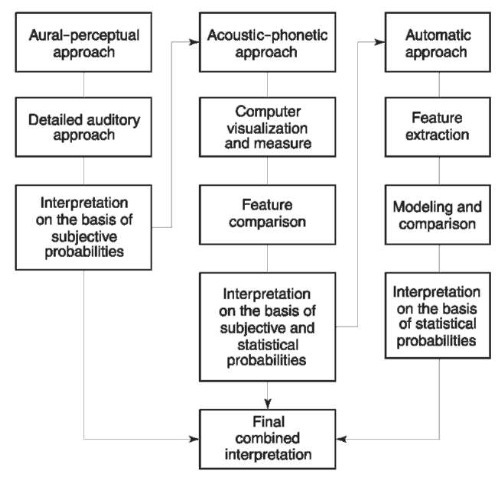

Each of the above approaches is currently used in forensic science: SRL, practiced either by experts, phoneticians or speech scientists, or by nonexperts, principally victims or witnesses; SRS, practiced by experts; and ASR methods, integrated into semiautomatic or computer-assisted systems. The current tendency is to integrate the results of SRL and ASR and to use spectrography only for visualization purposes (Fig. 3); only a few laboratories currently master all approaches.

Procedure and methods

Listening is the initial task. It can also be the only task if the disputed utterance and the control recording obviously differ; for example, if the pitch, the dialect or other grossly audible characteristics of the speaker are not the same. Therefore, a speaker recognition procedure is more likely to be engaged when close auditory similarities exist between the evidence and the control recording, or when voice disguise is suspected.

Figure 3 Combined approach for forensic speaker recognition.

When engaged, the recognition process consists of three stages: (1) feature extraction, (2) feature comparison, and (3) classification. Foreign language samples and degraded samples whose signal-to-noise ratio (SNR) is less than 25 dB should be examined only with great caution.
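The 25 dB criterion can be illustrated with a minimal sketch that computes the SNR as ten times the base-10 logarithm of the ratio of signal power to noise power. The example assumes, for simplicity, that the signal and the noise are available as separate sample sequences (in practice the noise power would have to be estimated, for instance from pauses); the function names and the synthetic tones are hypothetical.

```python
import math

def power(samples):
    """Mean squared amplitude of a sequence of samples."""
    return sum(x * x for x in samples) / len(samples)

def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels."""
    return 10 * math.log10(power(signal) / power(noise))

RATE = 8000
# Stand-in for speech: unit-amplitude tone; noise: tone at one tenth of it.
speech_like = [math.sin(2 * math.pi * 440 * n / RATE) for n in range(RATE)]
noise = [0.1 * math.sin(2 * math.pi * 1000 * n / RATE) for n in range(RATE)]

snr = snr_db(speech_like, noise)
needs_caution = snr < 25.0   # threshold quoted in the text
```

An amplitude ratio of 10 corresponds to a power ratio of 100, i.e. 20 dB, so this synthetic sample would fall below the threshold.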

Feature extraction

As no speaker-specific feature is presently known, feature extraction presupposes knowledge of the aspects of the acoustic signal yielding parameters that depend most obviously on the identity of the speaker. Moreover, repetitions of the same material by the same speaker vary from utterance to utterance. The existence of intraspeaker variability makes speaker recognition closely analogous to the identification of handwriting.

The ideal parameters would:

• exhibit a high degree of variation from one speaker to another (high interspeaker variability);

• show consistency throughout the utterances of a single speaker (selectivity);

• preferably be insensitive to emotional state or health and to communication context (low intra-speaker variability);

• withstand attempted disguise or mimicry (resistance);

• occur often in speech (availability);

• be neither lost nor reduced in the telephone transmission channel or the recording process (robustness);

• not be prohibitively difficult to extract (measurability).

Feature comparison

Through her or his utterances, the speaker may be represented in a feature space where the number of dimensions relates to the number of parameters extracted. A distance, appropriate to the parameters, is measured to estimate the closeness of evidence and control. This step is carried out implicitly when a subjective approach is used. The comparison of the disputed utterance and a control recording leads the scientist either to a numerical assessment, which describes the distance between them, or to a subjective opinion taking into account similarities and differences.
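A numerical assessment of this kind can be sketched as follows, assuming each recording has already been reduced to a list of feature vectors of equal dimension. The recording is summarized by its mean vector and the closeness is taken as the Euclidean distance between means. Both choices, as well as the function names and the two-dimensional toy values, are assumptions made for illustration; the text does not prescribe a particular distance.

```python
import math

def mean_vector(frames):
    """Average the feature vectors of one recording, dimension by dimension."""
    dims = len(frames[0])
    return [sum(f[d] for f in frames) / len(frames) for d in range(dims)]

def euclidean_distance(a, b):
    """Straight-line distance between two points of the feature space."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical two-parameter feature vectors for each recording.
control_frames = [[1.0, 2.0], [3.0, 4.0]]
evidence_frames = [[2.0, 3.0], [8.0, 11.0]]

distance = euclidean_distance(mean_vector(control_frames),
                              mean_vector(evidence_frames))
```

A smaller distance indicates that evidence and control lie closer together in the feature space; on its own it says nothing about how often such closeness occurs between different speakers.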

Classification

The numerical value given by ASR expresses a random match probability of the set of features compared, in so far as the range of samples is representative of the population modeled. A subjective opinion, such as given by SRS and an aural-perceptual approach, expresses a subjective probability of the frequency of the set of values. The results of these last two kinds of approach are controversial because a subjective opinion can differ significantly from statistical probabilities, as well as among experts. The acoustic-phonetic approach combines both aspects: numerical and subjective.

Speaker Recognition by Listening (SRL)

The recognition of familiar speakers and the recognition of unfamiliar speakers are probably two independent and unrelated abilities. Identifying a familiar speaker is essentially a pattern recognition process where a unique voice pattern matches an individual. For discrimination between unfamiliar speakers, the process of matching auditory parameters involves greater feature analysis as well as overall pattern recognition. Human beings perform better identification when the voice is familiar; however, in most cases the perpetrator is unfamiliar to the listener, whether or not expert.

Recognition by nonexperts

Experimental investigations conducted in presenting voice lineups to nonexpert listeners reveal that the accuracy of discrimination of unfamiliar voices depends on several factors: the size and the auditory homogeneity of the voices presented in the voice lineup; the age and sex of the speakers and the listeners; the quantity of the speech sample initially heard; the delay between the initial hearing of the disputed utterance and the recognition procedure; the existence of a voice disguise; the quality of both the transmission channel and the recording equipment; and the presence or absence of concordant visual testimony.

Even if all these factors are favorable, the great variability of individual performance restricts the use of this type of investigation to indicative value, the uncertainty of which is similar to that of any testimony.

Recognition by experts

This analysis combines aural-perceptual and phonetic-acoustic approaches. Most of the features considered are based upon the physiological mechanisms of the speech production or psychoacoustic knowledge.

Aural-perceptual approach

The aural-perceptual approach consists of a detailed auditory analysis. It applies to the parameters (1) of voice, such as height, timbre, fullness; (2) of speech, such as articulation, diction, speech rate, pauses, intonation, speech defects; and (3) of language, such as dynamics, prosody and style. It also includes linguistic observations on syntactic, idiomatic or even paralinguistic features, such as breathing patterns. The main results of this type of analysis are documented in a transcript using the international phonetic alphabet (IPA).

Phonetic-acoustic approach

Thanks to computer visualization techniques and specific signal-processing algorithms, the acoustic phonetic examination permits the quantification or the more precise description of several of the parameters studied in auditory analysis. In the spectral domain, the trajectory and the relative amplitude and bandwidth of the formants are studied (the formants, or formant frequencies, are zones of the vowels where the harmonic intensities are focused). The spectral distribution of the energy, pitch and pitch contour are analyzed as well. In the time domain, the study focuses on the duration of the segments, rhythm and cycle-to-cycle variation of the vibration period of the vocal cords, called jitter.
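Jitter can be quantified in several ways. The sketch below uses one common definition, assumed here for illustration: the mean absolute difference between consecutive vibration periods, divided by the mean period. The function name `local_jitter` and the period values are hypothetical.

```python
def local_jitter(periods):
    """Mean absolute cycle-to-cycle period difference, relative to mean period."""
    diffs = [abs(b - a) for a, b in zip(periods, periods[1:])]
    mean_diff = sum(diffs) / len(diffs)
    mean_period = sum(periods) / len(periods)
    return mean_diff / mean_period

# Hypothetical successive vocal cord periods, in milliseconds (about 124 Hz).
periods_ms = [8.0, 8.2, 7.9, 8.1, 8.0]
jitter = local_jitter(periods_ms)
jitter_percent = 100 * jitter
```

For these values the consecutive differences average 0.2 ms against a mean period of 8.04 ms, giving a jitter of roughly 2.5%.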

Speaker Recognition by Visual Comparison of Spectrograms (SRS)

Technology

The sound spectrograph is an instrument that shows the variation of the short-term spectrum of the speech wave. In each spectrogram, the horizontal dimension is time, the vertical dimension represents frequency, and the darkness represents intensity on a compressed scale. This speaker recognition technique, widely used in the USA, is based on the assumption that intraspeaker variability is less than or differs from interspeaker variability, and that ‘voiceprint’ examiners can reliably detect this difference by visual comparison of spectrograms.
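A digital counterpart of the spectrograph can be sketched with a short-time discrete Fourier transform: the signal is cut into overlapping frames (time axis), each frame is transformed into frequency bins (frequency axis), and the magnitudes are expressed in decibels as one possible compressed intensity scale. The frame length, hop size, Hann window and decibel compression are assumptions for the example and do not describe any particular instrument.

```python
import cmath
import math

def spectrogram(samples, frame_len=64, hop=32):
    """Return one column of log magnitudes (dB) per analysis frame."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
              for n in range(frame_len)]            # Hann window
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_len)]
        column = []
        for k in range(frame_len // 2 + 1):         # bins 0 .. Nyquist
            coeff = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                        for n in range(frame_len))
            column.append(20 * math.log10(abs(coeff) + 1e-12))
        frames.append(column)
    return frames

SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 1000 * n / SAMPLE_RATE) for n in range(512)]
spec = spectrogram(tone)
# Frequency bin with the highest intensity in the first frame.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * SAMPLE_RATE / 64
```

For the steady 1000 Hz test tone every column peaks in the same bin; for speech, the peaks move over time and trace the patterns that examiners compare visually.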

The Kersta method

In 1962, Kersta first proposed the SRS method under the name of ‘voiceprint’ identification. He claimed that the speech spectrogram of a given individual is as permanent and unique as fingerprints and would allow the same level of certainty for forensic identification.

In 1970, Bolt and collaborators denied these assertions, observing that, contrary to fingerprints, the pattern similarity of speech spectrograms depends primarily on acquired movement patterns used to produce language codes, and only partially and indirectly on the anatomical structure of the vocal tract. The details of the pattern are just as variable as the overall pattern and are affected by growth and habits. Therefore, the differences between fingerprints and speech spectrograms are more significant than similarities, and the inherent complexity of spoken language brings SRS closer to aural discrimination of unfamiliar voices than to fingerprint discrimination. The authors concluded that the term ‘voiceprint’ was a fallacious analogy to fingerprints and that considerable research was necessary to establish the validity and the reliability of the SRS.

The Tosi study: an attempted validation of the Kersta method

In 1972, Tosi and collaborators produced the only large-scale study, to date, for the determination of accuracy for subjects performing speaker identification tasks based on sound spectrograms. They concluded that ‘if trained “voiceprint” examiners used listening as well as spectrograms for speaker identification, even under true forensic conditions, they would achieve lower error rates than the experimental subjects had realized under laboratory conditions’.

This extrapolation, as well as the claim that the scientific community had accepted the method, was invalidated by Bolt and collaborators in 1973. They stated that the study of Tosi had improved the understanding of some of the problems of SRS by indicating the influence of several important variables for the accuracy of identification. However, they further concluded that in many practical situations the method lacked an adequate scientific basis for estimating reliability, and that laboratory evaluations showed an increase in error as the conditions for evaluation moved towards real forensic situations.

The National Academy of Sciences (NAS) report

As a result of the wide controversy on the reliability of the SRS and the admissibility of the resulting testimony in the USA, the NAS authorized the conduct of a study, in 1976, at the request of the Federal Bureau of Investigation (FBI). The committee concluded that the technical uncertainties concerning the SRS were significant and that forensic applications should be allowed with the utmost caution. It took no position for or against forensic use, but recommended that, when the method is used in testimony, its limitations should be clearly and thoroughly explained to the fact finder, whether judge or jury.

Since the publication of the NAS report, mixed legal activity has been seen in the USA, some cases favoring admissibility and others disallowing SRS. Since the Daubert decision calling for a ‘reliability standard’, opponents of SRS might call for disallowance in jurisdictions where it had previously been admitted. Significantly, Daubert is binding only on federal jurisdictions, and the long-term impact of the cases on the USA evidentiary regimes is unknown.

Automatic Speaker Recognition (ASR)

Automatic speaker recognition methods can be divided into text-dependent and text-independent methods. Text-independent methods are predominant in forensic applications where predetermined key words cannot be used.

Feature extraction

During the 1970s, research was devoted to discovering, in the speech signal, the determining factors taking place in human speaker recognition, and to selecting statistically the most relevant parameters. Several semiautomatic systems based on the extraction of explicit acoustic phonetic events and basic statistical measures were developed for law enforcement. SASIS (semiautomatic speaker identification system), developed by Rockwell International in the USA, and AUROS (automatic recognition of speaker by computer), developed by Philips GmbH and the BundesKriminalAmt (BKA) in Germany, have been abandoned because of their lack of results in the forensic context or the difficulty in applying them (only usable by phoneticians). SAUSI (semiautomatic speaker identification system) is under development at the University of Florida, but its applicability to ordinary forensic cases remains to be established.

As early as 1980, the methods based on explicit localization of acoustic events in the speech signal came into use and a consensus has since emerged on the use of derived parameters of the short-term spectrum of the speech signal, such as linear prediction coefficients (LPC) and cepstral coefficients (CC). CAVIS (computer assisted voice identification system) was developed at the Los Angeles County Sheriff’s Department from 1985. It is based on the extraction of temporal and spectral features and on a statistical weighting and comparison procedure. CAVIS was discontinued in 1989 because the laboratory results, although encouraging, did not match those obtained under forensic conditions.

Feature comparison

At present, research focuses on methods able to exploit efficiently whole sets of parameters for the comparison of the control recording and the disputed utterance. Thus, the advances are mainly due to improvement in techniques for making speaker-dependent feature measures and models and do not derive from a better understanding of speaker characteristics or their means of extraction.

In forensic applications, the control recording is used as training data to model the speaker, and the disputed utterance is used as the test for the comparison. Different approaches have been used to model the LPC and CC parameters, principally vector quantization (VQ), ergodic hidden Markov models (HMMs), artificial neural networks (ANNs) and autoregressive vector models (ARVs). The most recent evaluation of the methods carried out by the National Institute of Standards and Technology (NIST) in the USA shows that the performance of Gaussian mixture models (GMMs) surpasses the results of all other methods for text-independent applications.
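The scoring side of a GMM comparison can be sketched as follows: given a mixture already trained on the control recording, the disputed utterance is scored by its average per-frame log-likelihood under that mixture. Training (typically by expectation-maximization) is not shown; the two-component model, its parameters, the diagonal covariances and the function names are hypothetical values chosen for the example.

```python
import math

def log_gaussian_diag(x, mean, var):
    """Log density of a Gaussian with diagonal covariance at point x."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mean, var))

def gmm_score(frames, weights, means, variances):
    """Average per-frame log-likelihood of the frames under the mixture."""
    total = 0.0
    for x in frames:
        logs = [math.log(w) + log_gaussian_diag(x, m, v)
                for w, m, v in zip(weights, means, variances)]
        peak = max(logs)                      # log-sum-exp for stability
        total += peak + math.log(sum(math.exp(l - peak) for l in logs))
    return total / len(frames)

# Hypothetical two-component model standing in for the trained speaker model.
weights = [0.6, 0.4]
means = [[1.0, 2.0], [3.0, 0.5]]
variances = [[0.5, 0.5], [0.4, 0.6]]

# Hypothetical feature vectors from two different disputed utterances.
disputed_close = [[1.1, 1.9], [2.8, 0.6], [0.9, 2.2]]
disputed_far = [[6.0, -3.0], [5.5, -2.5], [6.2, -3.1]]
score_close = gmm_score(disputed_close, weights, means, variances)
score_far = gmm_score(disputed_far, weights, means, variances)
```

Frames lying near the modeled speaker's components receive a higher score than frames lying far from them, which is the quantity the comparison stage then has to interpret.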

Normalization techniques

The most significant factor affecting ASR performance is the variation in the signal characteristics from sample to sample: those due to the speaker, those due to transmission and recording conditions, and those due to background noise. Training conditions, test segment duration, sex and handset variation between training and test data affect performance particularly, as do all the specific forensic degradations cited above. Spectral equalization has been confirmed to be effective in reducing linear channel effects and long-term spectral variation, but normalization can be improved using more recent techniques, such as spectral subtraction combined with statistical missing-feature compensation. However, the current lack of consistency in the algorithms and the low quality of the audio recording evidence restrict the use of ASR for forensic purposes.
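One simple form of equalization, used here as an assumed stand-in for the techniques named above, is mean subtraction in the log-spectral or cepstral domain. A fixed linear channel multiplies the spectrum, which adds a constant offset to every log-spectral or cepstral vector; subtracting the per-coefficient mean over the recording removes that offset. The function name and the toy values are hypothetical.

```python
def subtract_mean(frames):
    """Remove the per-coefficient mean computed over the whole recording."""
    n = len(frames)
    dims = len(frames[0])
    mean = [sum(f[d] for f in frames) / n for d in range(dims)]
    return [[f[d] - mean[d] for d in range(dims)] for f in frames]

# Hypothetical cepstral-like vectors from a clean recording.
clean = [[1.0, -0.5], [2.0, 0.5], [3.0, 0.0]]
# The same speech through a fixed linear channel: a constant offset per coefficient.
channel_offset = [0.7, -0.3]
distorted = [[c + o for c, o in zip(f, channel_offset)] for f in clean]

normalized_clean = subtract_mean(clean)
normalized_distorted = subtract_mean(distorted)
```

After normalization the two versions coincide, which is why the technique helps when control and evidence were recorded through different handsets. It does not address additive noise or nonlinear distortion.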

Interpretation of the Results

Forensic identification

The identification process seeks individualization in forensic science. This individualization process can be seen as a reduction process, beginning with the entire suspect population and ending with a single person. In forensic speaker recognition the suspect population can be defined as the population speaking the language of the evidence. The reduction factor depends upon the closeness of the disputed utterance to the control recording, measured by several methods as discussed above. To identify a speaker means that the chance of observing, in the suspect population, an utterance from another speaker presenting the same closeness to the evidence is zero.

Evidentiary value of data

The results of the several analyses are generally insufficient to reduce the suspect population to one person only. Therefore, the classical discrimination or classification tasks, either speaker verification or identification, are inadequate to interpret the evidence because they lead to a binary decision of identification or nonidentification. A probabilistic model (the Bayesian model), by contrast, allows a measure of uncertainty about the truth or falsehood of an issue to be revised on the basis of new information. It allows the scientist to evaluate the evidentiary value of data, for instance the comparison of control recording and audio recording evidence, without making a binary decision on the identity of the speaker.
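In odds form, Bayes' theorem states that the posterior odds equal the prior odds multiplied by the likelihood ratio, i.e. the probability of the observed closeness if the suspect is the speaker divided by its probability if someone else in the suspect population is. The sketch below shows the arithmetic; all numerical values and function names are hypothetical and serve only to illustrate the revision step.

```python
def likelihood_ratio(p_evidence_if_same, p_evidence_if_different):
    """Strength of the evidence: how much better one hypothesis explains it."""
    return p_evidence_if_same / p_evidence_if_different

def posterior_odds(prior_odds, lr):
    """Bayes' theorem in odds form."""
    return prior_odds * lr

def odds_to_probability(odds):
    """Convert odds in favor of a hypothesis to a probability."""
    return odds / (1.0 + odds)

# Hypothetical values: the scientist reports the likelihood ratio only;
# the prior odds come from the other information in the case.
lr = likelihood_ratio(0.02, 0.0002)          # evidence 100 times more probable
odds = posterior_odds(1.0 / 1000.0, lr)      # prior: 1 to 1000
probability = odds_to_probability(odds)
```

With these figures, evidence that is 100 times more probable under the same-speaker hypothesis raises odds of 1 to 1000 to odds of 1 to 10, far from an identification, yet the evidentiary value has been expressed without a binary decision.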

Speaker Profiling

Speaker profiling is a classification task, performed mostly by phoneticians, where a recording of the voice of a perpetrator is the only lead in a case. The classification specifies the sex of the individual, the age group, dialect and regional accent, peculiarities or defects in the pronunciation of certain speech sounds, sociolect and mannerisms.

Intelligibility Enhancement of Audio Recordings

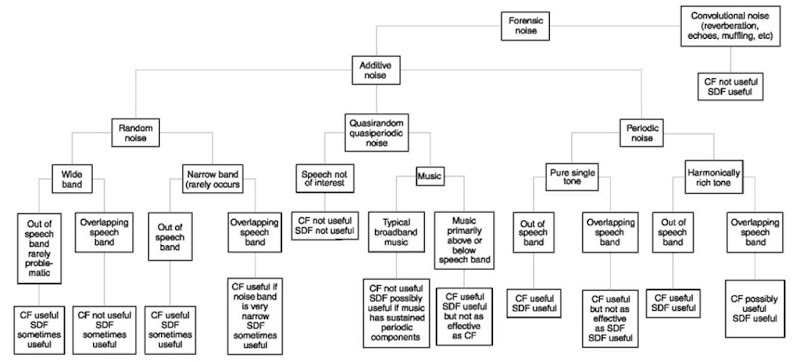

A recording can be degraded by a variety of different types of noise, each of which may suggest a different enhancement procedure. Enhancement techniques fall into two main categories. The first comprises canonical filters, such as various types of band-pass filter and simple comb filters. The second, more effective class relies on ‘signal-dependent filters’. These filters are microprocessor-based and use digital signal processing techniques, such as adaptive filtering and spectral subtraction (Fig. 4).
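The principle of spectral subtraction can be sketched on a single frame: an estimate of the noise magnitude spectrum (obtained, for example, from a stretch of the recording without speech) is subtracted bin by bin from the magnitude spectrum of the noisy frame. The spectral floor, which prevents negative magnitudes, its value of 0.05 and the four-bin toy spectra are assumptions made for this illustration.

```python
def spectral_subtraction(noisy_mag, noise_mag, floor=0.05):
    """Subtract the noise estimate per bin, keeping a small spectral floor."""
    return [max(y - n, floor * y) for y, n in zip(noisy_mag, noise_mag)]

# Hypothetical magnitude spectra for one frame (four frequency bins).
noisy_frame = [1.0, 0.8, 0.3, 0.2]
noise_estimate = [0.2, 0.2, 0.25, 0.3]
enhanced = spectral_subtraction(noisy_frame, noise_estimate)
```

The filter is signal-dependent because the quantity subtracted is estimated from the recording itself rather than fixed in advance, unlike a canonical band-pass filter.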

A careful distinction must be made between sound quality, i.e. attractiveness and ease of listening, and intelligibility, i.e. the degree of understanding of spoken language. It is never advisable to decrease intelligibility in order to gain in sound quality. In some situations, background noise or obtrusive noise can be successfully attenuated. However, in many cases, especially where improper recording techniques result in low recorded speech levels or where broad-spectrum random noises are the primary masking sounds, enhancement procedures can be frustratingly ineffective.

Figure 4 Types of forensic noise and usefulness of enhancement techniques in increasing intelligibility. CF, canonical filtering; SDF, signal-dependent filtering. Copyright © 1993 by Lawyers Cooperative Publishing Company, a division of Thomson Legal Publishing Inc.

Forensic noise is any undesired background sound that interferes with the audio signal of interest, generally speech. It can usually be classified as either additive or convolutional. Additive noise can be attributed to specific noise sources, such as traffic, background music, microphone noise, channel noise, and ambient random noise in cases where the recording level was too low. Convolutional noise, such as reverberations, acoustic resonance and muffling, is the result of the effect of the acoustic environment on the speech sample and on additive noise. Due to the time dispersive and spectral modification effects of these kinds of noise, they can have a more deleterious effect on intelligibility than additive noises.
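The two classes correspond to two different signal models, sketched below under simplifying assumptions: additive noise is summed sample by sample with the speech, whereas convolutional noise is the speech convolved with the impulse response of the acoustic environment. The toy sample values, the single-echo impulse response and the function name `convolve` are hypothetical.

```python
def convolve(x, h):
    """Direct-form linear convolution of signal x with impulse response h."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y

speech = [1.0, 0.0, 0.0, 0.5, 0.0, 0.0]

# Additive model: recorded = speech + noise, sample by sample.
noise = [0.1, -0.1, 0.1, -0.1, 0.1, -0.1]
additive = [s + n for s, n in zip(speech, noise)]

# Convolutional model: direct path plus one echo, two samples later.
room = [1.0, 0.0, 0.6]
convolutional = convolve(speech, room)
```

In the convolutional case each speech event is smeared forward in time (the echo of the first sample lands just before the second event), which illustrates the time-dispersive effect mentioned above.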

Transcription and Analysis of Disputed Utterances

The transcription of disputed utterances consists of converting spoken language into written language. If the intelligibility of the speech is optimal, the task can be achieved by a lay person of the same mother tongue as the unknown. If the intelligibility is altered by the speaker or transmission channel distortion, decoding necessitates knowledge of phonetics, linguistics and intelligibility enhancement techniques.

After enhancement or filtering, the sample is first listened to in its entirety to locate particularly difficult passages, learn proper names, and note idiosyncratic characteristics. Proper names are often difficult to decode because there is little or no external information inherently associated with a proper name. If the sample is a conversation, the structure of the dialogue is analyzed in assigning each utterance to a speaker. In sections where the speech is audible but not intelligible, or where there are multiple speakers, the approximate number of inaudible or missing words is counted.

Secondly, the difficult passages are subjected to phonetic and linguistic analysis. The study of linguistic stress and nonlinguistic gestures, especially as highlighted with spectrography, enhances the meaning of the message and knowledge of the word boundaries. Furthermore, they afford suprasegmental information on the sentences and word structure. The analysis of the phoneme placement and manner, as well as the coarticulation, i.e. the fact that each phoneme uttered affects all others near it, provides segmental information on the phonemic composition of words. Once all the ambiguities that can be resolved have been resolved, the remaining uncertainties have to be clearly mentioned in the final transcript, as the potency of written words is greater than that of spoken words.

Authenticity and Integrity Examination of Audio Recordings

An audio recording used as evidence improves the overall means by which information is communicated to the trier of fact, to the degree that it presents a fair and accurate aural record. However, the ongoing growth of digital technology and the possibility of turning to an audio recording professional make it possible to produce falsifications that are difficult or impossible to detect, and even to fabricate recordings. The debate about the ability to falsify and to detect falsifications should be reopened, because it was settled before digital audio recording and editing became commonplace.

Some types of falsification are inherently more difficult to detect than others. The type of recording medium used, the overall noise level and the type of editing attempted all affect the chances of successfully detecting certain signs or magnetic and electronic signatures. If the recording is originally made in a digital, tapeless format, many of these detection techniques may no longer apply. Thus an audio recording made in a digital, tapeless format will be inherently much more flexible, and thus much more susceptible to alteration, than a similar analog magnetic tape recording.

Certain editing functions are easier to perform than others: the deletion of a syllable is generally much easier to perform than the insertion of that same syllable or a word would be, in part because of coarticulation. Similarly, as the complexity and magnitude of editing increases, the likelihood of its being detected also increases. However, if only a few short, but crucial, deletions or insertions have been made, these would be more difficult to detect and define.