INTRODUCTION

Traditionally, information technology (IT) evaluation, pre-implementation appraisals, and post-implementation reviews have been characterised as economical, tangible, and hard in nature. The literature on IT evaluation shows a strong bias towards economical and tangible measures that represent management’s view of what is ‘good’ and ‘bad’, a view that has been described as narrow in scope and limited in use. Smithson and Hirschheim (1998) explain that “there has been an increasing concern that narrow cost benefit studies are too limited and there is a need to develop a wider view of the impact of a new system.” Ezingeard (1998) emphasises the importance of looking at the impact of IS on both the overall system and the whole organisation.

The concern of IT evaluation has been to measure whether, and to what extent, the IT solution meets its technical objectives. In such an activity, instrumentation is highly valued and deliberately chosen. This perception of evaluation is product oriented, instrument led, and unitary. Evaluation was seen mainly as a by-product of the decision-making process of information systems development and installation. Most of the evaluation tools and techniques used were economic based, geared to identifying possible alternatives, weighing the benefits against the costs, and then choosing the most appropriate alternative. St. Leger, Schnieden, and Walsworth-Bell (1992) explain that evaluation is “the critical assessment, on as objective a basis as possible, of the degree to which entire services or their component parts fulfil stated goals.” Rossi and Freeman (1982) advocate that “evaluation research is the systematic application of the practice of social research procedures in assessing the conceptualisation and design, implementation, and utility of social intervention programs.”

Post-implementation evaluation has been described by Ahituv, Even-Tsur, and Sadan (1986) as “probably the most neglected activity along the system life cycle.” Avison and Horton (1993) report that “evaluation during the development of an information system, as an integral part of the information systems development process, is even more infrequently practised.” In acknowledging all of the above concerns about the evaluation of IT interventions, the author presents in this article a measures identification method that aims at identifying those measures or indicators of performance that are relevant to all the stakeholders involved in such interventions.

POST IMPLEMENTATION REVIEW

Many researchers concerned with IT evaluation, mainly post-implementation reviews, have identified an urgent need to migrate from this ‘traditional’ and economical view towards a mixed approach to IT evaluation. Such an approach allows IT evaluators to combine ‘hard’ and ‘soft’ measures, as well as economical and non-economical measures (Chan, 1998; Ezingeard, 1998; Bannister, 1998; Smithson & Hirschheim, 1998; Avison & Horton, 1993). Furthermore, there is a need to shift towards an approach that reflects the concerns of all involved stakeholders rather than a unitary approach (Smithson & Hirschheim, 1998). Any systemic approach to IS evaluation must take into account two main issues regarding the collective nature of IS: choosing the relevant measures of performance, and giving equal account to economical as well as non-economical measures.

Choosing the Relevant Measures of Performance

Abu-Samaha and Wood (1999b) show that: “the main methodological problem in evaluating any project is to choose the right indicators for the measurement of success, or lack of it. These indicators will obviously be linked to aims but will also be relevant to the objectives chosen to achieve these aims, since if the wrong objectives have been chosen for the achievement of an aim, then failure can be as much due to inappropriate objectives as to the wrong implementation of the right objectives.”

Willcocks (1992), in a study of 50 organisations, gives 10 basic reasons for failure in evaluation practice; amongst these reasons are inappropriate measures and neglecting intangible benefits. Ezingeard (1998) shows that “…it is difficult to decide what performance measures should be used.” In addition, a different set of indicators or measures of performance will be chosen at each level or layer of the IT intervention (product, project, and programme), which adds further to the relevance of the chosen measures.

Another important aspect of choosing indicators or measures of performance is to choose the relevant measures that add value to a particular person or group of persons. Smithson and Hirschheim (1998) explain:

“There are different stakeholders likely to have different views about what should be the outcome of IS, and how well these outcomes are met. Who the different stakeholders are similarly need[s] to be identified.”

The measures identification method proposed by the author in this article provides such relevance through the identification of stakeholders and the subsequent ‘human activity system’ analysis. This is done by exploring the particular worldview, which is unique for each stakeholder, and the identification of the relevant criteria for efficacy, efficiency, and effectiveness of each stated transformation process. Such investigation would allow for the identification of the most relevant measures or indicators of performance for the stated stakeholder(s).

Equal Account for Economical as well as Non-Economical Measures

Post-implementation reviews have had a tendency to concentrate on ‘hard’, ‘economical’, and ‘tangible’ measures. Chan (1998) explains the importance of bridging the gap between ‘hard’ and ‘soft’ measures in IT evaluation, realising that “this in turn requires the examination of a variety of qualitative and quantitative measures, and the use of individual, group, process, and organisation-level measures.” Avison and Horton (1993) warn against confining post-implementation reviews to monitoring cost and performance and feasibility studies on cost-justification, saying that “concentration on the economic and technical aspects of a system may cause organisational and social factors to be overlooked, yet these can have a significant impact on the effectiveness of the system.” Fitzgerald (1993) suggests that a new approach to IS evaluation, which addresses both efficiency and effectiveness criteria, is required.

The approach described in this article gives equal account to the tangible as well as the intangible benefits of IT intervention by identifying efficacy and effectiveness measures along with efficiency measures. The measures identification method proposed by the author provides a better understanding of the context of evaluation, which in turn gives a better account of the content of evaluation.

SOFT EVALUATION

The approach advocated here brings together formal work in evaluation (Patton, 1986; Rossi & Freeman, 1982) with a qualitative process of investigation based on Soft Systems Methodology (Checkland & Scholes, 1990) in order to allow us to make judgements about the outcomes of an implementation from a number of different viewpoints or perspectives. The performance measures identification method proposed in this article operates through three stages.

Stage One: Stakeholder Analysis

The first stage of the proposed method is to identify the intra- and inter-organisational stakeholders involved in the intervention. A stakeholder, as defined by Mitroff and Linstone (1993), is any “individual, group, organisation, or institution that can affect as well as be affected by an individual’s, group’s, organisation’s, or institution’s policy or policies.” Mitroff and Linstone (1993) explain that an “organisation is not a physical ‘thing’ per se, but a series of social and institutional relationships between a wide series of parties. As these relationships change over time, the organisation itself changes.” Mitroff and Linstone’s view of an organisation is consistent with that of Checkland and Howell (1997), which rejects the notion of the organisation as a ‘hard goal seeking machine’.

Stakeholder analysis can be seen as a useful tool to shed some light on the subjective process of identifying relevant measures of performance for evaluation. A number of questions can be asked at this stage, such as where to start, who to include, and who to leave out. The investigation will be of greater value if all relevant stakeholders are identified and included in the evaluation effort. Some stakeholders will be of greater importance than others because of the power base that they operate from, and such stakeholders are to be acknowledged. At the same time, however, other relevant stakeholders should not be overlooked for lack of such power.

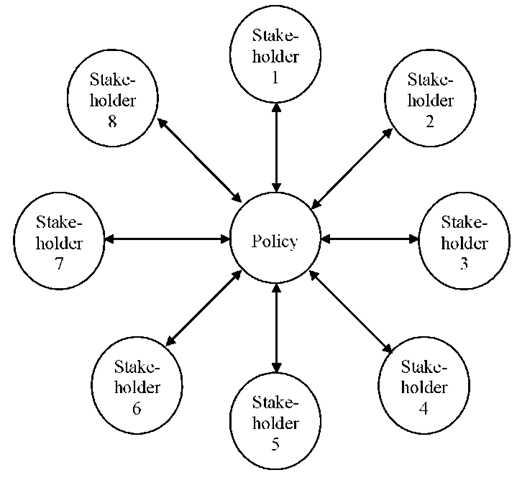

While Mitroff and Linstone (1993) do not describe the process through which stakeholders may be identified, they recommend the use of a stakeholder map, as shown in Figure 1. They explain that “a double line of influence extends from each stakeholder to the organisation’s policy or policies and back again – an organisation is the entire set of relationships it has with itself and its stakeholders.” Vidgen (1994), in turn, recommends the use of a rich picture, a pictorial representation of the current situation, to support this activity.

Figure 1. Generic stakeholder map (Adapted from Mitroff & Linstone, 1993, p. 141)
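The stakeholder map can also be held as a small data structure. The following is a minimal sketch only, not part of the method as published: the class names, the `internal` flag, the 1 to 5 `power` rating, and the example stakeholders are all hypothetical illustrations. It records the "double line of influence" as a pair of directed links between each stakeholder and the policy.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Stakeholder:
    name: str
    internal: bool  # True = intra-organisational, False = inter-organisational
    power: int      # hypothetical rating, 1 (low) to 5 (high); not from the source


@dataclass
class StakeholderMap:
    policy: str
    stakeholders: list[Stakeholder] = field(default_factory=list)

    def add(self, stakeholder: Stakeholder) -> None:
        self.stakeholders.append(stakeholder)

    def influence_lines(self) -> list[tuple[str, str]]:
        # Double line of influence: each stakeholder affects the policy
        # and is in turn affected by it.
        lines = []
        for s in self.stakeholders:
            lines.append((s.name, self.policy))
            lines.append((self.policy, s.name))
        return lines

    def by_power(self) -> list[Stakeholder]:
        # Orders stakeholders by power without dropping the less powerful ones.
        return sorted(self.stakeholders, key=lambda s: s.power, reverse=True)


# Illustrative (invented) stakeholders for a generic IT intervention.
stakeholder_map = StakeholderMap(policy="IT intervention")
stakeholder_map.add(Stakeholder("end users", internal=True, power=2))
stakeholder_map.add(Stakeholder("senior management", internal=True, power=5))
stakeholder_map.add(Stakeholder("software supplier", internal=False, power=3))

for s in stakeholder_map.by_power():
    print(s.name, s.power)
```

Sorting by power rather than filtering keeps every stakeholder in the evaluation while still acknowledging those with a stronger power base.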

Stage Two: Human Activity Systems Analysis

The second stage of the proposed method constitutes the systemic organisational analysis of the problem situation or subject of evaluation. SSM is based upon systems theory, and provides an antidote to more conventional, ‘reductionist’ scientific enquiry. Systems approaches attempt to study the wider picture: the relation of component parts to each other, and to the whole of which they are a part (‘holism’) (Avison & Wood-Harper, 1990; Checkland, 1981; Checkland & Scholes, 1990; Lewis, 1994; Wilson, 1990). SSM uses systems not as representations of the real world, but as epistemological devices to provoke thinking about the real world. Hence SSM is not an information systems design methodology. It is rather a general problem-solving tool that provides us with the ability to cope with multiple, possibly conflicting, viewpoints.

Within this stage the aim is to determine a root definition for each stakeholder, which includes their worldview and a transformation process, together with the definition of the criteria for the efficacy, efficiency, and effectiveness of each stated transformation process (Wood-Harper, Corder, Wood & Watson, 1996). A root definition is a short textual definition of the aims and means of the relevant system to be modelled. The core purpose is expressed as a transformation process in which some entity ‘input’ is changed or transformed into some new form of that same entity ‘output’ (Checkland & Scholes, 1990). Besides identifying the worldview and the transformation process, there are the three E’s to consider as stated by Checkland and Scholes (1990):

E1: Efficacy. Does the system work? Is the transformation achieved?

E2: Efficiency. A comparison of the value of the output of the system and the resources needed to achieve the output; in other words, is the system worthwhile?

E3: Effectiveness. Does the system achieve its longer-term goals?
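The output of this stage for one stakeholder could be recorded as follows. This is a hedged sketch: the `RootDefinition` class, its field names, and the example content are assumptions made for illustration, and only the worldview, the input-to-output transformation, and the three E's come from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RootDefinition:
    stakeholder: str
    worldview: str
    input_entity: str    # the entity before the transformation
    output_entity: str   # the same entity in its transformed form
    efficacy: str        # E1: does the system work?
    efficiency: str      # E2: output value compared with resources used
    effectiveness: str   # E3: are longer-term goals achieved?

    def transformation(self) -> str:
        return f"{self.input_entity} -> {self.output_entity}"

    def criteria(self) -> dict[str, str]:
        return {
            "E1 efficacy": self.efficacy,
            "E2 efficiency": self.efficiency,
            "E3 effectiveness": self.effectiveness,
        }


# Invented example for a hypothetical 'end users' stakeholder group.
end_user_view = RootDefinition(
    stakeholder="end users",
    worldview="The new system should reduce routine clerical work",
    input_entity="records maintained manually",
    output_entity="records maintained through the new system",
    efficacy="Records can be created and retrieved through the system",
    efficiency="Time spent per record compared with the manual process",
    effectiveness="Staff time is released for non-clerical duties",
)

print(end_user_view.transformation())
for label, criterion in end_user_view.criteria().items():
    print(f"{label}: {criterion}")
```

One such record would be produced per stakeholder, so that conflicting worldviews sit side by side rather than being merged into a single view.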

Stage Three: Measures of Performance Identification

According to Checkland’s Formal Systems Model, every human activity system must have some ways of evaluating its performance, and ways of regulating itself when the desired performance is not being achieved. This final stage aims at identifying appropriate Measures of Performance related to the criteria identified in the earlier stage.

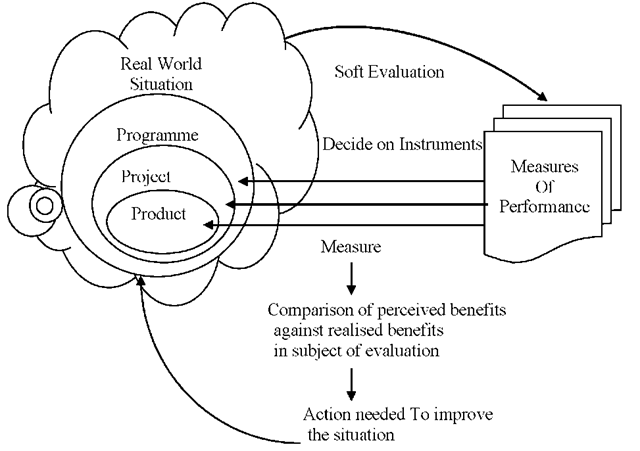

Figure 2. Generic process of soft evaluation

For each criterion of the three Es identified in the earlier stage, it is important to identify the measure of performance that would allow us to judge the extent to which the system has achieved its objectives.

Figure 2 provides a diagrammatic representation of the generic process of soft evaluation, showing the relationship between the different tools used to investigate the success or failure of an IT intervention.
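As an illustration of this final stage, the sketch below attaches measures of performance to the three criteria for each stakeholder and reports any criterion still lacking a measure. It is one possible way to organise the bookkeeping, not the author's instrument: the `Measure` class, the `tangible` flag, the `missing_criteria` helper, and the example measures are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

CRITERIA = ("efficacy", "efficiency", "effectiveness")


@dataclass(frozen=True)
class Measure:
    stakeholder: str
    criterion: str     # one of CRITERIA
    description: str
    tangible: bool     # True for 'hard' measures, False for 'soft' ones

    def __post_init__(self) -> None:
        if self.criterion not in CRITERIA:
            raise ValueError(f"unknown criterion: {self.criterion}")


def missing_criteria(
    measures: list[Measure], stakeholders: list[str]
) -> list[tuple[str, str]]:
    """Return (stakeholder, criterion) pairs that have no measure yet."""
    covered = {(m.stakeholder, m.criterion) for m in measures}
    return [(s, c) for s in stakeholders for c in CRITERIA if (s, c) not in covered]


# Invented measures for two hypothetical stakeholder groups.
measures = [
    Measure("end users", "efficacy", "Share of records handled by the system", True),
    Measure("end users", "efficiency", "Minutes spent per record", True),
    Measure("end users", "effectiveness", "Reported satisfaction with workload", False),
    Measure("senior management", "efficiency", "Running cost per record", True),
]

gaps = missing_criteria(measures, ["end users", "senior management"])
print(gaps)
```

A non-empty result signals that the evaluation would judge some stakeholder's transformation on fewer than three criteria, which is the imbalance the method is meant to avoid.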

CONCLUSION

This proposed IT evaluation framework is refined from an earlier work by Abu-Samaha and Wood (1999) to reflect the layered nature of IT interventions. The approach advocated here is founded on the interpretive paradigm (Burrell & Morgan, 1979) and brings together formal work in evaluation (Patton, 1986; Rossi & Freeman, 1982) with a qualitative process of investigation based on Soft

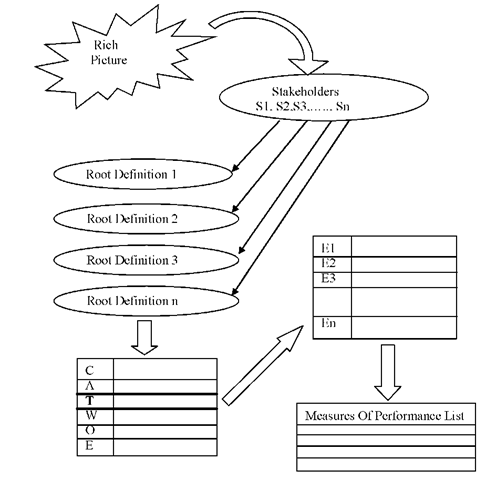

Systems Methodology (Checkland & Scholes, 1990) in order to allow us to make judgements about the outcomes of an implementation from a number of different viewpoints or perspectives. The literature survey has revealed three levels of IS/IT intervention evaluation. Measures would differ at each level of analysis, prompting a different instrument as well as a different process of evaluation. Figures 3 and 4 provide a diagrammatic representation of the soft evaluation method and framework, where the three layers of IT intervention-product, project, and programme-have been included in the evaluation process.

Relevance is of paramount importance for IT and IS evaluation. The main methodological problem in evaluating any project is to choose the right indicators for the measurement of success, or lack of it. The earlier classification of product, project, and programme evaluation shows that a different set of indicators or measures of performance will be chosen at each level or layer of the IT intervention, which adds further to the relevance of the chosen measures. Another important aspect of choosing indicators or measures of performance is to choose the relevant measures that add value to a particular person or group of persons. Non-economic as well as economic measures are of equal importance. The proposed approach gives equal consideration to the tangible as well as the intangible benefits of IT intervention by identifying both efficacy and effectiveness measures along with efficiency measures.

Figure 3. Soft evaluation framework in use

KEY TERMS

Effectiveness: A measure of performance that specifies whether the system achieves its longer-term goals or not.

Efficacy: A measure of performance that establishes whether the system works or not, that is, whether the transformation of an entity from an input stage to an output stage has been achieved.

Efficiency: A measure of performance based on comparison of the value of the output of the system and the resources needed to achieve the output; in other words, is the system worthwhile?

IS Success: The extent to which the system achieves the goals for which it was designed. In other words, IS success should be evaluated on the degree to which the original objectives of a system are accomplished (Oxford Dictionary).

Post-Implementation Evaluation: The critical assessment of the degree to which the original objectives of the technical system are accomplished through the systematic collection of information about the activities, characteristics, and outcomes of the system/technology for use by specific people to reduce uncertainties, improve effectiveness, and make decisions with regard to what those programs are doing and affecting (Patton, 1986).

Product Evaluation: The emphasis at this level is on the technical product, software, IT solution, or information system. The concern at this level is to measure to what extent the IT solution meets its technical objectives. The emphasis here is on efficacy: Does the chosen tool work? Does it deliver?

Programme Evaluation: The concern at this level is to measure whether the programme aims have been met and whether the correct aims have been identified.

Project Evaluation: The emphasis at this level is on the project, which represents the chosen objective(s) to achieve the aims of the programme. The concern at this level is to measure whether the project meets these objectives and to what extent it matches user expectations. The emphasis here is on efficiency. Does the project meet its objectives using minimal resources?

Soft Systems Methodology: A general problem-solving tool, based on systems theory, that provides us with the ability to cope with multiple, possibly conflicting viewpoints. Systems theory attempts to study the wider picture: the relation of component parts to each other and to the whole of which they are a part. SSM uses systems as epistemological devices to provoke thinking about the real world.

Stakeholder: Any individual, group, organisation, or institution that can affect as well as be affected by an individual’s, group’s, organisation’s, or institution’s policy or policies (Mitroff & Linstone, 1993).