I cannot allocate enough memory for the JVM!

A common problem developers run into is being unable to allocate enough memory for server Java applications running on 32-bit machines.

The issue is not specific to the JVM: on a 32-bit machine, a process cannot address more than 4 GB (2^32 possible memory locations).

Unfortunately, that’s just the beginning; part of the 4 GB address space is reserved for the OS kernel. On normal consumer versions of Windows, the limit drops down to 2 GB. On Linux the limit is 3 GB per process.
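The arithmetic behind these limits can be sketched in a few lines of Java. This is only an illustration: the class and method names are hypothetical, and the kernel reservations (2 GB on Windows, 1 GB on Linux) are simply derived from the per-process limits quoted above.

```java
import java.math.BigInteger;

public class AddressSpace {
    static final long GB = 1L << 30;

    // Total number of bytes addressable with pointers of the given width.
    static BigInteger addressableBytes(int pointerBits) {
        return BigInteger.ONE.shiftLeft(pointerBits);
    }

    // Address space (in GB) left to a process once the kernel has reserved its share.
    static long userSpaceGb(int pointerBits, long kernelReservedGb) {
        long totalGb = addressableBytes(pointerBits)
                .divide(BigInteger.valueOf(GB))
                .longValueExact();
        return totalGb - kernelReservedGb;
    }

    public static void main(String[] args) {
        System.out.println("32-bit address space: " + userSpaceGb(32, 0) + " GB");
        // Assumed reservations, derived from the limits quoted in the text.
        System.out.println("Windows, 2 GB reserved: " + userSpaceGb(32, 2) + " GB");
        System.out.println("Linux, 1 GB reserved: " + userSpaceGb(32, 1) + " GB");
    }
}
```

Keep in mind that these figures are upper bounds on the address space, not on the heap: the JVM still needs a contiguous region inside that space.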

Finally, of the remaining address space you can use only the largest contiguous chunk available. That’s because the JVM needs to reserve a single, unfragmented region of virtual memory for the heap.

That’s mostly an issue on the Windows platform, where optimizations aimed at minimizing the relocation of DLLs during linking make it more likely that you end up with a fragmented address space.

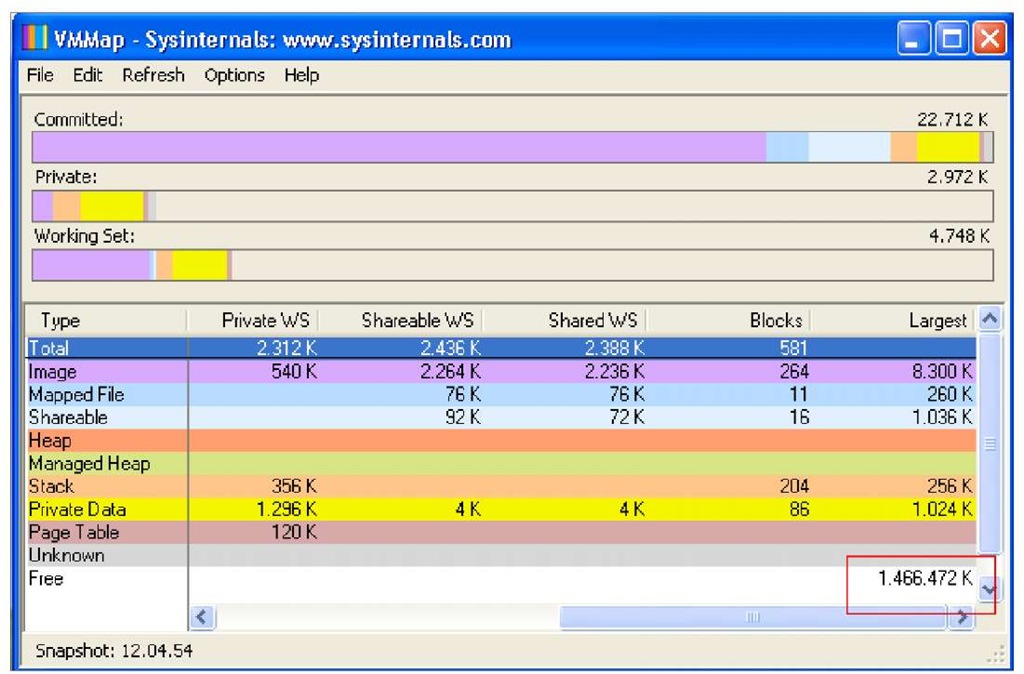

As evidence of these claims, examine the following picture taken from the VMMap utility (http://technet.microsoft.com/en-us/sysinternals/dd535533.aspx), which shows the largest chunk of virtual memory available on a Windows XP machine with 2 GB of RAM.

As you can see in the following screenshot, barely 1.4 GB is left for a single process:

In the end, on a 32-bit Windows machine, you will hardly be able to allocate more than 1.5 GB. On a 32-bit Linux, you are a bit luckier and you can allocate about 2 GB. On Solaris, the limit is set to about 3.5 GB.

What are your alternatives if you need to allocate a larger set of memory? Actually, there are a few options:

• The most obvious one is to move to a 64-bit machine. On a 64-bit machine, the problem is nonexistent because you have 2^64 possible locations and you can theoretically address up to 16 exabytes (an exabyte is roughly 1 billion GB).

• If you cannot afford to switch to 64-bit machines but would be fine with just a little more heap (let’s say 2 GB on Windows), then you can consider switching to Oracle’s JRockit Virtual Machine, which introduced support for split heaps. This means that you don’t need to allocate a contiguous chunk of memory, a requirement that is particularly restrictive on Windows platforms (you can download JRockit here: http://www.oracle.com/technology/products/jrockit/index.html).

• The last option available for Enterprise applications, which we strongly suggest you consider, is scaling your system configuration horizontally. By defining a cluster of application servers, the load is split across several JVMs, which reduces the need for very large heaps. If you still need a very large heap (over 2 GB) because of the inherent characteristics of your application, remember that the time to complete a garbage collection cycle grows in direct proportion to the size of the heap. So, if you have to deal with a huge 64-bit heap, a good rule is to keep the heap as large as needed, but no larger.

Where do I configure JVM settings in JBoss AS?

If you need to change the default JVM settings for your application server you should find the start-up script and search for the environment variables containing the Java options.

In JBoss application server, the start-up script is contained in the JBOSS_HOME/bin folder of your distribution. What differs slightly between releases is the file that contains the default JVM settings.

In earlier JBoss releases (JBoss AS 4.x) the Virtual Machine’s options were contained in the application server’s start-up script (run.sh for Linux and run.bat for Windows machines).

Since JBoss AS 5, the environment variables and Java options are configured in a separate file named run.conf (Linux) or run.conf.bat (Windows). You can customize your JVM settings in this file.
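After editing the start-up configuration, it can be useful to confirm which options the running JVM actually received. The following is a minimal sketch using the standard java.lang.management API; the class name JvmSettings is hypothetical, and how you run the check inside the application server (for example from a test servlet) is left as an assumption.

```java
import java.lang.management.ManagementFactory;
import java.util.List;

public class JvmSettings {
    // Maximum amount of memory the JVM will attempt to use for the heap, in MB.
    static long maxHeapMb() {
        return Runtime.getRuntime().maxMemory() / (1024 * 1024);
    }

    // Options passed to the JVM at start-up (for example -Xmx or -XX flags).
    static List<String> jvmArguments() {
        return ManagementFactory.getRuntimeMXBean().getInputArguments();
    }

    public static void main(String[] args) {
        System.out.println("Max heap (MB): " + maxHeapMb());
        for (String arg : jvmArguments()) {
            System.out.println("JVM option: " + arg);
        }
    }
}
```

If an option you set in the configuration file does not show up in this list, the server was most likely started from a different script or the variable was overridden elsewhere.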

Sizing the garbage collector generations

The second most influential knob is the amount of the heap dedicated to the young generation. You can set the initial amount of memory granted to the young generation with the -XX:NewSize flag, which can be coupled with -XX:MaxNewSize. For example, a configuration such as -Xms1024m -Xmx1024m -XX:NewSize=448m -XX:MaxNewSize=448m -XX:SurvivorRatio=6 starts a JVM with 448m reserved for the young generation, out of the 1024m available.

The last parameter, -XX:SurvivorRatio, specifies the ratio between the size of Eden and the size of each survivor space. In this example, setting the survivor ratio to 6 means that Eden receives 6 units of space while each of the two survivor spaces receives 1 unit, so each survivor space receives 1/8 of the young generation. A ratio of 8 equates to 1/10, and so on.

This may seem a little complicated; just remember that you should stick to a ratio between 6 and 10. The higher the ratio, the smaller the survivor spaces.
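The survivor ratio arithmetic can be checked with a small calculation. The sketch below applies the rule described above (Eden gets as many units as the ratio, each survivor space gets one) to the 448m young generation of the example. The class and method names are hypothetical, and a real JVM may round the resulting sizes to its own alignment boundaries.

```java
public class SurvivorSizing {
    // With a survivor ratio r, the young generation is split into r + 2 units:
    // r units for Eden and 1 unit for each of the two survivor spaces.
    static long survivorSpaceMb(long youngMb, int survivorRatio) {
        return youngMb / (survivorRatio + 2);
    }

    // Eden is what remains once both survivor spaces are carved out.
    static long edenMb(long youngMb, int survivorRatio) {
        return youngMb - 2 * survivorSpaceMb(youngMb, survivorRatio);
    }

    public static void main(String[] args) {
        long youngMb = 448;
        int ratio = 6;
        System.out.println("Each survivor space: " + survivorSpaceMb(youngMb, ratio) + " MB");
        System.out.println("Eden: " + edenMb(youngMb, ratio) + " MB");
    }
}
```

With a 448m young generation and a ratio of 6, each survivor space comes out at 56 MB (1/8 of the young generation) and Eden at 336 MB.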

Which is the correct ratio between the young and old generations?

The theory says that you should grant plenty of memory to the young generation for applications that create lots of short-lived objects and, on the other hand, reserve more memory for the tenured generation for applications that make use of long-lived objects such as pools or caches.

So, provided that you have decided the maximum heap size you can afford to give the JVM, you can plot your performance metric against different young generation sizes to find the best setting.

In most cases, you will find that the optimal size of the young generation ranges from 1/3 of the total heap up to 1/2 of the heap. Growing the young generation further becomes counterproductive once it reaches half of the total heap (or even earlier), since the JVM can no longer rely on a copy collection and falls back to a full garbage collection instead. This constraint is also known as the young generation guarantee.

What is the young generation guarantee?

To ensure that the minor collection can complete even if all the objects are live, enough free memory must be reserved in the tenured generation to accommodate all the live objects arriving from the survivor spaces. When there isn’t enough memory available in the tenured generation to accommodate all these objects, a major collection will occur instead.
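A deliberately simplified model of this rule is sketched below. It assumes the worst case described above, where every object in the young generation is live and has to be promoted, and it ignores everything else the collector takes into account. The class name, method names and sample figures are hypothetical.

```java
public class YoungGenGuarantee {
    // Worst case: all objects in the young generation survive and must be promoted,
    // so the free space in the tenured generation must be at least as large.
    static boolean minorCollectionPossible(long tenuredFreeMb, long youngSizeMb) {
        return tenuredFreeMb >= youngSizeMb;
    }

    // Free tenured space for a given heap layout (all sizes in MB).
    static long tenuredFreeMb(long heapMb, long youngSizeMb, long tenuredUsedMb) {
        return (heapMb - youngSizeMb) - tenuredUsedMb;
    }

    public static void main(String[] args) {
        long heapMb = 1024;
        long youngMb = 448;
        // 100 MB already tenured: 476 MB free, enough for a minor collection.
        System.out.println(minorCollectionPossible(tenuredFreeMb(heapMb, youngMb, 100), youngMb));
        // 200 MB already tenured: 376 MB free, so a major collection would occur instead.
        System.out.println(minorCollectionPossible(tenuredFreeMb(heapMb, youngMb, 200), youngMb));
    }
}
```

The model also shows why an oversized young generation hurts: the larger the young generation, the smaller the tenured generation and the sooner the guarantee fails.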

The survivor spaces should also be sized for optimal performance. If you don’t provide any value for them, they are allocated only 1/34 of the young generation, which translates into a survivor space of 1-2 MB. In practical terms, this means that if you unwisely allocate a large object, it will move to the tenured space within a few garbage collection cycles.

The suggested size for each survivor space ranges between 1/8 and 1/12 of the young generation, which corresponds to a survivor ratio between 6 and 10. Things will get easier with practical examples so there is no need to worry.

The image below shows an example of a Java heap configuration with a young generation that is too small. What happens here is that, since the young generation fills up quickly, short-lived objects are tenured prematurely. They will be collected by a costly major collection, whereas with a larger young generation a minor collection could have reclaimed them.

In the next case, we have set a young space over half of the total heap. Since there is not enough space to accommodate all the live objects promoted to the tenured generation, there will be frequent major collections.

At the end of this topic we have included a use case, which will show how you can find the correct balance between the two generations with some simple trials.

The garbage collector algorithms

The third important knob, which can influence the performance of your JVM, is the garbage collector algorithm, which determines how the garbage collection process is executed. There are three kinds of garbage collectors which can be used:

• Serial collector

• Parallel collector

• Concurrent collector

Actually, there is a fourth one, called the G1 collector, available since Java SE 6 update 14. We will discuss it in a while.

The serial collector performs garbage collection using a single thread, which stops other JVM threads. While it is a relatively efficient collector, since there is no communication overhead between threads, it cannot take advantage of multiprocessor machines. So it is best suited to single processor machines.

The following image summarizes how the serial collector works, by stopping all other threads until the collection has terminated.

The serial collector is selected by default on hardware and operating system configurations that are not classified as server class machines, or can be explicitly enabled with the option -XX:+UseSerialGC.

The parallel collector (also known as the throughput collector) performs minor collections in parallel, which can significantly improve the performance of applications having lots of minor collections.

As you can see from the next picture, the parallel collector still requires a so-called stop-the-world activity. However, since the collections are performed in parallel, using many CPUs, it decreases garbage collection overhead and hence increases the application throughput.

The parallel collector is selected by default on server class machines, or can be explicitly enabled with the option -XX:+UseParallelGC.

Since the release of J2SE 5.0 update 6 you can benefit from a feature called parallel compaction that allows the parallel collector to also perform major collections in parallel. Without parallel compaction, major collections are performed using a single thread, which can significantly limit scalability.

This collector includes a compaction phase where the garbage collector identifies the regions that are free and uses its threads to copy data into those regions. This produces a heap that is densely packed on one end with a large empty block on the other end. In practice this helps to reduce the fragmentation of the heap, which is crucial when you are trying to allocate large objects.

Parallel compaction is enabled by adding the option -XX:+UseParallelOldGC to the command line.

The last collector we will cover, the concurrent collector (CMS), performs most of its work concurrently (that is, while the application is still running) to keep garbage collection pauses short.

Basically, this collector consumes processor resources for the purpose of having shorter major collection pause times. This can happen because the concurrent collector uses a single garbage collector thread that runs simultaneously with the application threads. Thus, the purpose of the concurrent collector is to complete the collection of the tenured generation before it becomes full.

The following image gives an idea of how the trick is performed:

At first the collector identifies the live objects that are directly reachable (initial mark). Then it marks all the live objects reachable from them while the application is still running (concurrent marking). A subsequent phase named remark is needed to revisit objects modified during the concurrent marking phase. Finally, the concurrent sweep phase reclaims all the objects that have not been marked as live.

The flip side is that this technique, which is used to minimize pauses, can reduce overall application performance. Hence, it is designed for applications whose response time is more important than overall throughput.

The concurrent collector is enabled with the option -XX:+UseConcMarkSweepGC.
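Whichever option you choose, you can verify which collectors the JVM actually activated by querying the standard GarbageCollectorMXBean interface. The following is a minimal sketch; the class name ActiveCollectors is hypothetical, and the collector names reported depend on the JVM vendor, release and the flags in use.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;

public class ActiveCollectors {
    // Names of the collectors registered in the running JVM,
    // typically one for the young generation and one for the tenured generation.
    static List<String> collectorNames() {
        List<String> names = new ArrayList<>();
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            names.add(gc.getName());
        }
        return names;
    }

    public static void main(String[] args) {
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            // Collection count and accumulated time (ms) since the JVM started;
            // either value can be -1 if the collector does not report it.
            System.out.println(gc.getName()
                    + ": collections=" + gc.getCollectionCount()
                    + ", time(ms)=" + gc.getCollectionTime());
        }
    }
}
```

Running the same class with different collector options and comparing the collection counts and times is a simple way to see how each algorithm behaves under your workload.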

![tmp39-67_thumb[2] tmp39-67_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp3967_thumb2_thumb.jpg)

![tmp39-68_thumb[2] tmp39-68_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp3968_thumb2_thumb.jpg)

![tmp39-69_thumb[2] tmp39-69_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp3969_thumb2_thumb.jpg)

![tmp39-70_thumb[2] tmp39-70_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp3970_thumb2_thumb.jpg)

![tmp39-71_thumb[2] tmp39-71_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp3971_thumb2_thumb.jpg)