We have demonstrated experimental and theoretical data indicating that a single neuron may be not only the minimal structural unit but also the minimal functional unit of a neural network. The ability of a neuron to learn is based on the selective plasticity of its excitable membrane. In the brain, neurons are integrated into complex networks by means of synaptic connections. As we have demonstrated, reorganizations of both synaptic processes and excitability are essential for learning, so it is important to evaluate the correspondence between plasticity of the excitable membrane and synaptic plasticity. Both forms of plasticity may recognize an input signal as a whole. A chemical input signal triggers modification of voltage-gated channels and activates them, while the excitable membrane in its turn affects its own synaptic input. As a result, the waveform of a generated AP depends on chemical calculations that have already been completed. Do these calculations affect the target neuron, too?

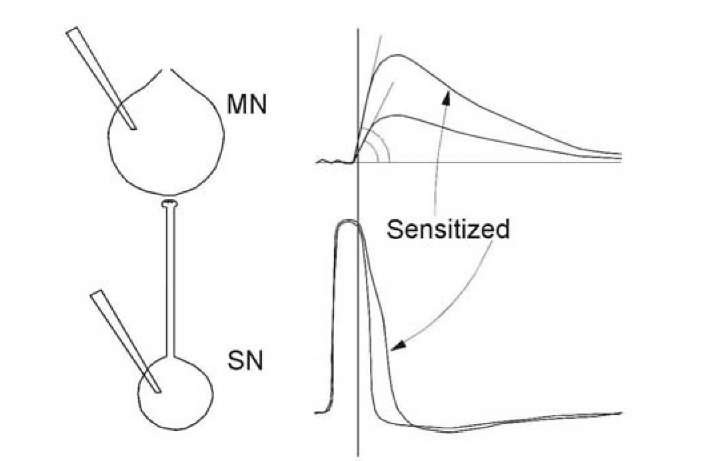

This cannot be asserted with certainty, but some data support such a notion. First, a selective decrease of AP amplitude in neurons of the frog olfactory bulb [1253] affects the olfactory cortex of the forebrain. The reduced somatic AP somehow exerts an effect on the axon and on the monosynaptic output reaction. This effect is also selective: after adequate stimulation of the olfactory epithelium, direct stimulation of the olfactory nerve produces a smaller monosynaptic reaction in the olfactory bulb neurons and in the target neurons in the forebrain, while direct stimulation of the olfactory tract (the axons of the same neurons) does not evoke a standard reaction in the forebrain. In addition, high-frequency stimulation of the mossy fiber axons in the cerebellum [54] or of the Schaffer collateral pathway in the hippocampus [1222, 586, 446] induced an increase in excitability through a monosynaptic connection in the target neurons. One may doubt whether the AP waveform determines the postsynaptic reaction evoked by the AP, since a mismatch may exist between AP broadening and the increase in the monosynaptic EPSP slope [547]. However, this result is not unexpected, since AP broadening occurs after the moment when the EPSP slope has already been formed (line in Fig. 1.35).

Synaptic delay is short, so only a rapid alteration in the leading edge of an AP can allow the excitable membrane to participate in a selective change of the next EPSP evoked by this AP.

Fig. 1.35. Correspondence between AP duration in the presynaptic neuron (SN) and the EPSP in the postsynaptic target neuron (MN) (left). Right: sensitization evokes AP broadening in the presynapse (bottom) and an increase in EPSP slope in the postsynapse (top). The synaptic delay is shorter than the AP duration; therefore, a cause-and-effect connection between the back front (falling phase) of the AP and the EPSP slope and amplitude cannot be direct.

A change in excitability of the postsynaptic neuron may also exert a reverse influence on the activity of its own synaptic inputs and thus regulate the synaptic potentials it receives. Neuronal excitability depends on the distribution and properties of ion channels in the neuronal membrane, and activation or inhibition of voltage-dependent channels may correspondingly augment or diminish the amplitude of the postsynaptic potentials, both excitatory (EPSP) and inhibitory (IPSP), converging on a given neuron. The specific effect of tetanic stimulation depends on a complex interaction of the stimulated synapse with all the other synapses onto the common target cell, and it appears that the postsynaptic cell somehow regulates its presynaptic inputs [1359]. Presumably, Na+ channels not only amplify the generator potential in the subthreshold voltage range before each action potential; they also depolarize cellular membranes, unblock NMDA receptors and increase the EPSP [319]. So a specific change in excitability may control the modification of responses to a specific synaptic pattern, but this control concerns only the later part of the synaptic potentials, since the slope is produced before activation of voltage-gated channels in the same neuron, analogously to what we have described for the influence on the target neuron (Fig. 1.35).

A parallel potentiation of synaptic activity and neuronal excitability results in enhanced input-output coupling in cortical neurons [670, 291, 997]. The time courses of EPSP potentiation and of the change in excitability may differ [54, 1367]. The correspondence between processes in presynaptic and target neurons might depend on involvement of the soma of the presynaptic neuron in the process, as happens under natural conditions. For example, during habituation in mollusks, different branches of presynaptic neurons produce synchronous fluctuations of unitary EPSPs in different postsynaptic neurons [760]. However, the correlation between a change in presynaptic excitability during learning and the modification of the reaction in the postsynaptic neuron is not a simple one-to-one correspondence.

It was established [1258, 1270] that the first appearance of APs during pairing in response to an initially ineffective stimulus does not correlate with a decrease in AP threshold but is determined by an increase in the EPSP. Continued pairing after the first appearance of an AP results in a decrease in the threshold of that response. The presence of an AP in the current response may favor reorganization of thresholds in the following responses. This means that the first AP generated in response to a previously ineffective stimulus is unlikely to be due to local reorganization of excitability in the given neuron; it is determined by synaptic plasticity and, perhaps, by reorganization of excitability in the presynaptic neuron. After its first appearance during conditioning, the AP was generated sporadically, and the growth of excitability could make AP generation more reliable. This is probably why the dynamics of the number of APs were similar to the dynamics of the AP threshold at the end of acquisition, at the beginning of extinction and during reacquisition, when the probability of AP failure was minimal. At the end of extinction, when this agreement was worst, AP failure was maximal (Fig. 1.20).

Although the initial appearance of APs during training is determined by EPSP augmentation, and reorganization of excitability participates only at the latest stage of training, it must be emphasized that while a signal induces an EPSP in the recorded neurons, the presynaptic neurons generate APs in response to the same signal. Once an AP is generated, the change in excitability develops rather rapidly. Therefore, EPSP plasticity in the postsynaptic neuron may be accompanied by a preceding change in excitability in the presynaptic neuron [1253, 641, 446].

The kinetics of presynaptic action potentials are modified after learning [245, 1314, 1351, 71, 687, 1359], and an axon may generate APs of different waveforms [826, 1314, 1360]. It also cannot be excluded that a change in axonal conduction velocity during learning results from a change in cellular excitability [1351]. Such alterations in the axon may change synaptic efficacy in the postsynaptic cell. Hence, a modification of excitability in a presynaptic neuron, at least in some cases, is accompanied by changes in the monosynaptic responses of the target neurons; whether a cause-and-effect relation exists between these two events remains a subject for further investigation. Na+ channels in presynaptic terminals show faster inactivation kinetics than somatic channels [362]. Therefore, a subtle alteration of AP amplitude in the neuronal soma [1266, 1272] may produce an augmented transformation of the Na+ channels, the axonal spike and Ca2+ inflow in the presynaptic boutons, thus affecting synaptic transmission to the target neuron. Consequently, compared with a small somatic change in excitability, modification of voltage-dependent channels in presynaptic terminals must be more efficient for controlling transmitter secretion.

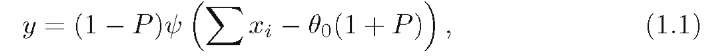

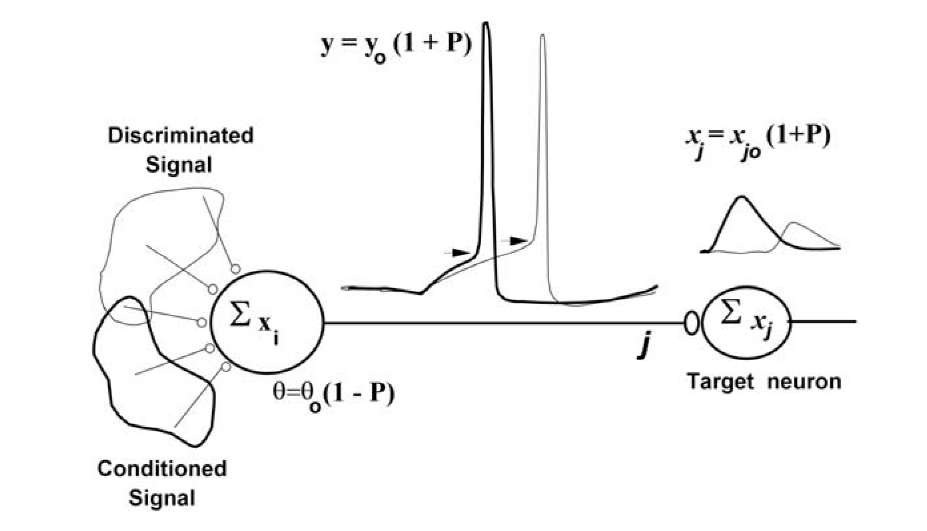

We may conclude that a neuron generates a more powerful AP when a stimulus of greater biological significance acts. An action potential is an output signal; a more powerful AP is a more potent output signal and may generate a stronger postsynaptic potential in the postsynaptic target neurons. When the input signal is suprathreshold, the output reaction is a graded function of its biological significance. The biological significance of a signal can be assessed by calculating the prediction P, whose magnitude |P| is the fraction of rewards or punishments the animal received in the past after AP generation in this neuron. Punishment is considered a negative reward. A negative prediction P means that, in the past, AP generation led to punishment or to the absence of reward. In the future, this should lead to an increase in the threshold and a decrease in the probability of AP generation. If an AP is nevertheless generated in this case, its amplitude will be decreased, and the efficacy of activating the next neuron will decrease as well. The same value of the prediction P controls the threshold and the output reaction and affects the response of the target neurons. Therefore, the prediction P is formed in the neuron, propagated to the target cells (Fig. 1.36) and related to the statistical significance of the reward expectation. We may express the state of the activation function of a neuron as follows:

where P is the prediction of an expected reward and $\Phi$ is a binary threshold function: if the postsynaptic response $\sum_i x_i$ exceeds the threshold, $\Phi = 1$; otherwise $\Phi = 0$. When the neuron has no memory, the threshold $\theta_0$ = const. The function $\Phi(V)$ was computed by using the Hodgkin-Huxley equations; in the simplest case, it is a binary threshold function. The prediction computed by a neuron modulates its threshold and the magnitude of its output signal and is thus transmitted to the postsynaptic target neuron (Fig. 1.36), which will change its input signal in accordance with the prediction P.
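The following Python sketch illustrates one simple form consistent with the text and with the caption of Fig. 1.36; it is an illustration under stated assumptions, not the author's model. It assumes a hypothetical encoding in which each past AP was followed by +1 (reward) or -1 (punishment or absence of an expected reward), with P taken as the mean outcome. It also assumes that the threshold scales as θ = θ0(1 - P), so a positive prediction lowers it and a negative one raises it, and that the output amplitude scales as y0(1 + P), mirroring X_j = X_j0(1 + P) in the target neuron. The function names and both scalings are hypothetical, and Φ is taken in its simplest binary form rather than computed from the Hodgkin-Huxley equations.

```python
from typing import Sequence


def prediction_from_history(outcomes: Sequence[int]) -> float:
    """Estimate the prediction P from outcomes that followed past APs.

    Hypothetical encoding: +1 = reward, -1 = punishment or absence of reward.
    P is the mean outcome, so -1 <= P <= 1; P = 0 when there is no memory.
    """
    if any(o not in (-1, 1) for o in outcomes):
        raise ValueError("outcomes must be +1 or -1")
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def neuron_output(inputs: Sequence[float], weights: Sequence[float],
                  prediction: float, theta0: float = 1.0, y0: float = 1.0) -> float:
    """Output amplitude of a prediction-modulated binary threshold neuron (assumed form)."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    v = sum(w * x for w, x in zip(weights, inputs))  # postsynaptic response
    # Assumption: theta = theta0 * (1 - P); P > 0 lowers the threshold, P < 0 raises it.
    theta = theta0 * (1.0 - prediction)
    fired = 1 if v > theta else 0  # binary threshold function Phi
    # Assumption: AP amplitude scales by (1 + P), mirroring X_j = X_j0 * (1 + P).
    return fired * y0 * (1.0 + prediction)
```

For example, three rewarded APs out of four give P = 0.5. A neuron with weights [1, 1] receiving [0.5, 0.5] (response 1.0) stays silent with no memory, because the response does not exceed θ0 = 1. At P = 0.5 the threshold drops to 0.5, and the neuron fires with amplitude 1.5.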

We may consider the input signal of a neural network as analogous to the conditioned, or discriminative, stimulus, and the difference between the desired and the actual output of the network as analogous to the unconditioned stimulus.

Fig. 1.36 shows a neuron that decreases its threshold when a significant stimulus acts. The change in excitability in the presynaptic neuron is accompanied by changes in the output reaction in its axon. A more powerful spike produces a more potent output signal and generates a stronger postsynaptic potential in the target neurons. Thus, the output reaction of neurons ensures transmission of the prediction of the reward.

Neural networks consisting of neurons based on pairwise ("twin") interactions of their inputs are second-order networks. Such networks can solve much more complicated problems than first-order networks. In particular, second-order networks may be invariant to translation, rotation and scaling of the input signal [965].
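To make the notion concrete, the Python sketch below reads "twin interactions" as products x_i x_j of pairs of inputs, which is the usual meaning of a second-order unit. The weights are hand-picked and purely illustrative. They show that a single second-order threshold unit computes XOR, a function no single first-order threshold unit can represent. The invariance properties cited above are not demonstrated by this toy example.

```python
from itertools import combinations
from typing import Dict, Sequence, Tuple


def second_order_unit(x: Sequence[int], w1: Sequence[float],
                      w2: Dict[Tuple[int, int], float], theta: float) -> int:
    """Binary threshold unit with first-order and pairwise (second-order) terms."""
    s = sum(w * xi for w, xi in zip(w1, x))
    # Pairwise products x_i * x_j (i < j) are what make the unit second order.
    s += sum(w2.get((i, j), 0.0) * x[i] * x[j]
             for i, j in combinations(range(len(x)), 2))
    return 1 if s > theta else 0


# Hypothetical hand-picked weights: this single unit computes XOR.
XOR_W1 = [1.0, 1.0]
XOR_W2 = {(0, 1): -2.0}
XOR_THETA = 0.5
```

For input (1, 1) the first-order terms sum to 2, and the pairwise term subtracts 2, leaving 0, which is below the threshold. Each single active input gives 1, which exceeds it.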

Fig. 1.36. Forward propagation of a prediction. Threshold $\theta_0$ and output signal $y_0$ correspond to the absence of memory in the presynaptic (left) neuron. $x_i$ ($i = 1, \dots, N$) are the inputs of this neuron, which in general are graded. $X_j = X_{j0}(1+P)$ is a component of the input signals of the corresponding target neuron, and $X_{j0}$ is this input signal when the prediction within the presynaptic neuron is $P = 0$. Neurons use a prediction P to tune their own excitability and modulate their own output signal.

We have constructed a network algorithm (forward propagation of prediction) according to the following rules: 1) learning is a largely local process within individual neurons (chemical model); 2) the network error is generally a common factor for all neurons, and for each neuron it is proportional to the number of its connections with the network output; 3) a change of excitability in presynaptic neurons is accompanied by changes in the monosynaptic responses of the postsynaptic neurons [1262].
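Rule 2 requires, for every neuron, the number of its connections with the network output. The minimal Python sketch below assumes a feed-forward network described by a hypothetical adjacency map from each node to its successors. It counts the directed paths from each node to a single output node by memoized recursion. Treating "number of connections with the network output" as a path count is an assumption.

```python
from typing import Dict, List


def paths_to_output(successors: Dict[str, List[str]], output: str) -> Dict[str, int]:
    """Count directed paths from every node to `output` in a feed-forward (acyclic) net.

    `successors` maps a node to the nodes it projects to (hypothetical format).
    Nodes with no route to the output get a count of 0.
    """
    memo: Dict[str, int] = {output: 1}  # the output reaches itself by one trivial path

    def count(node: str) -> int:
        if node not in memo:
            # Paths from a node = sum of paths from each of its successors.
            memo[node] = sum(count(nxt) for nxt in successors.get(node, []))
        return memo[node]

    for node in successors:
        count(node)
    return memo
```

In a fully connected 2-2-1 network, each hidden node has one path to the output and each input node has two. Under rule 2, a first-layer neuron would therefore receive twice the error share of a hidden neuron.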

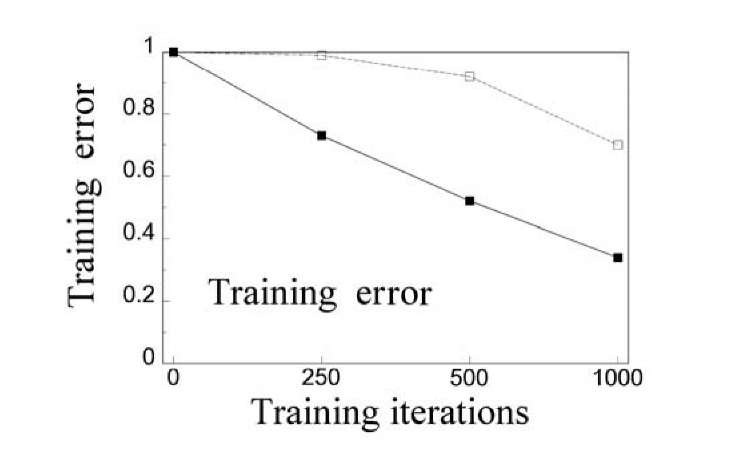

Our algorithm does not use chained derivatives, and the expressions for the corrections to the network weights include only the neuron inputs and the global network error. The prediction is transmitted from one neuron to another, but each neuron corrects the prediction according to its own experience. The global error acts equally on all nodes, which makes the learning scheme biologically plausible and rather suitable for large applications. However, the algorithm is generally not fully independent of the network structure, although this dependence is much weaker than in back-propagation. It reflects not the structure of the connections between nodes, but only the number of paths ex-

Fig. 1.37. The plot of the averaged training errors against the training iterations for error back-propagation (dotted line) and forward propagation of prediction (solid line). The network consisted of five (three hidden) layers. The connections linked the nodes from one layer to the nodes in the next layer. The number of nodes in each layer was 20.

The forward propagation of prediction algorithm was tested on a letter and figure recognition problem using a two-layer neural network with 20 neurons in the first layer and a single neuron in the second layer [1262]. Each image was represented by a 20×20 matrix with binary elements. The algorithm found the exact solution after several hundred presentations of the learning sequence. The influence of pattern distortion on recognition accuracy was also investigated. Distortion was performed by inverting each pixel of the pattern with a given probability. When the inversion probability was 0.14, the probability of correct recognition of the distorted patterns reached 0.99. The forward propagation of prediction algorithm is simple to implement on a computer, depends only weakly on the structure of the neural network and is closer to its neurobiological analogues than previously known algorithms. Its main feature is that each neuron in the network is adjusted according to its input signal and the overall network error.
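The distortion procedure described above can be sketched in Python. The sketch assumes patterns are stored as nested lists of 0/1 values (for example 20×20) and that a seeded `random.Random` instance is passed in for reproducibility; the function name `distort` is hypothetical. Each pixel is inverted independently with probability p, so at p = 0.14 a 400-pixel pattern has about 56 inverted pixels on average. The recognition network itself is not reproduced here.

```python
import random
from typing import List

Pattern = List[List[int]]


def distort(pattern: Pattern, p: float, rng: random.Random) -> Pattern:
    """Return a copy of a binary pattern with each pixel inverted with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    # rng.random() is uniform on [0, 1), so p = 0 never flips and p = 1 always flips.
    return [[1 - px if rng.random() < p else px for px in row] for row in pattern]
```

Passing an explicit generator instead of using the module-level `random` functions keeps distortion experiments repeatable across runs.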

The properties of such a model neuron resemble those of a neural cell. The increased complexity of the neuron model is more than compensated for by the simplified tuning of the neural network. Tuning each neuron in the network requires no information other than its inputs (which are modulated by the predictions of the presynaptic neurons) and the network error, which is the same for all neurons. A neuron transmits the prediction of a reward, that is, a partially processed signal, to its output. Such neural networks depend only weakly on network structure, so a large neural network may be easily constructed and extended. Finally, it is tempting to suggest that the real brain uses forward propagation of prediction or some similar algorithm in its operations.
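The locality of tuning can be illustrated with a hypothetical weight-correction rule in Python. The rule uses only the neuron's own inputs, the global network error E, and the neuron's path count c from rule 2. Its form, w_i ← w_i + η·c·E·x_i with E = desired minus actual network output, is an assumption in the spirit of the text and not necessarily the update used in [1262]. Unlike back-propagation, no chained derivatives are computed.

```python
from typing import List, Sequence


def update_weights(weights: Sequence[float], inputs: Sequence[float],
                   global_error: float, n_paths: int, lr: float = 0.1) -> List[float]:
    """Hypothetical local correction: w_i <- w_i + lr * n_paths * E * x_i.

    Only the neuron's own inputs, the shared global error E and the neuron's
    number of paths to the output are used; no chained derivatives are needed.
    """
    if len(weights) != len(inputs):
        raise ValueError("weights and inputs must have the same length")
    scale = lr * n_paths * global_error  # same E for every neuron, scaled per rule 2
    return [w + scale * x for w, x in zip(weights, inputs)]
```

For instance, with weights [0.0, 0.5], inputs [1.0, 0.0], E = 1, c = 2 and η = 0.25, only the weight of the active input changes, and it rises to 0.5.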