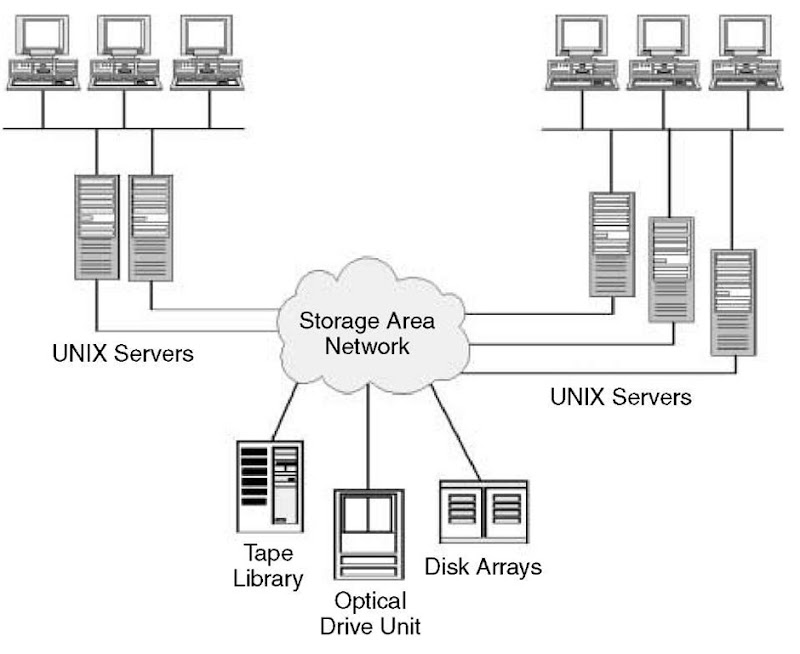

Customer demand for increased performance, availability, and manageability of storage, combined with the emergence of Fibre Channel technology, is driving the convergence of storage and networking architectures. To harness the full capabilities and performance of storage hardware and connectivity, a new network-based storage topology is beginning to emerge: the Storage Area Network (SAN). By providing any-to-any connectivity for storage resources on a dedicated high-speed network, the SAN offloads storage traffic from daily network operations while establishing a direct connection between storage elements and servers (Figure 109).

SAN Concepts

Essentially, a SAN is a specialized network that enables fast, reliable access among servers and external or independent storage resources. In a SAN, a storage device is not the exclusive property of any one server. Rather, storage devices are shared among all networked servers as peer resources. Just as a LAN can be used to connect clients to servers, a SAN can be used to connect servers to storage, servers to each other, and storage to storage.

Figure 109: Storage-Area Network.

SANs provide an open, extensible platform for storage access in data intensive environments like those used for video editing, prepress, OLTP, data warehousing, storage management, and server clustering applications.

SANs offer a number of benefits. The redundancy that is an inherent part of SAN architectures makes high availability more cost-effective for a wider variety of application environments. The pluggable nature of SAN resources (storage, nodes, and clients) makes scaling much easier while preserving ubiquitous data access. And with storage centralized, managing the data for tasks such as optimization, reconfiguration, and backup/restore becomes much easier and more efficient.

SANs are particularly useful for backups. Previously, there were two choices: either a tape drive had to be installed on every server and someone had to go around changing the tapes, or a backup server was created and the data moved across the network, which consumed bandwidth. Performing backup over the LAN can be excruciatingly disruptive and slow. A daily backup can suddenly introduce gigabytes of data into the normal LAN traffic. With SANs, organizations can have the best of both worlds: high-speed backups in a centralized location.

SAN Origins

SANs have existed for years in the mainframe environment in the form of Enterprise Systems Connection (ESCON). In mid-range environments, the high-speed data connection was primarily SCSI (Small Computer System Interface)—a point-to-point connection, which is severely limited in terms of the number of connected devices it can support as well as the distance between devices.

An alternative to network-attached storage was developed in 1997 by Michael Peterson, president of Strategic Research (Santa Barbara, CA). He believed network-attached storage was too limiting because it relied on network protocols and did not guarantee delivery. He proposed that SANs could be interconnected using network protocols such as Ethernet, and the storage devices themselves could be linked via nonnetwork protocols.

In a traditional storage environment, a server controls the storage devices and administers requests and backup. With a SAN, instead of being involved in the storage process, the server simply monitors it. By optimizing the box at the head of the SAN to do only file transfers, users are able to get much higher transfer rates, such as 100 MBps (megabytes per second) via Fibre Channel. Traditional SCSI connections offer transfer rates of only 40 MBps, or 80 MBps with the newer Ultra2 SCSI.

Using Fibre Channel as the connection between storage devices also increases distance options. While traditional SCSI allows only a 25-meter distance (about 82 feet) between machines and Ultra2 SCSI allows only a 12-meter distance (about 40 feet), Fibre Channel supports spans of 10 kilometers (about 6.2 miles). SCSI can connect only up to 16 devices, whereas Fibre Channel can link as many as 126. By combining LAN networking models with the core building blocks of server performance and mass storage capacity, SAN eliminates the bandwidth bottlenecks and scalability limitations imposed by previous SCSI bus-based architectures.

SAN Features

The following are key features of storage-area networks:

■ Storage and archival traffic are routed over a separate network, offloading the majority of data traffic from the enterprise network.

■ Data transfers are fast with Fibre Channel: up to 100 MBps with a single-loop configuration and up to 200 MBps with a dual-loop configuration.

■ A shared data storage pool can be easily accessed by remote workstations and servers.

■ The SAN can be easily expanded to a virtually unlimited size with hubs or switches.

■ Nodes on the SAN can be easily added or removed with minimal disruption to the active network.

■ For totally redundant operation, the SAN can be easily configured to support mission-critical applications.

A key feature of SANs is zoning. This is the division of a SAN into subnets that provide different levels of connectivity between specific hosts and devices on the network. In effect, routing tables are used to control access of hosts to devices. This gives IT managers the flexibility to support the needs of different groups and technologies without compromising data security. Zoning can be performed by cooperative consent of the hosts or can be enforced at the switch level. In the former case, hosts are responsible for communicating with the switch to determine if they have the right to access a device.

There are several ways to enforce zoning. With hard zoning, which delivers the highest level of security, IT managers program zone assignments into the flash memory of the hub. This ensures that there can be absolutely no data traffic between zones.

Virtual zoning provides additional flexibility because it is set at the individual port level. Individual ports can be members of more than one virtual zone, so groups can have access to more than one set of data on the SAN.

Broadcast zoning can be used to restrict the scope of broadcasts. For example, IP ARP (Address Resolution Protocol) broadcasts can be kept from SCSI ports on the switch. These IP broadcasts can otherwise cause storage devices to crash.
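The access rule behind port-level zoning can be sketched in a few lines: two ports may exchange traffic only if they share at least one zone, and a port may belong to several zones. The class, zone names, and port names below are hypothetical and do not correspond to any vendor's switch interface; this is a toy model of the idea, not an implementation of a real zoning service.

```python
from collections import defaultdict


class ZoneTable:
    """Toy model of switch-enforced zoning (illustrative only)."""

    def __init__(self) -> None:
        # Maps each port name to the set of zones it belongs to.
        self._zones_by_port = defaultdict(set)

    def add_to_zone(self, zone: str, *ports: str) -> None:
        """Add one or more ports to a zone; a port may join several zones."""
        for port in ports:
            self._zones_by_port[port].add(zone)

    def may_communicate(self, port_a: str, port_b: str) -> bool:
        """Allow traffic only when the two ports share at least one zone."""
        zones_a = self._zones_by_port.get(port_a, set())
        zones_b = self._zones_by_port.get(port_b, set())
        return bool(zones_a & zones_b)


if __name__ == "__main__":
    table = ZoneTable()
    table.add_to_zone("finance", "host1", "raid_a")
    table.add_to_zone("engineering", "host2", "raid_b", "tape1")
    table.add_to_zone("finance", "tape1")  # tape1 now sits in two zones

    print(table.may_communicate("host1", "raid_a"))
    print(table.may_communicate("host1", "raid_b"))
    print(table.may_communicate("host1", "tape1"))
```

In this model a port that belongs to no zone can reach nothing, which mirrors the default-deny behavior that strict enforcement implies.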

Technology Mix

As the SAN concept has evolved, it has moved beyond association with any single technology. In fact, just as LANs and WANs use a diverse mix of technologies, so can SANs. This mix can include FDDI, ATM and IBM’s Serial Storage Architecture (SSA), as well as Fibre Channel. SAN architectures also allow for the use of a number of underlying protocols, including TCP/IP and all the variants of SCSI.

Instead of dedicating a specific kind of storage to one or more servers, a SAN allows different kinds of storage—mainframe disk, tape, and RAID—to be shared by different kinds of servers, such as Windows NT, UNIX, and OS/390. With this shared capacity, organizations can acquire, deploy and use storage devices more efficiently and cost-effectively.

SANs also let users with heterogeneous storage platforms use all available storage resources. This means that within a SAN users can back up or archive data from different servers to the same storage system. They can also allow stored information to be accessed by all servers, create and store a mirror image of data as it is created, and share data between different environments.

With a SAN, there is no need for a physically separate network to handle storage and archival traffic. This is because the SAN can function as a virtual subnet that operates on a shared network infrastructure. For this to work, however, different priorities or classes of service must be established. Fortunately, both Fibre Channel and ATM provide the means to set different classes of service.

Although early implementations of SANs have been local- or campus-based, there is no technological reason why they cannot be extended much farther over the WAN. As WAN technologies such as SONET and ATM mature, and especially as class-of-service capabilities improve, the SAN can be extended over a much wider area, perhaps globally in the future.

SANs also promise easier and less expensive network administration. Today, administrative functions are labor-intensive and time-consuming, and IT organizations typically have to replicate management tools across multiple server environments. With a SAN, only one set of tools is needed, which eliminates the need for replication and associated costs.

SAN Components

Several components are required to implement a SAN. A Fibre Channel adapter is installed in each server. Each adapter is connected via the server’s PCI bus to the server’s operating system and applications. Because Fibre Channel’s transport-level protocol wraps easily around SCSI frames, the adapter appears to be a SCSI device. The adapters are connected to a single Fibre Channel hub over fiber-optic cable or copper coaxial cable. Category 5 cable, the high-end twisted pair rated for Fast Ethernet and 155-Mbps ATM, can also be used.

A LAN-free backup architecture may include some type of automated tape library that attaches to the hub via Fibre Channel. This machine typically includes a mechanism capable of feeding data to multiple tape drives and may be bundled with a front-end Fibre Channel controller. Existing SCSI-based tape drives can also be used through the addition of a Fibre Channel-to-SCSI bridge.

Storage management software running in the servers performs contention management by communicating with other servers via a control protocol to synchronize access to the tape library. The control protocol maintains a master index and uses data maps and time stamps to establish server-to-hub connections. Currently, control protocols are specific to the software vendors. Eventually, the storage industry will likely standardize on one of the several protocols now in proposal status before the Storage Network Industry Association.

From the hub, a standard Fibre Channel protocol, Fibre Channel-Arbitrated Loop (FC-AL), functions similarly to token ring to ensure collision-free data transfers to the storage devices. The hub also contains an embedded SNMP agent for reporting to network management software.

Role of Hubs

Much like Ethernet hubs in LAN environments, Fibre Channel hubs provide fault tolerance in SAN environments. On a Fibre Channel-Arbitrated Loop, each node acts as a repeater for all other nodes on the loop, so if one node goes down, the entire loop goes down. For this reason, hubs are an essential source of fault isolation in Fibre Channel SANs. The hub’s port bypass functionality automatically bypasses a problem port and avoids most faults. Stations can be powered off or added to the loop without serious loop effects. Storage management software is used to mediate contention and synchronize data—activities necessary for moving backup data from multiple servers to multiple storage devices. Hubs also support the popular physical star cabling topology for more convenient wiring and cable management.
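The effect of port bypass can be illustrated with a small simulation. The sketch below models a one-way loop in which every node between sender and receiver must repeat the frame, and compares delivery with and without a hub that bypasses failed ports. The function name and the list-of-booleans representation are assumptions made for illustration, and the model leaves out arbitration and loop initialization entirely.

```python
def loop_delivers(nodes_up, src, dst, hub_bypass):
    """Return True if a frame from src reaches dst going one way around the loop.

    nodes_up   -- list of booleans, one per loop position (True = node is up)
    src, dst   -- loop positions of the sending and receiving nodes
    hub_bypass -- True if a hub routes around ports whose node is down
    """
    n = len(nodes_up)
    if not (nodes_up[src] and nodes_up[dst]):
        return False  # both endpoints must be operational

    pos = (src + 1) % n
    while pos != dst:
        # Each intermediate node acts as a repeater; without a hub,
        # a single dead repeater breaks the loop.
        if not nodes_up[pos] and not hub_bypass:
            return False
        pos = (pos + 1) % n
    return True


if __name__ == "__main__":
    nodes = [True, False, True, True]  # the node at position 1 has failed
    print(loop_delivers(nodes, 0, 2, hub_bypass=False))
    print(loop_delivers(nodes, 0, 2, hub_bypass=True))
```

With the failed node sitting between positions 0 and 2, delivery fails on the bare loop and succeeds once the hub bypasses the dead port.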

To achieve full redundancy in a Fibre Channel SAN, two fully independent, redundant loops must be cabled. This scheme provides two independent paths for data with fully redundant hardware. Most disk drives and disk arrays targeted for high-availability environments have dual ports specifically for the purpose. Wiring each loop through a hub provides higher availability port bypass functionality to each of the loops.

Many organizations need multiple levels of hubs. Hubs can be cascaded up to the Fibre Channel-Arbitrated Loop limit of 126 nodes (127 nodes with an FL or switch port). Normally, the distance limitation between Fibre Channel hubs is three kilometers. However, Hewlett-Packard offers technology that extends the distance between hubs to 10 kilometers, allowing organizations to link servers situated on either side of a campus, or even spanning a metropolitan area.

The tools needed to manage a Fibre Channel fabric are available through the familiar SNMP (Simple Network Management Protocol) interface. The draft proposal for a MIB (Management Information Base) has been circulated at the Internet Engineering Task Force (IETF). New vendor-specific MIBs will emerge as products are developed with new management features. Hubs and other central networking hardware provide a natural point for network management.

Among the companies currently offering SAN management solutions is Legato Systems, whose Enterprise Storage Management Architecture (ESMA) enables customers to take advantage of the benefits offered by SAN topologies for backup and restore. One element of ESMA is the Legato NetWorker Storage Node, which enables large, business-critical servers to be backed up directly to tape, under the control of a central Legato NetWorker server. This results in the ability to off-load backup traffic from the local-area network and move it to the storage-area network, taking advantage of the bandwidth offered by Fibre Channel.

Another ESMA component is Legato SmartMedia. The any-to-any connectivity of SANs enables tape libraries to be connected to multiple servers, and Legato SmartMedia manages the sharing of media and devices between them. Legato SmartMedia enables drive and library sharing, managing application requests for media from a central location. This centralized management of distributed libraries results in increased manageability and reduced operating costs.

Last Word

The move to Storage-Area Networks over the next few years will provide a new level of scalability to system administrators and allow a much greater degree of flexibility than the traditional attached storage paradigm. Fibre Channel technology provides the basic foundation of this shift, allowing enterprises to start implementing a solid foundation today to support the environments of the future. However, SCSI has not yet exhausted its potential as a storage connectivity option. SCSI is installed in more than 90 percent of networks, and the latest SCSI variant, Ultra160/m SCSI, may even pose a challenge to Fibre Channel. In addition to supporting data transfers at up to 160 MBps, Ultra160/m SCSI offers intelligent data management. Products incorporating Ultra160/m intelligently test and manage the storage network so that the maximum reliable data transfer rate is used. If the cabling, backplanes, and terminators can support the desired speed, the data flows at full throttle. If not, the hardware will be smart enough to gracefully negotiate a lower transfer rate automatically. The result will be more system autonomy and less IT manager involvement.
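The fallback behavior described above amounts to picking the highest rate that every component in the path can sustain. The sketch below shows that selection logic only; it is not the actual Ultra160/m negotiation procedure. The fallback steps reuse the peak rates this entry quotes for Ultra160/m, Ultra2, and traditional SCSI, and the component names and limits are hypothetical.

```python
# Candidate rates, highest first, taken from the peak figures quoted in the text.
# Treating them as the available fallback steps is an assumption.
CANDIDATE_RATES = (160, 80, 40)


def negotiate_rate(component_limits):
    """Return the highest candidate rate that every component supports.

    component_limits -- dict mapping a component name to the maximum
                        rate it can reliably carry (hypothetical values)
    """
    ceiling = min(component_limits.values())  # the weakest component sets the limit
    for rate in CANDIDATE_RATES:
        if rate <= ceiling:
            return rate
    raise ValueError("no candidate rate is supported by every component")


if __name__ == "__main__":
    healthy = {"cable": 160, "backplane": 160, "terminator": 160}
    degraded = {"cable": 160, "backplane": 80, "terminator": 160}
    print(negotiate_rate(healthy))
    print(negotiate_rate(degraded))
```

A single slower component, here the backplane, is enough to pull the whole path down to the next candidate rate.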