Performance of web services

So far we have described web services as complex because of the inherently intricate process of marshalling and unmarshalling and the network latency involved in moving SOAP packets. But how do web services actually perform? Since an isolated test is not very indicative, we will compare the performance of a web service with that of the equivalent operation executed by an EJB.

The web service will be in charge of executing a task on a legacy system and returning a collection of 500 objects. We will deploy our project on JBoss 5.1.0, which uses the JBossWS native stack as its default JAX-WS implementation.

You have several options available to benchmark your web services. For example, you could create a simple web service client in the form of a JSP/Servlet and use it to load-test your web application. Alternatively, you can use the Web Service (SOAP) Request sampler, which is built into JMeter's collection of samplers.

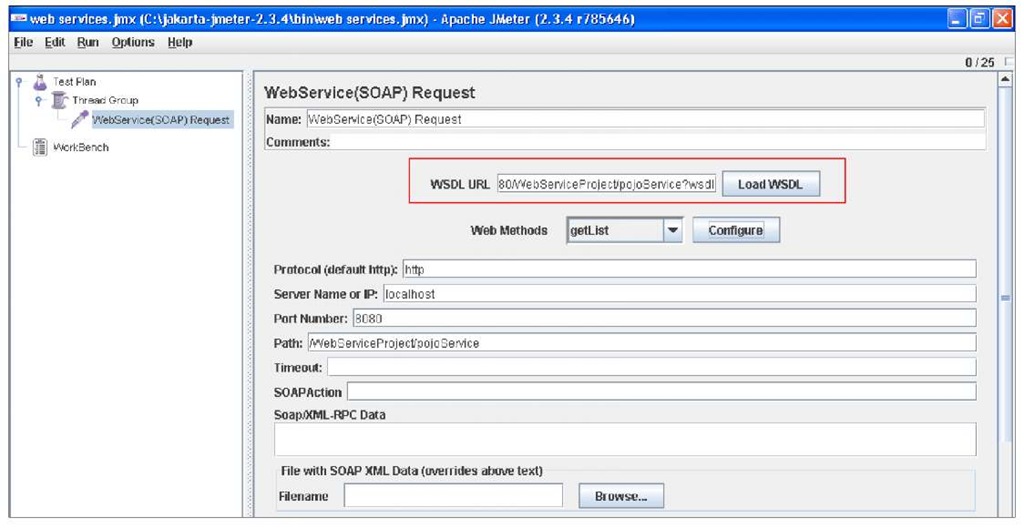

Just right-click on your Thread Group and choose Add | Sampler | Web Service (SOAP) Request.

You can either configure all the web service settings manually or simply let JMeter configure them for you by loading the WSDL (click the Load WSDL button). Then pick the method you want to test and select Configure, which automatically fills in your web service properties.

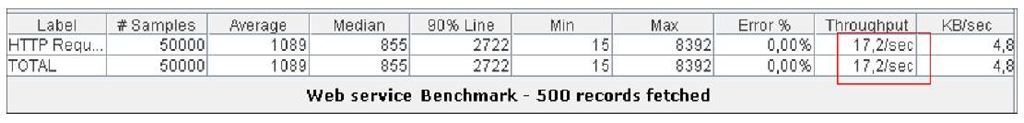

Our benchmark will collect 50000 samples from our web service. Here’s the resulting JMeter aggregate report:

The web service requires an average of about 1 second to return, exhibiting a throughput of 17/sec.

Attentive readers will have noticed another peculiarity in this benchmark: there is a huge difference between the Min and Max values. This happens because JBossWS behaves differently on the first invocation of each service than on the following ones, especially when dealing with large WSDL contracts.

During the first invocation of the service, a lot of data is cached internally and reused by the following invocations. While this improves the performance of subsequent calls, you might still need to limit the maximum response time. If you set the org.jboss.ws.eagerInitializeJAXBContextCache system property to true, both on the server side (in the JBoss start script) and on the client side (a convenient constant is available in org.jboss.ws.Constants), JBossWS will try to eagerly create and cache the JAXB contexts before the first invocation is handled.
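On the client side, the property has to be in place before the first service or port is created. The following is a minimal sketch of that step; the class name `EagerJaxbCacheSetup` is hypothetical, and the property name is written as a plain string so that the snippet does not depend on the JBossWS libraries.

```java
// Hypothetical client bootstrap helper; only the property name comes from JBossWS.
final class EagerJaxbCacheSetup {

    // System property read by the JBossWS native stack.
    static final String EAGER_JAXB_CACHE = "org.jboss.ws.eagerInitializeJAXBContextCache";

    // Call once at client start-up, before any web service proxy is created.
    static void enable() {
        System.setProperty(EAGER_JAXB_CACHE, "true");
    }

    public static void main(String[] args) {
        enable();
        System.out.println(EAGER_JAXB_CACHE + "=" + System.getProperty(EAGER_JAXB_CACHE));
    }
}
```

On the server side, the same effect is normally obtained by adding `-Dorg.jboss.ws.eagerInitializeJAXBContextCache=true` to the JVM options used by the start script.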

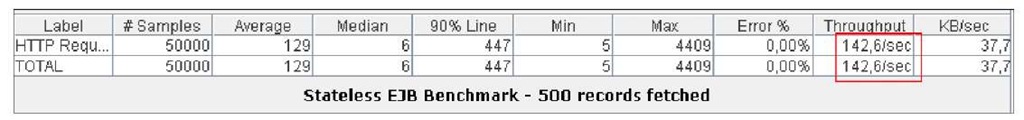

The same test will now be executed using an EJB layer, which performs exactly the same job. The result is quite different:

As you can see, the EJB was over eight times faster than the web service for the most relevant metrics. This benchmark is intentionally misusing web services just to warn the reader against the potential risk of a flawed interface design, like returning a huge set of data from a collection.

Web services are no doubt a key factor in the integration of heterogeneous systems and have reached a reasonable level of maturity; however, they should not be proposed as the right solution for everything just because they use a standard protocol for exchanging data.

Even if web services fit perfectly into your project’s picture, you should be aware of the most important factors that influence their performance. The next section gathers some useful elements, which should serve as a wake-up call in the early phases of project design.

Elements influencing the performance of web services

The most important factor in determining the performance of web services is the set of characteristics of the XML documents that are sent and returned by web services. We can distinguish three main elements:

• Size: The length of data elements in the XML document.

• Complexity: The number of elements that the XML document contains.

• Level of nesting: Refers to objects or collections of objects that are defined within other objects in the XML document.

On the basis of these assumptions, we can elaborate the following performance guidelines:

You should design coarse-grained web services, that is, services which perform a lot of work on the server and return just a response code or a minimal set of attributes.

The number of parameters passed to the web service should likewise be trimmed to the essentials in order to reduce the size of outgoing SOAP messages. Nevertheless, XML size is not the only factor you need to consider; network latency is a key element too. If your web services tend to be chatty, with lots of little round trips and a subtle statefulness between individual communications, they will be slower than a design that sends a single larger SOAP message.

Developers often fail to realize that the web service API call model isn’t well suited to building communicating applications where caller and callee are separated by a medium (networks!) with variable and unconstrained performance characteristics/latency.

For this reason, don’t make the mistake of fragmenting your web service invocation into several chunks. In the end the size of the XML will stay the same, but you will pay for it with additional network latency.
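The trade-off can be illustrated with a deliberately simple cost model: the transfer time depends only on the total payload, while every extra call adds one more round trip. The sketch below assumes sequential calls and a constant latency and bandwidth; the class name `RoundTripCost` and all the figures used in `main` are hypothetical values chosen for illustration, not measurements from the benchmark.

```java
// Simplified cost model: total time = payload transfer time + one latency per call.
final class RoundTripCost {

    // Estimated time in milliseconds to move totalBytes using `calls` sequential requests.
    static double totalMillis(int calls, long totalBytes, double latencyMs, double bytesPerMs) {
        if (calls < 1) {
            throw new IllegalArgumentException("calls must be >= 1");
        }
        double transfer = totalBytes / bytesPerMs; // same payload, however it is split
        double latency = calls * latencyMs;        // one round trip paid per call
        return transfer + latency;
    }

    public static void main(String[] args) {
        long payload = 500_000;     // assumed total XML size in bytes
        double latencyMs = 40;      // assumed round-trip latency
        double bytesPerMs = 1_000;  // assumed bandwidth, roughly 1 MB/s

        System.out.println("1 call:   " + totalMillis(1, payload, latencyMs, bytesPerMs) + " ms");
        System.out.println("10 calls: " + totalMillis(10, payload, latencyMs, bytesPerMs) + " ms");
    }
}
```

Under these assumptions, splitting the same payload across ten calls only adds latency; the transfer term does not shrink.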

Another important factor that can improve the performance of your web services is caching. You could consider caching responses, at the price of additional memory requirements and potential stale-data issues. Caching should also be applied to web service documents such as the Web Services Description Language (WSDL) file, which contains the specification of the web service contract. It is advisable to refer to a local copy of your WSDL when you roll your service out to production, as in the following example:
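A minimal sketch of this idea follows. It assumes that a copy of the WSDL is packaged with the client on the classpath; the helper class `WsdlLocator`, the resource path, and the endpoint URL are hypothetical. The helper prefers the local copy and falls back to the remote address only when no copy is bundled.

```java
import java.net.MalformedURLException;
import java.net.URI;
import java.net.URL;

// Hypothetical helper that prefers a WSDL copy bundled with the client.
final class WsdlLocator {

    // Returns the classpath copy of the WSDL if present, otherwise the remote URL.
    static URL resolve(String classpathResource, String remoteUrl) {
        URL local = WsdlLocator.class.getClassLoader().getResource(classpathResource);
        if (local != null) {
            return local;
        }
        try {
            return URI.create(remoteUrl).toURL();
        } catch (MalformedURLException e) {
            throw new IllegalArgumentException("Invalid WSDL URL: " + remoteUrl, e);
        }
    }

    public static void main(String[] args) {
        // Both values are placeholders for illustration.
        URL wsdl = resolve("META-INF/wsdl/PersonService.wsdl",
                           "http://localhost:8080/PersonService?wsdl");
        // The resulting URL is what a JAX-WS client would pass to
        // javax.xml.ws.Service.create(wsdl, serviceName).
        System.out.println(wsdl);
    }
}
```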

At the same time, you should consider caching the instance that contains the web service port. A web service port is an abstract set of operations supported by one or more endpoints. Its name attribute provides a unique identifier among all port types defined within the enclosing WSDL document.

In short, a port contains an abstract view of the web service, but acquiring a copy of it is an expensive operation, which you should avoid repeating every time you need to access your web service.

The potential risk of this approach is that you might introduce into your client code objects (the proxy port) that are not thread safe, so you should synchronize access to them or use a pool of instances instead. An exception to this rule is the Apache CXF implementation, which documents the use cases where the proxy port can be safely cached in the project FAQs: http://cxf.apache.org/faq.html.
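One simple variation on the pool idea is to keep one port instance per thread, so that the expensive lookup happens once per thread and no instance is ever shared. The sketch below is generic and self-contained; `PortCache` is a hypothetical name, and the supplier stands in for whatever call creates the real proxy port in your client.

```java
import java.util.function.Supplier;

// Hypothetical per-thread cache for an expensive, non-thread-safe proxy port.
final class PortCache<T> {

    private final ThreadLocal<T> perThread;

    // portFactory performs the expensive lookup, for example service.getPort(...).
    PortCache(Supplier<T> portFactory) {
        this.perThread = ThreadLocal.withInitial(portFactory);
    }

    // Returns the calling thread's own instance, creating it on first use.
    T get() {
        return perThread.get();
    }

    public static void main(String[] args) {
        // A plain Object stands in for the real port type.
        PortCache<Object> cache = new PortCache<>(Object::new);
        System.out.println(cache.get() == cache.get()); // same thread, same instance
    }
}
```

In an application server with pooled threads, the cached instances live as long as the threads do, so this variant trades some memory for the absence of locking.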

Reducing the size of SOAP messages

Reducing the size of the XML messages that are sent across your services is one of the most relevant tuning points. However, there are some scenarios where you need to receive lots of data from your services; as a matter of fact, the Java EE 5 specification introduced the javax.jws.WebService annotation, which makes it quite tempting to expose your POJOs as web services.

The other side of the coin is that many web services will grow up and prosper with characteristics inherited from POJOs like, for example:

If you cannot afford the price of rewriting your business implementations from scratch, then you need to reduce at least the cost of this expensive fetch.

A simple but effective strategy is to override the default binding rules for Java-to-XML Schema mapping using JAXB annotations. Consider the following class Person, which has the following fields:

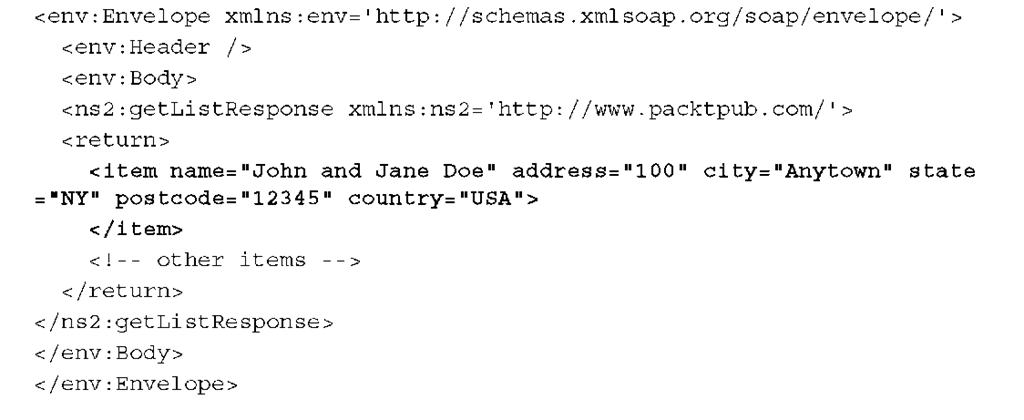

When you return a sample instance of this class from a web service, you will move this 460-byte SOAP message across the network:

As you can see, lots of characters are wasted in XML elements which could conveniently be replaced by attributes, thus saving a good quantity of bytes:

The corresponding generated XML is 380 bytes, about 17 percent smaller than the default XML produced by JAXB:

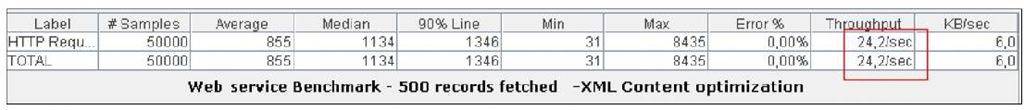
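To see where the saving comes from, compare the markup overhead of the two forms: an element repeats the field name in its opening and closing tags, while an attribute names it once. The sketch below builds both forms by hand in plain Java rather than through JAXB, and the field names and values in `main` are hypothetical, not the ones of the Person class above. In JAXB, the attribute form is what you get by annotating a field with `@XmlAttribute`.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

// Hand-built comparison of element-style and attribute-style XML for the same data.
// Values are assumed not to need XML escaping.
final class XmlSizeComparison {

    // <root><name>value</name>...</root>
    static String asElements(String root, Map<String, String> fields) {
        StringBuilder sb = new StringBuilder("<" + root + ">");
        fields.forEach((k, v) ->
            sb.append('<').append(k).append('>').append(v).append("</").append(k).append('>'));
        return sb.append("</").append(root).append('>').toString();
    }

    // <root name="value" .../>
    static String asAttributes(String root, Map<String, String> fields) {
        StringBuilder sb = new StringBuilder("<" + root);
        fields.forEach((k, v) ->
            sb.append(' ').append(k).append("=\"").append(v).append('"'));
        return sb.append("/>").toString();
    }

    static int utf8Length(String s) {
        return s.getBytes(StandardCharsets.UTF_8).length;
    }

    public static void main(String[] args) {
        Map<String, String> fields = new LinkedHashMap<>();
        fields.put("name", "John");      // hypothetical sample data
        fields.put("surname", "Smith");

        String elements = asElements("person", fields);
        String attributes = asAttributes("person", fields);
        System.out.println(elements + " -> " + utf8Length(elements) + " bytes");
        System.out.println(attributes + " -> " + utf8Length(attributes) + " bytes");
    }
}
```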

If we run our initial benchmark again, this time using custom JAXB annotations, the reduced size of the SOAP message is reflected in a higher throughput:

![tmp181-109_thumb[2] tmp181-109_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp181109_thumb2_thumb.jpg)

![tmp181-110_thumb[2] tmp181-110_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp181110_thumb2_thumb.jpg)

![tmp181-111_thumb[2] tmp181-111_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp181111_thumb2_thumb.png)

![tmp181-112_thumb[2] tmp181-112_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp181112_thumb2_thumb.png)

![tmp181-113[4] tmp181-113[4]](http://what-when-how.com/wp-content/uploads/2011/09/tmp1811134_thumb.png)

![tmp181-114[4] tmp181-114[4]](http://what-when-how.com/wp-content/uploads/2011/09/tmp1811144_thumb.png)

![tmp181-115_thumb[2] tmp181-115_thumb[2]](http://what-when-how.com/wp-content/uploads/2011/09/tmp181115_thumb2_thumb.png)