In the last topic, we discussed how the three basic components of a network (client computer, server computer, and circuit) work together. In this section, we will get a bit more specific about how the client computer and the server computer can work together to provide application software to the users. An application architecture is the way in which the functions of the application layer software are spread among the clients and servers in the network.

The work done by any application program can be divided into four general functions. The first is data storage. Most application programs require data to be stored and retrieved, whether it is a small file such as a memo produced by a word processor or a large database such as an organization’s accounting records. The second function is data access logic, the processing required to access data, which often means database queries in SQL (structured query language). The third function is the application logic (sometimes called business logic), which also can be simple or complex, depending on the application. The fourth function is the presentation logic, the presentation of information to the user and the acceptance of the user’s commands. These four functions, data storage, data access logic, application logic, and presentation logic, are the basic building blocks of any application.
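To make the four functions concrete, here is a minimal Python sketch that keeps each one in its own function. The table name, column names, and sample employees are assumptions made for illustration, and an in-memory SQLite database stands in for whatever storage a real application would use.

```python
import sqlite3

# Data storage: an in-memory SQLite database stands in for the stored data.
def create_storage():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE employees (name TEXT, has_life_insurance INTEGER)")
    conn.executemany(
        "INSERT INTO employees VALUES (?, ?)",
        [("Ana", 1), ("Ben", 0), ("Chen", 1)],  # hypothetical sample rows
    )
    return conn

# Data access logic: the SQL query that retrieves the data.
def fetch_insured(conn):
    rows = conn.execute(
        "SELECT name FROM employees WHERE has_life_insurance = 1 ORDER BY name"
    )
    return [name for (name,) in rows]

# Application logic: the rule applied to the data (here, a simple summary).
def summarize(names):
    return {"count": len(names), "names": names}

# Presentation logic: format the result for the user.
def render(summary):
    return f"{summary['count']} insured: " + ", ".join(summary["names"])

if __name__ == "__main__":
    print(render(summarize(fetch_insured(create_storage()))))
```

Because each function touches only its own concern, any of them could in principle be moved to a different computer, which is exactly the choice an application architecture makes.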

There are many ways in which these four functions can be allocated between the client computers and the servers in a network. There are four fundamental application architectures in use today. In host-based architectures, the server (or host computer) performs virtually all of the work. In client-based architectures, the client computers perform most of the work. In client-server architectures, the work is shared between the servers and clients. In peer-to-peer architectures, computers are both clients and servers and thus share the work. The client-server architecture is the dominant application architecture.

Clients and Servers

TECHNICAL FOCUS

There are many different types of clients and servers that can be part of a network, and the distinctions between them have become a bit more complex over time. Generally speaking, there are four types of computers that are commonly used as servers:

• A mainframe is a very large general-purpose computer (usually costing millions of dollars) that is capable of performing very many simultaneous functions, supporting very many simultaneous users, and storing huge amounts of data.

• A microcomputer is the type of computer you use. Microcomputers used as servers can range from a small microcomputer, similar to a desktop one you might use, to one costing $20,000 or more.

• A cluster is a group of computers (often microcomputers) linked together so that they act as one computer. Requests arrive at the cluster (e.g., Web requests) and are distributed among the computers so that no one computer is overloaded. Each computer is separate, so that if one fails, the cluster simply bypasses it. Clusters are more complex than single servers because work must be quickly coordinated and shared among the individual computers. Clusters are very scalable because one can always add one more computer to the cluster.

• A virtual server is one computer (often a microcomputer) that acts as several servers. Using special software, several operating systems are installed on the same physical computer so that one physical computer appears as several different servers to the network. These virtual servers can perform the same or separate functions (e.g., a printer server, Web server, file server). This improves efficiency (when each server is not fully used, there is no need to buy separate physical computers) and may improve effectiveness (if one virtual server crashes, it does not crash the other servers on the same computer).
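The way a cluster distributes requests and bypasses a failed computer can be sketched as follows. This is only an illustration: the `Cluster` class, its method names, and the round-robin policy are assumptions, and real clusters typically use more sophisticated load-balancing rules.

```python
from itertools import cycle

class Cluster:
    """Hands each request to the next healthy node in round-robin order."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.healthy = set(self.nodes)
        self._order = cycle(list(self.nodes))

    def mark_failed(self, node):
        # A failed node stays in the rotation but is skipped by dispatch().
        self.healthy.discard(node)

    def add_node(self, node):
        # Scaling out: rebuild the rotation so it includes the extra node.
        self.nodes.append(node)
        self.healthy.add(node)
        self._order = cycle(list(self.nodes))

    def dispatch(self, request):
        if not self.healthy:
            raise RuntimeError("no healthy nodes")
        # Skip over failed nodes; at most len(nodes) steps are needed.
        for _ in range(len(self.nodes)):
            node = next(self._order)
            if node in self.healthy:
                return node, request
        raise RuntimeError("no healthy nodes")

cluster = Cluster(["a", "b", "c"])
first_round = [cluster.dispatch(i)[0] for i in range(3)]
cluster.mark_failed("b")
second_round = [cluster.dispatch(i)[0] for i in range(3)]
```

After node `b` is marked as failed, requests continue to flow to `a` and `c` without any change on the client side.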

There are five commonly used types of clients:

• A microcomputer is the most common type of client today. This includes desktop and portable computers, as well as Tablet PCs that enable the user to write with a pen-like stylus instead of typing on a keyboard.

• A terminal is a device with a monitor and keyboard but no central processing unit (CPU). Dumb terminals, so named because they do not participate in the processing of the data they display, have the bare minimum required to operate as input and output devices (a TV screen and a keyboard). In most cases when a character is typed on a dumb terminal, it transmits the character through the circuit to the server for processing. Every keystroke is processed by the server, even simple activities such as the up arrow.

• A network computer is designed primarily to communicate using Internet-based standards (e.g., HTTP, Java) but has no hard disk. It has only limited functionality.

• A transaction terminal is designed to support specific business transactions, such as the automated teller machines (ATM) used by banks. Other examples of transaction terminals are point-of-sale terminals in a supermarket.

• A handheld computer, Personal Digital Assistant (PDA), or mobile phone can also be used as a network client.

Host-Based Architectures

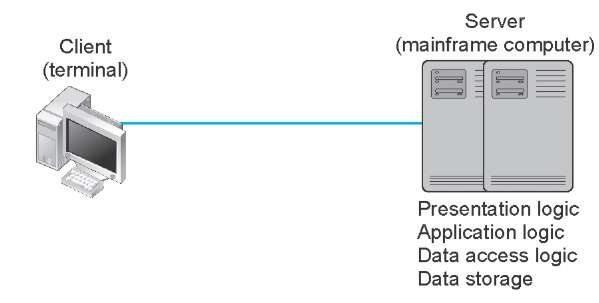

The very first data communications networks developed in the 1960s were host-based, with the server (usually a large mainframe computer) performing all four functions. The clients (usually terminals) enabled users to send and receive messages to and from the host computer. The clients merely captured keystrokes, sent them to the server for processing, and accepted instructions from the server on what to display (Figure 2.1).

This very simple architecture often works very well. Application software is developed and stored on the one server along with all data. If you’ve ever used a terminal (or a microcomputer with Telnet software), you’ve used a host-based application. There is one point of control, because all messages flow through the one central server. In theory, there are economies of scale, because all computer resources are centralized (but more on cost later).

There are two fundamental problems with host-based networks. First, the server must process all messages. As the demands for more and more network applications grow, many servers become overloaded and unable to quickly process all the users’ demands. Prioritizing users’ access becomes difficult. Response time becomes slower, and network managers are required to spend increasingly more money to upgrade the server. Second, upgrades to the mainframes that usually are the servers in this architecture are "lumpy." That is, upgrades come in large increments and are expensive (e.g., $500,000); it is difficult to upgrade "a little."

Client-Based Architectures

In the late 1980s, there was an explosion in the use of microcomputers and microcomputer-based LANs. Today, more than 90 percent of most organizations’ total computer processing power resides on microcomputer-based LANs, not in centralized mainframe computers. Part of this expansion was fueled by a number of low-cost, highly popular applications such as word processors, spreadsheets, and presentation graphics programs. It was also fueled in part by managers’ frustrations with application software on host mainframe computers. Most mainframe software is not as easy to use as microcomputer software, is far more expensive, and can take years to develop. In the late 1980s, many large organizations had application development backlogs of two to three years; that is, getting any new mainframe application program written would take years. New York City, for example, had a six-year backlog. In contrast, managers could buy microcomputer packages or develop microcomputer-based applications in a few months.

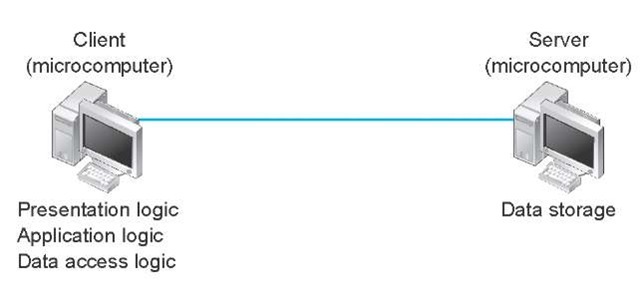

With client-based architectures, the clients are microcomputers on a LAN, and the server is usually another microcomputer on the same network. The application software on the client computers is responsible for the presentation logic, the application logic, and the data access logic; the server simply stores the data (Figure 2.2).

Figure 2.1 Host-based architecture

Figure 2.2 Client-based architecture

This simple architecture often works very well. If you’ve ever used a word processor and stored your document file on a server (or written a program in Visual Basic or C that runs on your computer but stores data on a server), you’ve used a client-based architecture.

The fundamental problem in client-based networks is that all data on the server must travel to the client for processing. For example, suppose the user wishes to display a list of all employees with company life insurance. All the data in the database (or all the indices) must travel from the server where the database is stored over the network circuit to the client, which then examines each record to see if it matches the data requested by the user. This can overload the network circuits because far more data is transmitted from the server to the client than the client actually needs.
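The life insurance example can be sketched in a few lines of Python. The record layout and function names are hypothetical, and a plain function call stands in for the network transfer; the point is only that the server returns everything and the client does the filtering.

```python
# Hypothetical records held on the file server.
SERVER_FILE = [
    {"name": "Ana", "life_insurance": True},
    {"name": "Ben", "life_insurance": False},
    {"name": "Chen", "life_insurance": True},
    {"name": "Dee", "life_insurance": False},
]

def server_read_file():
    """The server only stores data, so it returns every record."""
    return list(SERVER_FILE)

def client_list_insured():
    """Client-side data access logic: fetch everything, then filter locally."""
    records = server_read_file()  # all records cross the network
    matches = [r["name"] for r in records if r["life_insurance"]]
    return matches, len(records)

names, transferred = client_list_insured()
print(names, f"({transferred} records transferred for {len(names)} matches)")
```

In this toy data set, four records travel to the client even though only two are needed; with a large database the gap grows accordingly.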

Client-Server Architectures

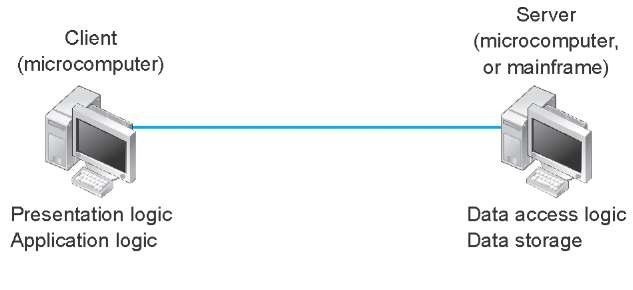

Most organizations today are moving to client-server architectures. Client-server architectures attempt to balance the processing between the client and the server by having both do some of the logic. In these networks, the client is responsible for the presentation logic, whereas the server is responsible for the data access logic and data storage. The application logic may either reside on the client, reside on the server, or be split between both.

Figure 2.3 shows the simplest case, with the presentation logic and application logic on the client and the data access logic and data storage on the server. In this case, the client software accepts user requests and performs the application logic that produces database requests that are transmitted to the server. The server software accepts the database requests, performs the data access logic, and transmits the results to the client. The client software accepts the results and presents them to the user. When you used a Web browser to get pages from a Web server, you used a client-server architecture. Likewise, if you’ve ever written a program that uses SQL to talk to a database on a server, you’ve used a client-server architecture.

Figure 2.3 Two-tier client-server architecture

For example, if the user requests a list of all employees with company life insurance, the client would accept the request, format it so that it could be understood by the server, and transmit it to the server. On receiving the request, the server searches the database for all requested records and then transmits only the matching records to the client, which would then present them to the user. The same would be true for database updates; the client accepts the request and sends it to the server. The server processes the update and responds (either accepting the update or explaining why not) to the client, which displays it to the user.
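A minimal sketch of this exchange, assuming a hypothetical request format (a dictionary with an `op` field) and an in-memory SQLite database in place of a real database server, might look like this. The server performs the data access logic and returns only the matching records, or an explanation when an update cannot be applied.

```python
import sqlite3

class DatabaseServer:
    """Server side: data storage plus data access logic."""

    def __init__(self):
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute(
            "CREATE TABLE employees (name TEXT PRIMARY KEY, life_insurance INTEGER)"
        )
        self.conn.executemany(
            "INSERT INTO employees VALUES (?, ?)",
            [("Ana", 1), ("Ben", 0), ("Chen", 1), ("Dee", 0)],  # sample rows
        )

    def handle(self, request):
        if request["op"] == "list_insured":
            rows = self.conn.execute(
                "SELECT name FROM employees WHERE life_insurance = 1 ORDER BY name"
            )
            # Only the matching records are sent back to the client.
            return {"ok": True, "rows": [name for (name,) in rows]}
        if request["op"] == "set_insurance":
            cur = self.conn.execute(
                "UPDATE employees SET life_insurance = ? WHERE name = ?",
                (int(request["value"]), request["name"]),
            )
            if cur.rowcount == 0:
                return {"ok": False, "error": "no such employee"}
            return {"ok": True}
        return {"ok": False, "error": "unknown operation"}

def client_show_insured(server):
    """Client side: format the request, then present the reply."""
    reply = server.handle({"op": "list_insured"})
    return "Insured: " + ", ".join(reply["rows"])

server = DatabaseServer()
print(client_show_insured(server))
```

In a real deployment the call to `handle` would be a message sent over the network, but the division of labor would be the same.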

One of the strengths of client-server networks is that they enable software and hardware from different vendors to be used together. But this is also one of their disadvantages, because it can be difficult to get software from different vendors to work together. One solution to this problem is middleware, software that sits between the application software on the client and the application software on the server. Middleware does two things. First, it provides a standard way of communicating that can translate between software from different vendors. Many middleware tools began as translation utilities that enabled messages sent from a specific client tool to be translated into a form understood by a specific server tool.

The second function of middleware is to manage the message transfer from clients to servers (and vice versa) so that clients need not know the specific server that contains the application’s data. The application software on the client sends all messages to the middleware, which forwards them to the correct server. The application software on the client is therefore protected from any changes in the physical network. If the network layout changes (e.g., a new server is added), only the middleware must be updated.
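The message-routing role of middleware can be illustrated with a small sketch. The `Middleware` class, the service name `payroll`, and the two server functions are all hypothetical; they do not correspond to any particular middleware product or standard.

```python
class Middleware:
    """Forwards client messages to whichever server currently hosts a service."""

    def __init__(self):
        self._routes = {}  # logical service name -> server handler

    def register(self, service, handler):
        self._routes[service] = handler

    def send(self, service, message):
        if service not in self._routes:
            raise KeyError(f"no server registered for {service!r}")
        return self._routes[service](message)

def old_server(message):
    return f"old-server handled {message}"

def new_server(message):
    return f"new-server handled {message}"

middleware = Middleware()
middleware.register("payroll", old_server)

def client_request(message):
    # The client names the service only; it never names a server.
    return middleware.send("payroll", message)

before = client_request("run report")
middleware.register("payroll", new_server)  # the network layout changes
after = client_request("run report")
```

Note that `client_request` is identical before and after the move; only the registration inside the middleware changed.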

There are literally dozens of standards for middleware, each of which is supported by different vendors and each of which provides different functions. Two of the most important standards are Distributed Computing Environment (DCE) and Common Object Request Broker Architecture (CORBA). Both of these standards cover virtually all aspects of the client-server architecture but are quite different. Any client or server software that conforms to one of these standards can communicate with any other software that conforms to the same standard. Another important standard is Open Database Connectivity (ODBC), which provides a standard for data access logic.

Two-Tier, Three-Tier, and n-Tier Architectures There are many ways in which the application logic can be partitioned between the client and the server. The example in Figure 2.3 is one of the most common. In this case, the server is responsible for the data and the client, the application and presentation. This is called a two-tier architecture, because it uses only two sets of computers, one set of clients and one set of servers.

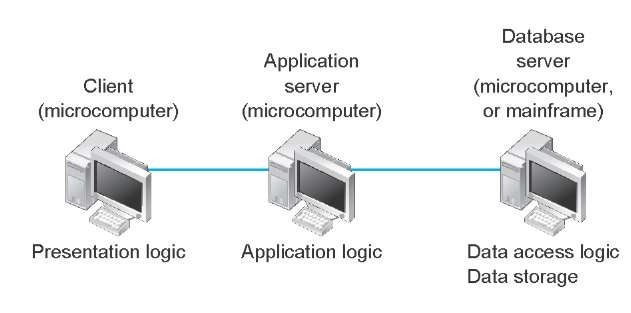

A three-tier architecture uses three sets of computers, as shown in Figure 2.4. In this case, the software on the client computer is responsible for presentation logic, an application server is responsible for the application logic, and a separate database server is responsible for the data access logic and data storage.
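The three-tier split can be sketched with one class per tier. The class names, the salary data, and the monthly-pay rule are assumptions chosen for illustration; in practice each class would run on a separate computer and the method calls would be network messages.

```python
class DatabaseServer:
    """Tier 3: data storage and data access logic."""

    def __init__(self):
        self._salaries = {"Ana": 52000, "Ben": 48000}  # hypothetical data

    def get_salary(self, name):
        return self._salaries.get(name)

class ApplicationServer:
    """Tier 2: application logic."""

    def __init__(self, db):
        self.db = db

    def monthly_pay(self, name):
        salary = self.db.get_salary(name)
        if salary is None:
            return None
        return round(salary / 12, 2)

class Client:
    """Tier 1: presentation logic."""

    def __init__(self, app):
        self.app = app

    def show_monthly_pay(self, name):
        pay = self.app.monthly_pay(name)
        if pay is None:
            return f"No record for {name}"
        return f"{name}: {pay:.2f} per month"

client = Client(ApplicationServer(DatabaseServer()))
print(client.show_monthly_pay("Ben"))
```

The client never talks to the database server directly; every request passes through the application server.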

A Monster Client-Server Architecture

MANAGEMENT FOCUS

Every spring, Monster.com, one of the largest job sites in the United States, with an average of more than 40 million visits per month, experiences a large increase in traffic. Aaron Braham, vice president of operations, attributes the spike to college students who increase their job search activities as they approach graduation.

Monster.com has 1,000 Web servers, e-mail servers, and database servers at its sites in Indianapolis and Maynard, Massachusetts. The main Web site has a set of load-balancing devices that forward Web requests to the different servers depending on how busy they are.

Braham says the major challenge is that 90 percent of the traffic is not simple requests for Web pages but rather search requests (e.g., what network jobs are available in New Mexico), which require more processing and access to the database servers. Monster.com has more than 1 million job postings and more than 20 million resumes on file, spread across its database servers. Several copies of each posting and resume are kept on several database servers to improve access speed and provide redundancy in case a server crashes, so just keeping the database servers in sync so that they contain correct data is a challenge.

An n-tier architecture uses more than three sets of computers. In this case, the client is responsible for presentation logic, a database server is responsible for the data access logic and data storage, and the application logic is spread across two or more different sets of servers. Figure 2.5 shows an example of an n-tier architecture of a groupware product called TCB-Works developed at the University of Georgia. TCB-Works has four major components. The first is the Web browser on the client computer that a user uses to access the system and enter commands (presentation logic). The second component is a Web server that responds to the user’s requests, either by providing HTML pages and graphics (application logic) or by sending the request to the third component, a set of 28 C programs that perform various functions such as adding comments or voting (application logic). The fourth component is a database server that stores all the data (data access logic and data storage). Each of these four components is separate, making it easy to spread the different components on different servers and to partition the application logic on two different servers.

Figure 2.4 Three-tier client-server architecture

Figure 2.5 The n-tier client-server architecture

The primary advantage of an n-tier client-server architecture compared with a two-tier architecture (or a three-tier compared with a two-tier) is that it separates out the processing that occurs to better balance the load on the different servers; it is more scalable. In Figure 2.5, we have three separate servers, which provides more power than if we had used a two-tier architecture with only one server. If we discover that the application server is too heavily loaded, we can simply replace it with a more powerful server, or even put in two application servers. Conversely, if we discover the database server is underused, we could put data from another application on it.

There are two primary disadvantages to an n-tier architecture compared with a two-tier architecture (or a three-tier with a two-tier). First, it puts a greater load on the network. If you compare Figures 2.3, 2.4, and 2.5, you will see that the n-tier model requires more communication among the servers; it generates more network traffic so you need a higher capacity network. Second, it is much more difficult to program and test software in n-tier architectures than in two-tier architectures because more devices have to communicate to complete a user’s transaction.

Thin Clients versus Thick Clients Another way of classifying client-server architectures is by examining how much of the application logic is placed on the client computer. A thin-client approach places little or no application logic on the client (e.g., Figure 2.5), whereas a thick-client (also called fat-client) approach places all or almost all of the application logic on the client (e.g., Figure 2.3). There is no direct relationship between thin and fat client and two-, three- and n-tier architectures. For example, Figure 2.6 shows a typical Web architecture: a two-tier architecture with a thin client. One of the biggest forces favoring thin clients is the Web.

Thin clients are much easier to manage. If an application changes, only the server with the application logic needs to be updated. With a thick client, the software on all of the clients would need to be updated. Conceptually, this is a simple task; one simply copies the new files to the hundreds of affected client computers. In practice, it can be a very difficult task.

Figure 2.6 The typical two-tier thin-client architecture of the Web

Thin-client architectures are the wave of the future. More and more application systems are being written to use a Web browser as the client software, with Java, JavaScript, or AJAX (containing some of the application logic) downloaded as needed. This application architecture is sometimes called the distributed computing model.

Peer-to-Peer Architectures

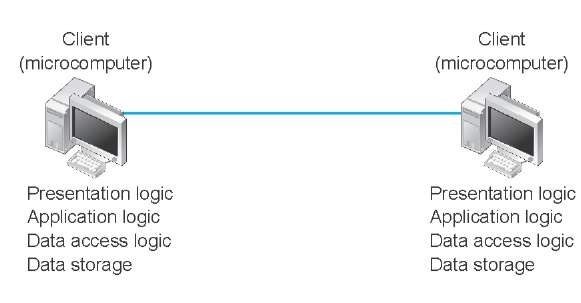

Peer-to-peer (P2P) architectures are very old, but their modern design became popular in the early 2000s with the rise of P2P file sharing applications (e.g., Napster). With a P2P architecture, all computers act as both a client and a server. Therefore, all computers perform all four functions: presentation logic, application logic, data access logic, and data storage (see Figure 2.7). With a P2P file sharing application, a user uses the presentation, application, and data access logic installed on his or her computer to access the data stored on another computer in the network. With a P2P application sharing network (e.g., grid computing such as seti.org), users can access the application logic on other computers in the network as well.

The advantage of P2P networks is that the data can be installed anywhere on the network. They spread the storage throughout the network, even globally, so they can be very resilient to the failure of any one computer. The challenge is finding the data. There must be some central server that enables you to find the data you need, so P2P architectures often are combined with a client-server architecture. Security is a major concern in most P2P networks, so P2P architectures are not commonly used in organizations, except for specialized computing needs (e.g., grid computing).
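The combination of a central lookup server with peers that hold the data can be sketched as follows. The `IndexServer` and `Peer` classes and their methods are hypothetical and do not model any specific P2P product; the `online` flag simply simulates a computer failing.

```python
class IndexServer:
    """Central directory: maps a file name to the peers that hold it."""

    def __init__(self):
        self._locations = {}

    def publish(self, filename, peer):
        self._locations.setdefault(filename, []).append(peer)

    def lookup(self, filename):
        return list(self._locations.get(filename, []))

class Peer:
    """Each peer acts as both a client (fetch) and a server (serve)."""

    def __init__(self, name, index):
        self.name = name
        self.index = index
        self.files = {}
        self.online = True

    def share(self, filename, data):
        self.files[filename] = data
        self.index.publish(filename, self)

    def serve(self, filename):
        # Acting as a server for another peer.
        return self.files[filename]

    def fetch(self, filename):
        # Acting as a client: ask the index, then download from any live peer.
        for peer in self.index.lookup(filename):
            if peer.online and peer is not self:
                return peer.serve(filename)
        return None

index = IndexServer()
a, b, c = Peer("a", index), Peer("b", index), Peer("c", index)
a.share("report.txt", b"quarterly data")
b.share("report.txt", b"quarterly data")
a.online = False                      # one computer fails
copy = c.fetch("report.txt")          # still served by peer b
```

Because two peers hold the file, the failure of one does not prevent the download, although the index server itself remains a single point that must stay available.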

Choosing Architectures

Each of the preceding architectures has certain costs and benefits, so how do you choose the "right" architecture? In many cases, the architecture is simply a given; the organization has a certain architecture, and one simply has to use it.

Figure 2.7 Peer-to-Peer architecture

In other cases, the organization is acquiring new equipment and writing new software and has the opportunity to develop a new architecture, at least in some part of the organization. There are at least three major sets of factors to consider (Figure 2.8). Because P2P architectures are seldom used in organizations, we do not consider them in this section.

Cost of Infrastructure One of the strongest forces driving companies toward client-server architectures is cost of infrastructure (the hardware, software, and networks that will support the application system). Simply put, microcomputers are more than 1,000 times cheaper than mainframes for the same amount of computing power. The microcomputers on our desks today have more processing power, memory, and hard disk space than a mainframe of the early 1990s, and the cost of the microcomputers is a fraction of the cost of the mainframe. Therefore, the cost of client-server architectures is lower than that of host-based architectures, which rely on mainframes. Client-server architectures also tend to be cheaper than client-based architectures because they place less of a load on networks and thus require less network capacity.

Cost of Development The cost of developing application systems is an important factor when considering the financial benefits of client-server architectures. Developing application software for client-server architectures can be complex. Developing application software for host-based architectures is usually cheaper. The cost differential may change as companies gain experience with client-server applications, as new client-server products are developed and refined, and as client-server standards mature. However, given the inherent complexity of client-server software and the need to coordinate the interactions of software on different computers, there is likely to remain a cost difference.

Even updating the network with a new version of the software is more complicated. In a host-based network, there is one place in which application software is stored; to update the software, you simply replace it there. With client-server networks, you must update all clients and all servers. For example, suppose you want to add a new server and move some existing applications from the old server to the new one. All application software on all fat clients that send messages to the application on the old server must now be changed to send to the new server. Although this is not conceptually difficult, it can be an administrative nightmare.

Scalability Scalability refers to the ability to increase or decrease the capacity of the computing infrastructure in response to changing capacity needs. The most scalable architecture is client-server computing because servers can be added to (or removed from) the architecture when processing needs change. For example, in a four-tier client-server architecture, one might have ten Web servers, four application servers, and three database servers. If the application servers begin to get overloaded, it is simple to add another two or three application servers.
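The arithmetic behind adding servers tier by tier can be sketched as below. The per-server capacities and load figures are purely hypothetical numbers chosen for illustration; they are not measurements and do not come from the example above.

```python
import math

def servers_needed(requests_per_second, capacity_per_server):
    """Smallest number of servers whose combined capacity covers the load."""
    return math.ceil(requests_per_second / capacity_per_server)

# Hypothetical capacities: requests per second that one server can handle.
capacity = {"web": 500, "application": 200, "database": 400}

def plan(load):
    """Servers required in each tier for a given load (requests per second)."""
    return {tier: servers_needed(load, cap) for tier, cap in capacity.items()}

current = plan(800)
after_growth = plan(1200)
print(current)
print(after_growth)
```

Under these assumed figures, growth in load is met by adding a server or two to each tier rather than by replacing one large machine.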

| | Host-Based | Client-Based | Client-Server |
| --- | --- | --- | --- |
| Cost of infrastructure | High | Medium | Low |
| Cost of development | Low | Medium | Medium |
| Scalability | Low | Medium | High |

Figure 2.8 Factors involved in choosing architectures

Also, the types of hardware that are used in client-server settings (e.g., minicomputers) typically can be upgraded at a pace that most closely matches the growth of the application. In contrast, host-based architectures rely primarily on mainframe hardware that needs to be scaled up in large, expensive increments, and client-based architectures have ceilings above which the application cannot grow because increases in use and data can result in increased network traffic to the extent that performance is unacceptable.