In the simplest form, a computer clock consists of an oscillator and counter/divider that deliver repetitive pulses at intervals in the range from 1 to 10 ms, called the tick (sometimes called the jiffy). Each pulse triggers a hardware interrupt that increments the system clock variable such that the rate of the system clock in seconds per second matches the rate of the real clock. This is true even if the tick does not exactly divide the second. The timer interrupt routine serves two functions: one as the source for various kernel and user housekeeping timers and the other to maintain the time of day. Housekeeping timers are based on the number of ticks and are not disciplined in time or frequency. The precision kernel disciplines the system clock in time and frequency to match the real clock by adding or subtracting a small increment to the system clock variable at each timer interrupt.

In modern computers, there are three sources that can be used to implement the system clock: the timer oscillator that drives the counter/divider, the real-time clock (RTC) that maintains the time when the power is off (or even on for that matter), and what in some architectures is called the processor cycle counter (PCC) and in others is called the timestamp counter (TSC). We use the latter acronym in the following discussion. Any of these sources can in principle be used as the clock source. However, the engineering issues with these sources are quite intricate and need to be explored in some detail.

Timer Oscillator

The typical system clock uses a timer interrupt rate in the 100- to 1,000-Hz range. It is derived from a crystal oscillator operating in the 1- to 10-MHz range. For reasons that may be obscure, the oscillator frequency is often some multiple or submultiple of 3.579545 MHz. The reason for this rather bizarre choice is that this is the frequency of the NTSC analog TV color burst signal, and crystals for this frequency are widely available and inexpensive. Now that digital TV is here, expect the new gold standard at 19.39 MHz or some submultiple as this is the bit rate for ATSC.

In some systems, the counter/divider is implemented in an application-specific integrated circuit (ASIC) producing timer frequencies of 100, 256, 1,000, and 1,024 Hz. The ubiquitous Intel 8253/8254 programmable interval timer (PIT) chip includes three 16-bit counters that count down from a preprogrammed value and produce a pulse when the counter reaches zero, then repeat the cycle endlessly. Operated at one-third the color burst frequency or 1.1931816 MHz and preloaded with the value 11932, the PIT produces 100 Hz to within 15 PPM. The resulting systematic error is probably dominated by the inherent crystal frequency error.
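The 15-PPM figure can be checked with a few lines of arithmetic. The following is a minimal sketch in C; the function names `pit_rate_hz` and `rate_error_ppm` are hypothetical and chosen only for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* NTSC color burst frequency in Hz, as quoted in the text. */
#define COLOR_BURST_HZ 3579545.0

/* Interrupt rate produced by a counter that is reloaded with `divisor`
 * and clocked at osc_hz (hypothetical helper, not a hardware API). */
double pit_rate_hz(double osc_hz, uint16_t divisor)
{
    assert(divisor != 0);
    return osc_hz / divisor;
}

/* Systematic rate error relative to the nominal rate, in PPM. */
double rate_error_ppm(double actual_hz, double nominal_hz)
{
    return (actual_hz - nominal_hz) / nominal_hz * 1e6;
}
```

Calling `rate_error_ppm(pit_rate_hz(COLOR_BURST_HZ / 3.0, 11932), 100.0)` gives an error of roughly -15 PPM, that is, the tick runs slightly slow relative to a nominal 100 Hz.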

Timestamp Counter

The TSC is a counter derived from the processor clock, which in modern processors operates above 1 GHz. The counter provides exquisite resolution, but there are several potential downsides to using it directly as the clock source. To provide synchronous data transfers, the TSC is divided to some multiple of the bus clock where it can be synchronized to a quartz or surface acoustic wave (SAW) resonator using a phase-lock loop (PLL). The resonator is physically close to the central processing unit (CPU) chip, so heat from the chip can affect its frequency. Modern processors show wide temperature variations due to various workloads on the arithmetic and logical instruction units, and this can affect the TSC frequency.

In multiprocessor CPU chips, there is a separate TSC for every processor, and they might not increment in step or even operate at the same frequency. In systems like Windows, the timekeeping functions are directed to a single processor. This may result in unnecessary latency should that processor be occupied while another is free. Alternatively, some means must be available to discipline each TSC separately and provide individual time and frequency corrections. A method to do this is described in Section 15.2.5.

In early processors, the TSC rate depended on the processor clock frequency, which is not necessarily the same at all times. In sleep mode, for example, the processor clock frequency is much lower to conserve power. In the most modern Intel processors, the TSC always runs at the highest rate determined at boot time. In other words, the PLL runs all the time. Further complicating the issues is that modern processor technology can support out-of-order execution, which means that a TSC reading may appear earlier or later relative to a monotonic counter. This can be avoided by either serializing the instruction stream or using a serialized form of the instruction to read the TSC.

The ultimate complication is that, to reduce radio-frequency interference (RFI), a technique called spread-spectrum clocking can be utilized. This is done by modulating the PLL control loop with a triangular signal, causing the oscillator frequency to sweep over a small range of about 2 percent. The maximum frequency is the rated frequency of the clock, while the minimum in this case is 2 percent less. As the triangular wave is symmetric and the sweep frequency is in the kilohertz range, the frequency error is uniformly distributed between zero and 2 percent with mean 1 percent. Whether this is acceptable depends on the application. On some motherboards, the spread-spectrum feature can be disabled, and this would be the preferred workaround.

Considering these observations, the TSC may not be the ideal choice as the primary time base for the system clock. However, as explained in this topic, it may make an ideal interpolation means.

Real-Time Clock

The RTC function, often implemented in a multifunction chip, provides a battery backup clock when the computer power is off as well as certain additional functions in some chips. In the STMicroelectronics M41ST85W RTC chip, for example, an internal 32.768-kHz crystal oscillator drives a BCD counter that reckons the time of century (TOC) to a precision of 10 ms. It includes a square-wave generator that can be programmed from 1 to 32,768 Hz in powers of two. The oscillator frequency is guaranteed within 35 PPM (at 25°C) and can be digitally steered within 3 PPM. This is much better than most commodity oscillators and suggests a design in which the PIT is replaced by the RTC generator.

The RTC is read and written over a two-wire serial interface, presumably by a BIOS (Basic Input/Output System) routine. The data are read and written bit by bit for the entire contents. The BCD counter is latched on the first read, so reading the TOC is an atomic operation. The counter is also latched on power down, so it can be read at power-up to determine how long the power has been absent.

For laptops in power-down or sleep mode, it is important that the clock oscillator be as stable and as temperature insensitive as possible. The crystal used in RTC devices is usually AT-cut with the zero-coefficient slope as close to room temperature as possible. The characteristic slope is a parabola in which the highest frequency is near motherboard temperature and drops off at both higher and lower temperatures. For the M41ST85W chip, the characteristic frequency dependence is given by

$$\frac{\Delta f}{f} = -k\,(T - T_0)^2,$$

where $k = 0.036 \pm 0.006$ PPM/°C$^2$, and $T_0$ is 25°C. This is an excellent characteristic compared to a typical commodity crystal and would be the much preferred clock source, if available.
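To get a feel for the size of this effect, the parabola can be evaluated directly. The sketch below assumes the offset has the form -k (T - T0)^2 with k positive, which matches the description of a peak at T0 with the frequency dropping off on either side; the function name `rtc_freq_offset_ppm` is hypothetical.

```c
#include <assert.h>

#define RTC_K_PPM_PER_C2 0.036  /* nominal k from the text, PPM per degree C squared */
#define RTC_T0_C         25.0   /* turnover temperature T0 from the text */

/* Fractional frequency offset in PPM at temperature temp_c (degrees C).
 * Assumed form: offset = -k (T - T0)^2, zero at T0 and negative elsewhere. */
double rtc_freq_offset_ppm(double temp_c)
{
    assert(temp_c > -273.15);  /* sanity check only */
    double dt = temp_c - RTC_T0_C;
    return -RTC_K_PPM_PER_C2 * dt * dt;
}
```

Under these assumptions, a chip running 10°C away from T0 in either direction would be about 3.6 PPM slow.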

There is some art when incorporating an RTC in the system design, especially with laptops, for which the power can be off for a considerable time. First, it is necessary to correct the RTC from time to time to track the system time when it is disciplined by an external source. This is usually done simply by setting the system clock at the current time, which in most operating systems sets the RTC as well. In Unix systems, this is done with the settimeofday() system call about once per hour. Second, in a disciplined shutdown, the RTC and system clock time can be saved in nonvolatile memory and retrieved along with the current RTC time at a subsequent startup. From these data, the system clock can be set precisely within a range resulting from the RTC frequency error and the time since shutdown. Finally, the frequency of the RTC itself can be measured and used to further refine the time at startup.
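The disciplined-shutdown procedure can be sketched as follows. This is an illustration only: the record layout, the whole-second granularity, and the names `shutdown_record_t`, `restore_system_time`, and `restore_error_bound_s` are assumptions, not part of any particular operating system.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Values saved in nonvolatile memory at a disciplined shutdown. */
typedef struct {
    int64_t sys_s;  /* system clock at shutdown, seconds */
    int64_t rtc_s;  /* RTC reading at shutdown, seconds */
} shutdown_record_t;

/* System time at startup: the saved system time advanced by the
 * interval the RTC measured while the power was off. */
int64_t restore_system_time(const shutdown_record_t *rec, int64_t rtc_now_s)
{
    assert(rec != NULL && rtc_now_s >= rec->rtc_s);
    return rec->sys_s + (rtc_now_s - rec->rtc_s);
}

/* Worst-case error in seconds, given the RTC frequency tolerance in PPM
 * and the time since shutdown. */
double restore_error_bound_s(const shutdown_record_t *rec, int64_t rtc_now_s,
                             double rtc_tol_ppm)
{
    assert(rec != NULL);
    return (double)(rtc_now_s - rec->rtc_s) * rtc_tol_ppm * 1e-6;
}
```

For example, with a 35-PPM tolerance and one day without power, the bound works out to about 3 s. Measuring the actual RTC frequency, as the last step above suggests, would shrink this bound.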

Precision System Clock Implementation

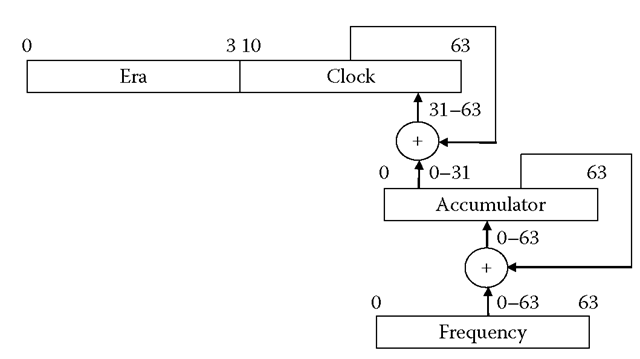

In this section, we examine software implementation issues for a precision system clock with time resolution less than 1 ns and frequency resolution less than 1 ns/s. The issues involve how to represent precision time values and how to convert between different representations. Most current operating systems represent time as two 32-bit or 64-bit words, one in seconds and the other in microseconds or nanoseconds of the second. Arithmetic operations using this representation are awkward and nonatomic. Modern computer architectures support 64-bit arithmetic, so it is natural to use this word size for the precision system clock shown in Figure 15.1.

FIGURE 15.1

Precision system clock format.

The clock variable is one 64-bit word in seconds and fraction with the decimal point to the left of bit 32 in big-endian order. Arithmetic operations are atomic, and no synchronization primitive, such as a spin-lock, is needed, even on a multiple-processor system.

The clock variable is updated at each timer interrupt, which adds an increment of $2^{32}/\mathrm{Hz}$ to the clock variable, where Hz is the timer interrupt frequency. This format is identical to NTP unsigned timestamp format, which of course is not accidental. It is convenient to extend this format to the full 128-bit NTP signed date format and include an era field in the clock format. The absence of the low-order 32 bits of the fraction is not significant since the 32-bit fraction provides resolution to less than 1 ns.

In general, the disciplined clock frequency does not divide the second, so an accumulator and frequency register are necessary. The frequency register contains the tick value plus the frequency correction supplied by the precision kernel discipline. At each tick interrupt, its contents are added to the accumulator. The high-order 32 bits of the accumulator are added to the low-order 32 bits of the clock variable as an atomic operation, then the high-order 32 bits of the accumulator are set to zero.
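A minimal sketch of the accumulator and frequency register follows. It assumes that the frequency register is scaled in units of $2^{-64}$ s per tick, so that the high-order 32 bits of the accumulator line up with the low-order bit of the clock fraction; the type and function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint64_t clock;  /* seconds in the high 32 bits, fraction in the low 32 bits */
    uint64_t accum;  /* high 32 bits: units of 2^-32 s; low 32 bits: residual */
    uint64_t freq;   /* tick value plus correction, in units of 2^-64 s per tick */
} sysclock_t;

/* floor(2^64 / d) using only 64-bit arithmetic; requires d >= 2. */
static uint64_t two64_div(uint64_t d)
{
    assert(d >= 2);
    return UINT64_MAX / d + ((UINT64_MAX % d) + 1 == d);
}

/* hz is the timer interrupt frequency; freq_adj is the (signed) frequency
 * correction from the discipline, in the same units as freq. */
void sysclock_init(sysclock_t *c, uint32_t hz, int64_t freq_adj)
{
    assert(c != NULL);
    c->clock = 0;
    c->accum = 0;
    c->freq = two64_div(hz) + (uint64_t)freq_adj;
}

/* Called once per timer interrupt. */
void sysclock_tick(sysclock_t *c)
{
    c->accum += c->freq;          /* add the frequency register */
    c->clock += c->accum >> 32;   /* move whole clock units into the clock */
    c->accum &= 0xFFFFFFFFu;      /* keep only the residual */
}
```

With a 1,000-Hz timer, which does not divide $2^{64}$ evenly, the residual carried in the accumulator keeps the clock within one low-order bit of 1 s after 1,000 ticks. In this sketch the add into the clock variable is a single 64-bit operation, so a carry out of the fraction propagates into the seconds.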

Furthermore, clock adjustments must be less than 68 years in the past or future. Adjustments beyond this range require the era field to be set by other means, such as the RTC. This model simplifies the discipline algorithm since timestamp differences can span only one era boundary. Therefore, given that the era field is set properly, arithmetic operations consist simply of adding a 64-bit signed quantity to the clock variable and propagating a carry or borrow in the era field if the clock variable overflows or underflows. If the high-order bit of the clock variable changes from a one to a zero and the sign bit of the adjustment is positive (0), an overflow has occurred, and the era field is incremented by one. If the high-order bit changes from a zero to a one and the sign bit is negative (1), an underflow has occurred, and the era field is decremented by one.
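The carry and borrow rules translate almost directly into code. The sketch below assumes a 32-bit signed era field alongside the 64-bit clock variable; `era_clock_t` and `era_clock_adjust` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    int32_t  era;    /* era number (assumed 32-bit signed for this sketch) */
    uint64_t clock;  /* seconds and fraction within the era */
} era_clock_t;

/* Add a signed 64-bit adjustment and propagate a carry or borrow
 * into the era field, following the high-order-bit rule in the text. */
void era_clock_adjust(era_clock_t *c, int64_t adj)
{
    assert(c != NULL);
    uint64_t old_top = c->clock >> 63;
    c->clock += (uint64_t)adj;            /* wraps modulo 2^64 */
    uint64_t new_top = c->clock >> 63;

    if (old_top == 1 && new_top == 0 && adj >= 0)
        c->era++;                         /* overflow */
    else if (old_top == 0 && new_top == 1 && adj < 0)
        c->era--;                         /* underflow */
}
```

An adjustment that leaves the high-order bit unchanged, or that changes it in the direction consistent with staying inside the era, leaves the era field alone.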

If a clock adjustment does span an era boundary, there will be a tiny interval while the carry or borrow is propagated and where a reader could see an inconsistent value. This is easily circumvented using Lamport’s rule in three steps: (1) read the era value, (2) read the system clock variable, and (3) read the era value again. If the two era values are different, do the steps again. This assumes that the time between clock readings is long compared to the carry or borrow propagate time.
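The three-step read can be sketched with C11 atomics. This illustrates the reader side only and assumes the writer updates the clock variable and the era field as separate atomic stores; the names `shared_clock_t` and `shared_clock_read` are hypothetical.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    _Atomic int32_t  era;
    _Atomic uint64_t clock;
} shared_clock_t;

typedef struct {
    int32_t  era;
    uint64_t clock;
} clock_reading_t;

/* Read era, clock, era; retry if the era changed in between. */
clock_reading_t shared_clock_read(shared_clock_t *sc)
{
    assert(sc != NULL);
    clock_reading_t r;
    int32_t era_after;
    do {
        r.era     = atomic_load(&sc->era);    /* step 1 */
        r.clock   = atomic_load(&sc->clock);  /* step 2 */
        era_after = atomic_load(&sc->era);    /* step 3 */
    } while (era_after != r.era);
    return r;
}
```

Because era changes occur roughly once every 136 years, the retry path is essentially never taken, so the cost is one extra load per reading.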

The 64-bit clock variable represented in seconds and fraction can easily be converted to other representations in seconds and some modulus m, such as microseconds or nanoseconds. To convert from seconds-fraction to modulus m, multiply the fraction by m and right shift 32 bits. To convert from modulus m to seconds-fraction, multiply by $2^{64}/m$ and right shift 32 bits.
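Both conversions fit in 64-bit arithmetic without a divide in the fast path. The following sketch uses hypothetical function names; in practice the constant $2^{64}/m$ would be precomputed once for each modulus rather than recomputed on every call.

```c
#include <assert.h>
#include <stdint.h>

/* floor(2^64 / m) using only 64-bit arithmetic; requires m >= 2. */
static uint64_t two64_div(uint64_t m)
{
    assert(m >= 2);
    return UINT64_MAX / m + ((UINT64_MAX % m) + 1 == m);
}

/* 32-bit fraction (units of 2^-32 s) to units of 1/m s,
 * for example m = 1000000000 for nanoseconds. */
uint32_t frac_to_units(uint32_t frac, uint32_t m)
{
    return (uint32_t)(((uint64_t)frac * m) >> 32);
}

/* Units of 1/m s to a 32-bit fraction; requires units < m so that
 * the 64-bit product cannot overflow. */
uint32_t units_to_frac(uint32_t units, uint32_t m)
{
    assert(units < m);
    return (uint32_t)((units * two64_div(m)) >> 32);
}
```

Both directions truncate rather than round, so a round trip can lose one unit in the last place. A caller that needs exact round trips would add a rounding term before the shift.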

Precision System Clock Operations

The precision system clock design presented in this section is based on an earlier design for the Digital Alpha running the 64-bit OSF/1 kernel. The OSF/1 kernel uses a 1,024-Hz timer frequency, so the amount to add at each tick interrupt is $2^{32}/1{,}024 = 2^{22} = 4{,}194{,}304$. The frequency gain is $2^{-32} = 2.328 \times 10^{-10}$ s/s; that is, a change in the low-order bit of the fraction yields a frequency change of $2.328 \times 10^{-10}$ s/s.

Without interpolation, this design has a resolution limited to $2^{-10}$ s = 977 µs, which may be sufficient for some applications, including software timer management. Further refinement requires the PCC to interpolate between timer interrupts. However, modern computers can have more than one processor (up to 14 on the Alpha), each with its own PCC, so some means are needed to wrangle each PCC to a common timescale.

The design now suggested by Intel to strap all clock functions to one processor is rejected as that processor could be occupied with another thread while another processor is available. Instead, any available processor can be used for the clock reading function. In this design, one of the processors is selected as the master to service the timer interrupt and update the system clock variable c(t). Note that the interpolated system clock time s(t) is equal to c(t) at interrupt time; otherwise, it must be interpolated.

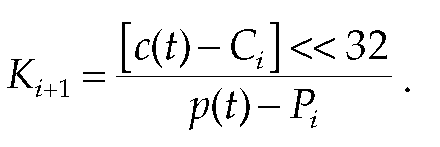

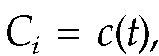

A data structure is associated with each processor, including the master. For convenience in the following, sequential updates for each structure are indexed by the variable i. At nominal intervals less than 1 s (staggered over all processors), the timer interrupt routine issues an interprocessor interrupt to each processor, including the master. At the ith timer interrupt, the interprocessor interrupt routine sets structure members $C_i$ and $P_i$ and updates a structure member $K_i$ as described in the following discussion.

During the boot process, i is set to 0, and the structure for each processor is initialized as discussed. For each structure, a scaling factor $K_0$, computed from the measured processor clock frequency $f_c$, is initialized. The $f_c$ can be determined from the difference of the PCC over 1 s measured by a kernel timer. As the system clock is set from the RTC at initialization and not yet disciplined to an external source, there may be a small error in $K_0$, but this is not a problem at boot time.

The system clock is interpolated in a special way using 64-bit arithmetic but avoiding divide instructions, which are considered evil in some kernels. Let s(t) be the interpolated system clock at arbitrary time t and i be the index of the most recent structure for the processor reading the clock. Then,

Note that to avoid overflow, the interval between updates must be less than 1 s.

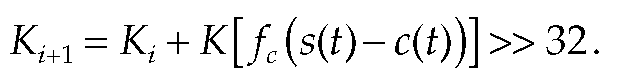
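One way to realize a divide-free interpolation is sketched below. The exact formula is an assumption here: it takes the per-processor structure to hold the system clock value C and the PCC value P captured at the last update, and a scaling factor K of about $2^{64}/f_c$, so that multiplying K by the elapsed cycle count and shifting right 32 bits yields an offset in units of $2^{-32}$ s. The names `cpu_clock_t` and `interpolate_clock` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint64_t C;  /* system clock at the last update, seconds.fraction (32.32) */
    uint64_t P;  /* PCC value at the last update */
    uint64_t K;  /* scaling factor, assumed to be about 2^64 / f_c */
} cpu_clock_t;

/* Interpolated clock for a PCC reading taken after the last update.
 * The 64-bit product stays in range only while less than 1 s of cycles
 * has elapsed, which is why updates must be under 1 s apart. */
uint64_t interpolate_clock(const cpu_clock_t *cc, uint64_t pcc_now)
{
    assert(cc != NULL);
    uint64_t cycles = pcc_now - cc->P;
    return cc->C + ((cc->K * cycles) >> 32);
}
```

With this scaling, the product of K and the elapsed cycles approaches $2^{64}$ as the elapsed time approaches 1 s, which is consistent with the overflow condition noted above.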

The scaling factor $K$ is adjusted in response to small variations in the apparent PCC frequency, adjustments produced by the discipline algorithm, and jitter due to thread scheduling and context switching. During the i + 1 interprocessor interrupt, but before the $C_i$ and $P_i$ are updated, $K$ is updated:

Note that the calculation shown here uses a divide instruction, but this is done infrequently. For instance, if the interval spans just under 1 s and if

Note that only small adjustments in K will be required, which suggests that the adjustment can be approximated with sufficient accuracy while avoiding the divide instruction. If we observe that for small

The value of $f_c$ was first measured when $K_0$ was computed so is not known exactly. However, small inaccuracies will not lead to significant error.
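A divide-based update of the scaling factor might look like the following sketch. The formula is an assumption chosen to be dimensionally consistent with a 32.32 clock variable and a final right shift of 32 bits: the elapsed system clock time, shifted left 32 bits, is divided by the elapsed PCC cycles. The function name `update_scale` is hypothetical, and the divide-free approximation mentioned above is not attempted.

```c
#include <assert.h>
#include <stdint.h>

/* New scaling factor from the system clock and PCC values at the
 * previous update (c_prev, p_prev) and at this update (c_now, p_now).
 * Clock values are in seconds.fraction (32.32) format. */
uint64_t update_scale(uint64_t c_now, uint64_t c_prev,
                      uint64_t p_now, uint64_t p_prev)
{
    uint64_t dt = c_now - c_prev;       /* elapsed time, units of 2^-32 s */
    uint64_t cycles = p_now - p_prev;   /* elapsed PCC cycles */

    assert(cycles != 0);
    assert(dt < ((uint64_t)1 << 32));   /* interval must be under 1 s */
    return (dt << 32) / cycles;
}
```

For a nominal 1-GHz PCC observed over 0.5 s, this yields the same value as the truncated quotient $2^{64}/10^9$. If the PCC counts more cycles than expected over the same interval, the factor comes out smaller.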

Notwithstanding the careful management of residuals in this design, there may be small discontinuities due to PCC frequency variations or discipline operations. These may result in tiny jumps forward or backward. To conform to required correctness assertions, the clock must never jump backward or stand still. For this purpose, the actual clock reading code remembers the last reading and guarantees the clock readings to be monotone-definite increasing by not less than the least-significant bit in the 64-bit clock value.

However, to avoid lockup if the clock is unintentionally set far in the future, the clock will be stepped backward only if the backward step is more than 2 s. Ordinarily, this would be done only in response to an explicit system call.
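The monotonicity rule and the 2-s escape can be combined in a small filter applied to every clock reading. This is a sketch under the assumption that readings are 64-bit seconds.fraction values and that the caller serializes access to the remembered last reading; `monotonic_reading` and `STEP_THRESHOLD` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define STEP_THRESHOLD ((uint64_t)2 << 32)  /* 2 s in 32.32 format */

/* Return a reading that is strictly greater than the previous one,
 * unless the clock has been stepped backward by more than 2 s,
 * in which case the step is accepted. */
uint64_t monotonic_reading(uint64_t *last, uint64_t now)
{
    assert(last != NULL);
    if (now <= *last && *last - now <= STEP_THRESHOLD)
        now = *last + 1;   /* advance by one least-significant bit */
    *last = now;
    return now;
}
```

Small backward jumps caused by PCC frequency variations are absorbed one low-order bit at a time, while a deliberate backward step larger than the threshold passes through unchanged.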