Modeling Natural Phenomena

Except for the pixel- and voxel-based types, the representation schemes we have discussed so far are not very useful for modeling natural phenomena. Objects such as trees, mountains, grass, or various terrain cannot easily be modeled by linear poly-hedra or smooth surface patches. Using very small pieces in the representation would overwhelm one with massive amounts of data. Even if this were not a problem, it would not be a satisfactory solution. The picture might look all right at the start, but what if one were to zoom in? One would have to adjust the fineness of the subdivision dynamically to prevent things from eventually looking flat. Modeling and rendering natural phenomena is a digression from the main thrust of this topic. For that reason, we shall only take a brief look at this subject. The four topics we consider are fractals, iterated function systems, grammar based models, and particle systems.

Fractals. One of the most important applications of fractals to graphics is in the representation of natural phenomena. For a definition of a fractal, see Section 22.3. They enable one to represent such phenomena in a realistic way with a small amount of data. The zooming problem also is no problem here. There is one caveat however. Fractals are typically used to represent “generic” trees, mountains, or whatever. They do not lend themselves easily to represent a specific tree or mountain. This is usually not an issue though.

Why are fractals so great for modeling certain natural phenomena? To begin with let us show how fractal curves and surfaces can be generated. The basic construction generalizes that of the well-known Koch curve (see Section 22.3).

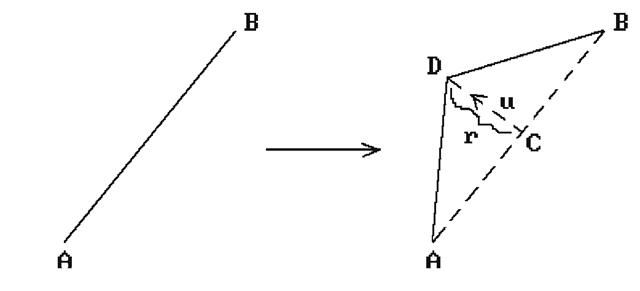

In the one-dimensional case, the algorithm starts with a given initial polygonal curve and then generates a sequence of new curves, each of which adds more detail to the previous one. In every iteration we replace each segment of the old curve with a new curve segment. The simplest way to do this is to displace the midpoint of the segment by a random amount along the perpendicular bisector. See Figure 5.28. Given the segment AB, let C be its midpoint. Compute a unit normal vector u for it, choose a suitable random number r based on the current scale, and replace AB by the segments AD and DB, where C is the midpoint of AB and D = C + ru.

Figure 5.28. Curve midpoint displacements.

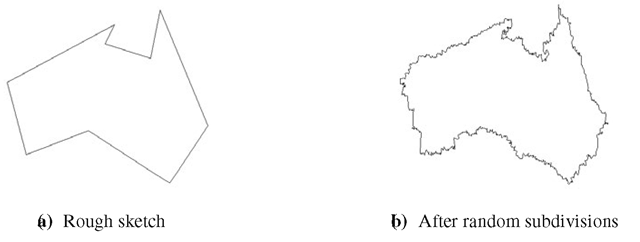

Figure 5.29. A fractal island.

Now, to describe some natural shape such as the boundary of an island proceed as follows: Specify the rough outline of island with a polygonal curve and then apply the algorithm described above, that is successively replace each edge with an appropriate collection of edges. Figure 5.29 shows one possible result after starting with an approximation to the Australian continent. One does have to deal with the problem of self-intersections in the resulting curves.

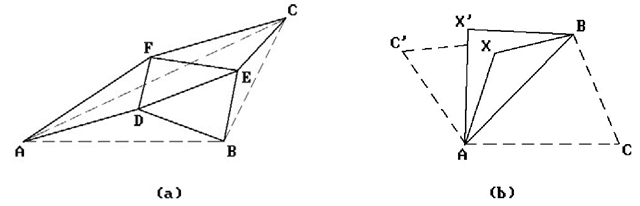

In the two-dimensional case, we have more freedom. For example, for surfaces described as a collection of triangles one common approach is to do the following: “Subdivide” each triangle into smaller triangles obtained by connecting its vertices to appropriate random offsets of the midpoints of its sides. This replaces each triangle successively by seven new smaller triangles and the process can be repeated. In Figure 5.30(a) the midpoints of the edges of triangle ABC were offset to D, E, and F, and the triangle replaced by triangles ABD, BDE, BEC, CEF, ACF, ADF, and DEF. A similar construction works for quadrilaterals. There is one complication in the twodimensional case, namely, if one is not careful, then gaps can appear in places where triangles used to be adjacent. Figure 5.30(b) shows the potential problem if we offset the midpoint of the edge AB to X and X’ with respect to the triangles ABC and ABC’, respectively. One has to make sure that one uses the same random number and normal when offsetting an edge with respect to both triangles that have it in common.

Figure 5.30. Surface midpoint displacements.

Some good references on fractals are [Mand83] and [DevK89].

Iterated Function Systems. Iterated function systems are an elegant way to generate fractals. We refer the reader to Section 22.4 for a brief discussion. [Barn88] is a good reference.

Grammar-Based Models. Building on work of Lindenmayer ([Lind68]) on parallel graph grammars, Smith ([Smit84]) described a class of plant models that he called graftals. The modeling involved two stages: first one generates a formal string from an initial string using production rules and then the image is generated by interpreting this string as a geometric tree in a suitable way. Graftals were not necessarily fractals, but were very similar in that one could generate as much detail as desired. Very realistic plants and trees could be generated using botanical laws.

Particle Systems. Particle systems, introduced in [Reev83], were good at modeling phenomena that was “fluid-like” and changed over time by flowing or spattering, such as clouds, smoke, fire, water falls, and water spray. Typical particles were spheres and ellipsoids. They would be created in a random way with respect to size, position, shape, and other properties and then randomly deleted. During their lifetime their paths, which could be controlled by specified physical forces, would be rendered in appropriate colors. See also [ReBl85].

Most models above are what are called procedural models. We have more to say about this later in Sections 5.6 and 5.11.

Physically Based Modeling

The kind of modeling we have discussed so far has dealt mostly with static objects. Allowing for animation would not change that since animation is nothing but a matter of generating a sequence of views of static objects (rather than a single view). This static modeling is what the traditional CAD systems do and is quite adequate in aiding users in the tasks for which they are used. The real world does not consist of isolated objects however. Objects interact due to a variety of forces. We need to broaden our outlook. Another goal should be to facilitate the modeling of the real world with its interactions. Geometric modeling, the modeling of isolated static objects, is an important step toward modeling real world scenes, but it is only a first step. The next step is to make it easier for users to include the interactions of the objects.

For example, if we wanted to model a ball in a scene with a cloth draped over it, we could do it with the standard modeling system, but it would take quite some effort. We would have to figure out the creases in the cloth on our own. These creases are determined by gravity and other physical forces associated to the particular material from which the cloth is made. We could use the relevant equations of physics to define the set of spline surface patches, or whatever, that would generate the correct picture. How much easier it would be if we only had to tell the CAD program the position and radius of the ball, the material properties of the flat cloth, the starting position of the cloth parallel to the floor at the top of the ball, and then let the program compute the final shape of the cloth after it has reached equilibrium with respect to the forces acting on it. Obviously, a program that could do this would have to have the relevant equations and algorithms programmed within it, but this would only have to be done once.

As another example, suppose that we wanted to show a ball bouncing on a floor. Again, we could do this animation ourselves with a traditional CAD system by determining by hand the series of positions of the ball along with the time intervals between those positions that made up the animation. Why can the CAD program not do this for us, so that all we had to input was an initial height from which the ball is dropped? Obviously, the CAD system could be programmed to do this. This would take some hard work, but again, it would only have to be done once, and then it could help many users in this and similar types of problems.

Modeling that also considers the dynamics of physical objects in addition to their static properties is called physically based modeling. The objects may be simple particles or rigid objects, but could also be much more complex, like cloth. As indicated earlier, we are not really dealing with a new representation scheme but rather an extension of “traditional” representation schemes. This is a relatively new branch of computer graphics, with the name “physically based modeling” being introduced for the first time in an ACM SIGGRAPH 87 course by A.H. Barr ([BarrA87]). To carry out its program involves a great deal of knowledge about physics and other sciences.

Physically based modeling can be interpreted quite generally to encompass the three main areas in computer graphics, modeling, rendering, and animation, but at its core, it deals with classical dynamics of rigid or flexible bodies, interaction of bodies, and constraint-based control. An active area of research is how a user can best control the models. There is a trade-off between realism and control. If the models perform realistically, they are typically controlled by differential equations and the only control a user has in initial conditions. On the other hand, giving the user more control might mean that objects will perform less realistically. Constraint-based techniques are a common way to deal with this problem. This includes constraints defined by equations in physics but also refers to situations where we would like the user to be able to say things like “move object A so that it touches both objects B and C,” “let a ball roll down a hill following a given path,” or “show a moving robot, figure, or object in a domain with obstacles.” Unfortunately, if constraints are not chosen carefully, we may create underconstrained situations where there is no unique solution, or overconstrained situations where there is no solution.

For a more thorough discussion of physically based modeling see the references in that section of the bibliography.

Parametric and Feature-Based Modeling

We have mostly talked about various technical aspects of modeling systems, but a good modeler must also take the user’s or designer’s point of view into account. The difference between a machine representation and a user representation was briefly alluded to in Section 5.3. We also touched on this subject in our discussion of generative modeling in Section 5.3.5. Users should not have to be forced to adapt their way of describing geometry to any low-level abstractions due to technical requirements of a modeler. Defining nontrivial geometric models is usually a difficult task in the best of circumstances. If possible, the process should require no more expertise than the understanding of the final model. Of course, there are times when knowing the underlying mathematics is essential to building a model and so the option of taking advantage of it should be there. Nevertheless, for those times when it is not, we would like a modeler to have the ability to understand high-level, user-friendly concepts. Of course, what is considered user friendly depends on the user. In this section we are concerned with manufacturing environments, where, for example, designers often think of geometric objects in terms of important features that are associated to them, such as, “a block with a slot and rounded corners.” Today’s modelers have a long way to go in fully supporting that type of interface. This section will introduce the reader to what is referred to as feature-based modeling. [ShaM95] is a good reference for this subject. A brief survey can be found in [SodT94].

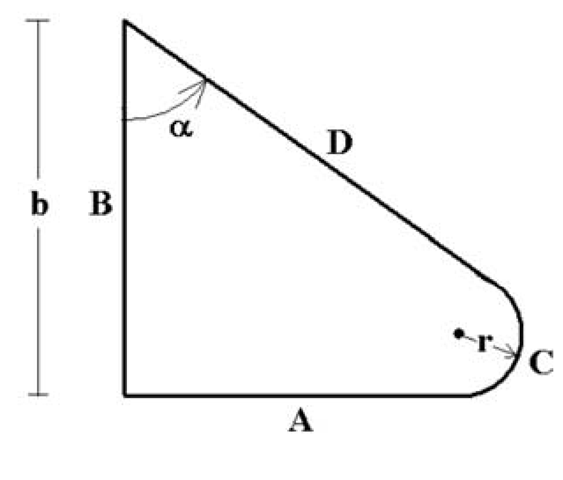

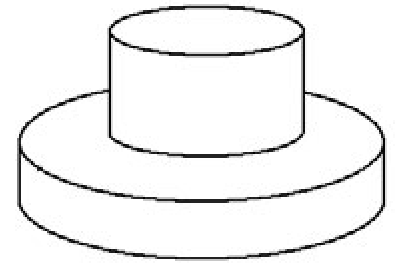

Systems using parametric or variational models were a first step toward feature-based modeling and making life easier for the designer. As is pointed out in [ShaM95], perhaps 80% of new designs are simply variations of old ones. Therefore, given the effort that is sometimes needed to create an individual model, why should one have to create each of these variants from scratch? As an oversimplified example of the type of interface that would be found in a modeler using parametric models, the object in Figure 5.31 might be defined by the following sorts of commands:

Figure 5.31. A parametric model.

(1) horizontal line A of length a

(2) line B perpendicular to line A of length b

(3) line D makes signed angle a with line B

(4) circular arc C tangent to lines A and D

It would then be up to the system to check if this description gives rise to a unique consistent model for particular values of a and b. The basic design process in such a modeling system is then that the user describes a list of geometric primitives to be used and the geometric relationships between them symbolically without any numbers. After the user inputs the actual geometric constraints, the system creates an actual instance of the object if possible. The user can subsequently input new data and get new instances of the object. Note that the “parametric” models considered here are higher-level constructs than those in the generative representation discussed in Section 5.3.5.

Although the terms “parametric” and “variational” are often used interchangeably, there is a subtle distinction between what are called parametric and variational methods. Parametric methods solve constraints by replacing symbolic variables by values that are computed sequentially from previously computed variables. Variational methods use equations to represent constraints and solve them simultaneously. The difference is captured by the difference between defining a variable via a formula or implicitly. For more on parametric and variational modeling see [ShaM95]. Some sample papers on constraint-based modeling with additional references are [LiHS02] and [Podg02].

An approach to geometric design based on functional programming that extends variational modeling is described in [PaPV95]. The authors discuss a high-level functional programming language (a language that manipulates functions) with the underlying geometric objects represented in a hierarchical manner much like in CSG. Elementary polyhedra are stored as inequalities and the basic Boolean set operations are supported. The language is such that all syntactically correct objects are valid. It is argued that the power of this functional approach is that it lets the user naturally generate new models from old ones and is similar to generative modeling in this respect.

Parametric and variational modeling is a start toward facilitating geometric design, but it still only deals with individual geometric primitives with no grouping capabilities and lacks a vision of the whole modeling process. Consider a manufacturing company. Its ability to deal with the design, planning, and manufacturing process in an integrated way is clearly of practical importance. To do this one needs to model the whole process. However, the models used by a designer should be allowed to be different from those used by the person manufacturing the product about which the designer may know little. Both may evolve over time and one only needs a way to map from one to the other. Feature modeling seems like a promising approach to an integrated solution. Again, the problem with the type of modelers we have been discussing up to now is that they dealt solely with the geometry of objects and ignored many of the other issues such as process planning, assembly planning, and inspection planning. Even in the case of just the geometry they were not totally adequate since they tended to be low-level and did not make it easy for a designer to make changes, although parametric modeling helped.

Feature-based modeling dates back to the mid-1970s when attempts were made to get data on manufacturing features for NC programming. Kyprianou [Kypr80] was the person who first introduced automated feature recognition into a modeler (BUILD). [PraW85] was one of the earliest studies of “design by features.” So what exactly is a “feature?”

The term “feature” refers to a high-level abstract entity. It refers to some interesting aspect of, or associated to, an object and is usually a combining of details into one entity that is more meaningful for manipulation than the individual parts. For example, in a b-rep modeler a block with a hole through it might consist of a collection of surface patches with no explicit notion of the center and radius of the hole. Moving the hole might then involve moving and changing a subset of these patches -a tedious task. The term “feature” was first used in manufacturing but has since taken on a broader meaning. Machined parts typically can be described by things like holes, slots, pockets, and grooves. A relatively small collection of such features might have been adequate to describe a part in a particular manufacturing environment and with them one might then be able to create a manufacturing plan. Features are important to automating the design to manufacturing process because they help define the functionality of objects. [ShaM95] lists the following characteristics of a feature:

(1) It is a physical constituent of a part.

(2) It is mappable to a generic shape.

(3) It has engineering significance. (This may involve describing its function or how it “behaves.”)

(4) It has predictable properties.

A feature model is a data structure representing a part or assembly mainly in terms of its constituent features. It is convenient to subdivide features into the following subtypes:

|

(1) |

Geometric features |

|

|

(a) Form features: |

They describe some idealized geometry. |

|

|

(b) Tolerance features: |

They describe variance constraints from the idealized geometry. |

|

|

(c) Assembly features: |

This is a grouping of various feature types into a larger entity and includes kinematic relationships. |

|

|

(2) |

Functional features: |

These describe the overall function of the part. |

|

(3) |

Material features: |

These give material names, specify treatments such as painting, etc. |

Figure 5.32 shows a standard example of what one means by form features. It is a slightly modified version of the CAM-I ANC101 part that is not the picture of any real functioning object but is simply used to test geometric capabilities of modelers. (CAM-I is an abbreviation for Computer Aided Manufacturing, Inc., a nonprofit consortium in Arlington, Texas.) Form features can be primitive or compound. For example, one can talk about a specific pattern of holes rather than just an individual hole.

Figure 5.32. Modified ANC101 test part (CAM-I and [ShaM95]).

Tolerance constraints are needed to ensure that parts will work as specified given the inevitability of inaccuracies in the manufacturing process. The three geometric features (form, tolerance, and assembly features) are the ones that are mostly supported by modelers. Support for functional features is currently still very weak because it assumes a lot more intelligence on the part of modelers than they currently have. Although there is no limit on the number of features one can define, attempts have been made to create taxonomies for them. This is important if one is to have any data exchange standards.

Just as one has to worry about the validity of geometry, one has to also make sure that features are created, modified, and deleted in a valid way. Unfortunately, this is not a mathematical process like it was in the case of geometry. [ShaM95] mentions four general classes of validity checks that are needed:

|

(1) |

Attachment validation: |

A recess feature cannot be attached to an outside face of a block. |

|

(2) |

Dimension limits: |

A hole cannot be larger than the block that contains it. |

|

(3) |

Location limits: |

A hole should not get too close to the edge of a block. |

|

(4) |

Interaction limits: |

This is where two or more features change the geometry or semantics of features. The geometry may not necessarily be invalid. For example, a larger hole may delete a smaller hole. |

As one moves from one stage to another in the manufacturing process, the features that are relevant to the persons involved may change. At the design stage, one may worry about the strength of a particular geometric configuration, whereas at the manufacturing stage this may no longer be relevant and the only features one may care about is where certain holes are to be drilled. This calls for mappings from one feature model to another and is referred to as feature mapping.

Modelers are typically not concerned with features as such, at least not explicitly. One either needs to add to them the ability to deal with features or add features as a set of primitive data structures. For example, CSG is not detailed enough for many features and b-rep is too detailed. So how do we add feature capability to modelers? The three standard approaches are

|

(1) |

Interactive feature definition: |

A human determines the features either at model creation time or later. |

|

(2) |

Automatic feature recognition: |

Here one extracts feature information a posteriori if the geometric models are already defined. |

|

(3) |

Design by feature: |

Here one designs with features in the first place. |

Approach (1) is easiest to implement, but it is up to the user to check for validity and it may be a tedious job if there are lots of features. Approach (2) is much more complicated than (1), but has been incorporated in some modelers (for example, BUILD). A number of different algorithms have been developed to get a handle on the recognition problem. One basically needs a program that looks for patterns. For example, to look for a pocket in a face one could look for a cycle of edges at which the solid has a convex corner. Difficult as automatic feature detection may be, one may need it if different features are used at different stages in the manufacture of an object. With regard to approach (2), some features could be defined at model creation time, as when one creates a slot by sweeping. However, these would by in large be purely geometric features and not all features are that. Furthermore, the primitive operations of a modeler may not directly correspond to the features that are of interest to someone and some may lead to ambiguous features (see Figure 5.33). A good overview of feature recognition techniques can be found in [JiMa97].

Approach (3) is probably the most attractive. A modeler might have a menu allowing a designer to create an object in terms of its features. One would be able to create an object with a slot or hole in essentially one step, or at least in a number of steps that depended on the number of varying parameters for that particular shape. Figure 5.34 shows 10 of the 13 steps needed to create the ANC101 part in Figure 5.32. Although this might make life easier for the designer, it would certainly make life much harder for the implementer of this modeler. The problem is validity. The modeler would have to make sure that the chosen features were consistent, a difficult task in general. For example, if someone defined a block with a hole, the modeler would have to make sure that the hole was not too close to the side so that it would break through. The bigger the collection of features, the more checking that would have to be done. Roller ([Roll95]) discusses designing with constrained parameterized features.

There are two ways to deal with feature definitions in a design-by-feature system, procedural or declarative, although these can be combined. In the procedural approach, features are defined by a collection of procedures in the programming language of the system. In the declarative approach a feature definition consists of a collection of constraint specifications, rules, or predicate logic statements. Satisfaction of the declarations is accomplished by a constraint satisfaction algorithm, an inference engine, or unification, respectively. Three basic approaches are used for solving the constraint problem: constraint graphs where the nodes are geometric entities and the edges are the constraints, logical assertions, or algebraic equations that are expressed symbolically and solved symbolically. None of the approaches are easy. See [LiON02] for an example of feature mapping in a design-by-feature system.

Figure 5.33. Ambiguous features: boss on disk or flange on cylinder?

Figure 5.34. Designing with features ([ShaM95]).

In conclusion, many modelers are now supporting features. Overall, b-rep modelers, in particular those that support nonmanifolds, seem better suited to the feature recognition task than CSG modelers. The only advantage of the latter is in editing but this can also be dealt with in a b-rep modeler. For example, consider an object with slots of varying lengths. Changing the length of the slots may cause them to intersect. With a CSG representation the history of any changes can be maintained relatively easily, whereas with a boundary representation such changes might cause radical changes in the relationships of facets. [Prat87b] mentions the following feature-specific advantages of a boundary representation:

(1) Features are usually best described in terms of faces, edges, etc.

(2) Dimensioning and tolerancing of features need these low-level entities.

(3) CSG representations can be ambiguous. See Figure 5.33 again.

(4) Local operations for feature manipulation are available in the design stage.

Feature recognition algorithms for b-rep modelers divide into two types: those that use purely surface information and those that use volume decompositions. The latter seem to be a better approach but are not as developed yet.

Finally, since there are a number of feature-based modelers, it is important that one can exchange data between them. One might also want to input some feature data to an application program. STEP (Standard for exchange of product data) is a set of standards that resulted from an international effort to enable this exchange. See [ShaM95] for an overview and additional references.

![Modified ANC101 test part (CAM-I and [ShaM95]). Modified ANC101 test part (CAM-I and [ShaM95]).](http://what-when-how.com/wp-content/uploads/2012/06/tmp303812_thumb.png)

![Designing with features ([ShaM95]). Designing with features ([ShaM95]).](http://what-when-how.com/wp-content/uploads/2012/06/tmp303814_thumb.png)