Congestion avoidance is used to avoid tail drop, which has several drawbacks. RED and its variations, namely WRED and CBWRED, are congestion-avoidance techniques commonly used on Cisco router interfaces. Congestion avoidance is one of the main pieces of a QoS solution.

Tail Drop and Its Limitations

When the hardware queue (transmit queue, TxQ) is full, outgoing packets are queued in the interface software queue. If the software queue becomes full, new arriving packets are tail-dropped by default. Tail drop does not discriminate: the dropped packets can have high or low priority and can belong to any conversation (flow). Tail drop continues until the software queue has room. Tail drop has some limitations and drawbacks, including TCP global synchronization, TCP starvation, and lack of differentiated (or preferential) dropping.
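A minimal Python sketch of default tail-drop behavior, assuming the software queue can be modeled as a bounded FIFO of (flow, IP precedence) tuples. The class name `TailDropQueue` and the packet format are hypothetical. Once the queue is full, a precedence 5 VoIP packet is discarded exactly like best-effort web traffic.

```python
from collections import deque


class TailDropQueue:
    """Bounded FIFO software queue that tail-drops arrivals when full."""

    def __init__(self, limit):
        self.limit = limit        # maximum number of packets the queue holds
        self.queue = deque()
        self.dropped = []         # packets discarded by tail drop

    def enqueue(self, packet):
        # Flow and priority are ignored: when the queue is full, every
        # arriving packet is dropped.
        if len(self.queue) >= self.limit:
            self.dropped.append(packet)
            return False
        self.queue.append(packet)
        return True

    def dequeue(self):
        # Frees one slot, as when the hardware queue (TxQ) accepts a packet.
        return self.queue.popleft() if self.queue else None


q = TailDropQueue(limit=3)
for packet in [("ftp", 0), ("web", 0), ("ftp", 0), ("voip", 5), ("web", 0)]:
    q.enqueue(packet)           # packets are (flow, IP precedence)
print(q.dropped)  # [('voip', 5), ('web', 0)]
```

Tail drop stops only when a slot frees up (here, via `dequeue`), matching the "continues until the software queue has room" behavior.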

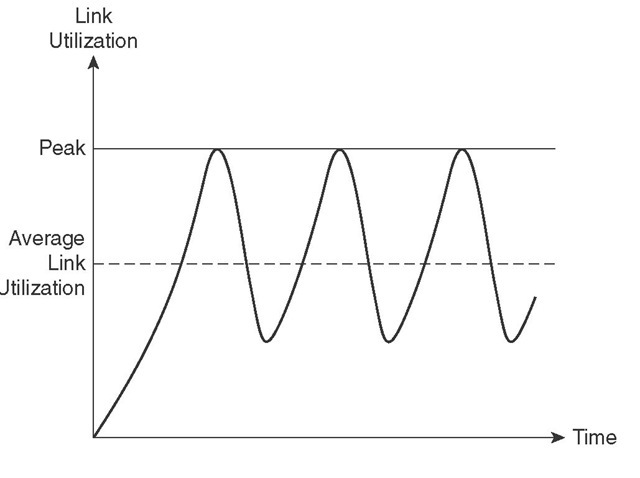

When tail drop happens, TCP-based traffic flows simultaneously slow down (go into slow start) by reducing their TCP send window size. At this point, the bandwidth utilization drops significantly (assuming that there are many active TCP flows), interface queues become less congested, and TCP flows start to increase their window sizes. Eventually, interfaces become congested again, tail drops happen, and the cycle repeats. This situation is called TCP global synchronization. Figure 5-1 shows a diagram that is often used to display the effect of TCP global synchronization.

Figure 5-1 TCP Global Synchronization

The symptom of TCP global synchronization, as shown in Figure 5-1, is waves of congestion followed by troughs during which time links are underutilized. Both overutilization, causing packet drops, and underutilization are undesirable. Applications suffer, and resources are wasted.
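The waves and troughs can be reproduced with a toy model. This Python sketch assumes identical flows that grow their window by one packet per tick while there is no loss and halve it when the offered load exceeds link capacity. That is a simplification: real TCP may fall back to slow start instead. The function name and all numbers are hypothetical.

```python
def simulate_tail_drop(num_flows=4, capacity=40, ticks=30):
    """Toy model of TCP global synchronization under tail drop.

    Returns link utilization (0.0 to 1.0) for each tick. Not real TCP.
    """
    windows = [1] * num_flows          # per-flow send window, packets per tick
    utilization = []
    for _ in range(ticks):
        offered = sum(windows)
        utilization.append(min(offered, capacity) / capacity)
        if offered > capacity:
            # Queue overflows: tail drop hits every flow at the same moment,
            # so every flow backs off at the same moment.
            windows = [max(1, w // 2) for w in windows]
        else:
            # No loss: every flow probes for more bandwidth.
            windows = [w + 1 for w in windows]
    return utilization


u = simulate_tail_drop()
print(u[8:19])
# [0.9, 1.0, 1.0, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0, 1.0, 0.5]
```

Utilization climbs to 100 percent, collapses to 50 percent when all flows back off together, and the cycle repeats, so the average stays below link capacity.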

When traffic is excessive and nothing remedies it, queues fill up, tail drop happens, and aggressive flows are not selectively punished. After tail drops begin, TCP flows slow down simultaneously, but non-TCP flows, such as User Datagram Protocol (UDP) and non-IP traffic, do not. Consequently, non-TCP traffic starts filling up the queues and leaves little or no room for TCP packets. This situation is called TCP starvation. In addition to global synchronization and TCP starvation, tail drop has one more flaw: it does not take packet priority or loss sensitivity into account. When the queue is full, every arriving packet is dropped, regardless of its priority. This lack of differentiated dropping makes tail drop more devastating for loss-sensitive applications such as VoIP.

Random Early Detection

RED was invented as a mechanism to prevent tail drop. RED drops randomly selected packets before the queue becomes full. As the queue grows, so does the probability of dropping incoming packets, so the drop rate rises with the queue size. RED does not differentiate among flows; it is not flow oriented. However, because RED selects the packets to be dropped randomly, packets belonging to aggressive (high-volume) flows are statistically expected to be dropped more often than packets from less aggressive flows.
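To see why random selection punishes aggressive flows without tracking flows, this Python sketch holds the drop probability fixed at 0.1 (RED would actually vary it with the queue size) and sends 9,000 packets from a hypothetical bulk flow and 1,000 from a hypothetical interactive flow. The flow names, counts, and seed are illustrative.

```python
import random


def random_drop_counts(packets_per_flow, drop_probability, seed=1):
    """Count drops per flow when every packet faces the same drop probability."""
    rng = random.Random(seed)           # seeded so runs are repeatable
    drops = {}
    for flow, count in packets_per_flow.items():
        # The drop decision never looks at which flow the packet belongs to.
        drops[flow] = sum(1 for _ in range(count) if rng.random() < drop_probability)
    return drops


drops = random_drop_counts({"bulk-ftp": 9000, "telnet": 1000}, drop_probability=0.1)
print(drops)  # roughly 900 drops for bulk-ftp versus roughly 100 for telnet
```

No per-flow state is needed: the flow that sends nine times as many packets simply receives about nine times as many drops.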

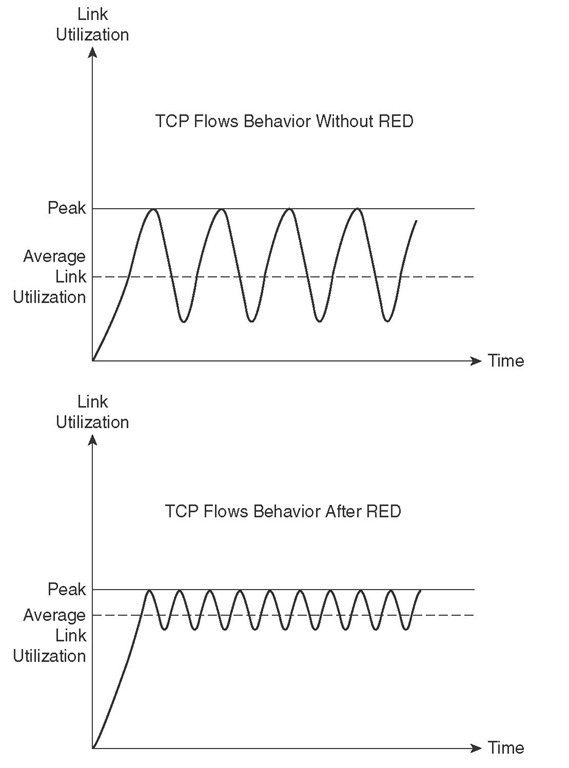

Because RED ends up dropping packets from some but not all flows (expectedly the more aggressive ones), the flows do not all slow down and speed up at the same time, so global synchronization is avoided. As a result, during busy periods link utilization does not keep swinging between too high and too low (as it does with tail drop), which would waste bandwidth. In addition, the average queue size stays smaller. Note that RED is primarily effective when the bulk of flows are TCP flows; non-TCP flows do not slow down in response to RED drops. To demonstrate the effect of RED on link utilization, Figure 5-2 shows two graphs. The first graph shows how, without RED, link utilization fluctuates and its average stays below link capacity. The second graph shows that, with RED, because only some flows slow down, link utilization does not fluctuate as much; therefore, average link utilization is higher.

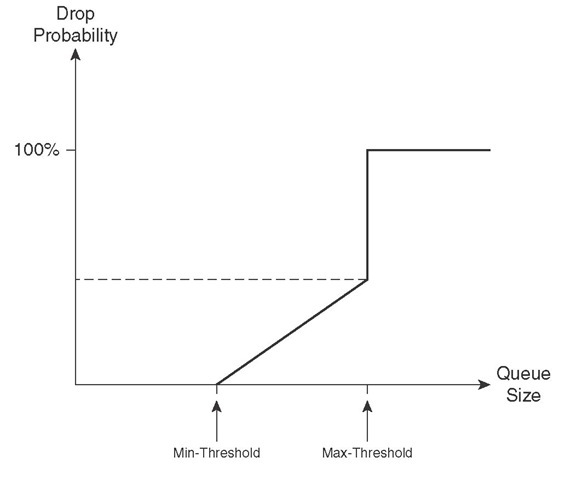

RED has a traffic profile that determines when packet drops begin, how the drop rate changes, and when drops reach their maximum. The queue size and RED's configuration parameters determine its dropping behavior at any given point in time. RED has three configuration parameters: minimum threshold, maximum threshold, and mark probability denominator (MPD). When the queue size is smaller than the minimum threshold, RED does not drop packets. As the queue size grows above the minimum threshold, the drop probability rises with it. When the queue size becomes larger than the maximum threshold, all arriving packets are dropped (tail drop behavior). MPD is an integer that sets the drop probability at the maximum threshold to 1/MPD: RED drops 1 out of every MPD packets when the queue size reaches the maximum threshold, and proportionally fewer while the queue size is closer to the minimum threshold.

Figure 5-2 Comparison of Link Utilization with and Without RED

For example, if the MPD value is set to 10, RED drops one out of ten packets (a 10 percent drop probability) when the queue size is at the maximum threshold; halfway between the minimum and maximum thresholds, the drop probability is 5 percent. Figure 5-3 is a graph that shows a packet drop probability of 0 when the queue size is below the minimum threshold. It also shows that the drop probability increases as the queue size grows. When the queue size exceeds the maximum threshold, the probability of packet drop equals 1, which means that every arriving packet is dropped.
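The RED profile can be written as a piecewise function. This form assumes the behavior Cisco documents for RED, in which the drop probability ramps linearly from 0 at the minimum threshold to 1/MPD at the maximum threshold. Here q is the queue size (WRED uses the average queue size), and min_th and max_th are shorthand for the two thresholds.

$$
p_{\text{drop}}(q) =
\begin{cases}
0, & q < \mathit{min}_{th} \\[4pt]
\dfrac{q - \mathit{min}_{th}}{\mathit{max}_{th} - \mathit{min}_{th}} \cdot \dfrac{1}{\mathit{MPD}}, & \mathit{min}_{th} \le q \le \mathit{max}_{th} \\[4pt]
1, & q > \mathit{max}_{th}
\end{cases}
$$

For instance, with min_th = 20, max_th = 40, and MPD = 10, a queue size of 30 gives (30 − 20)/(40 − 20) × 1/10 = 0.05, and a queue size of 40 gives 0.1.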

Figure 5-3 RED Profile Demonstration

The minimum threshold of RED should not be too low; otherwise, RED starts dropping packets unnecessarily early. Also, the gap between the minimum and maximum thresholds should be large enough to give RED a chance to prevent global synchronization. RED essentially has three modes: no-drop, random-drop, and full-drop (tail drop). When the queue size is below the minimum threshold value, RED is in no-drop mode. When the queue size is between the minimum and maximum thresholds, RED drops packets randomly, and the drop rate increases linearly as the queue size grows. While in random-drop mode, the drop probability is proportional to how far the queue size is above the minimum threshold and inversely proportional to the mark probability denominator. When the queue size grows beyond the maximum threshold value, RED goes into full-drop (tail drop) mode and drops all arriving packets.
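A minimal Python sketch of the three modes, assuming the linear ramp that reaches 1/MPD at the maximum threshold. `red_decision` is a hypothetical helper, and the thresholds 20 and 40 with MPD 10 are borrowed from the precedence 0 to 2 profile in Figure 5-4. Real RED feeds in the average queue size rather than the instantaneous one.

```python
def red_decision(queue_size, min_th, max_th, mpd):
    """Return (mode, drop probability) for one RED profile."""
    if queue_size < min_th:
        return "no-drop", 0.0
    if queue_size > max_th:
        return "full-drop", 1.0
    # Random-drop mode: linear ramp from 0 at min_th up to 1/mpd at max_th.
    return "random-drop", (queue_size - min_th) / (max_th - min_th) / mpd


for q in (10, 30, 40, 45):
    print(q, red_decision(q, min_th=20, max_th=40, mpd=10))
# 10 ('no-drop', 0.0)
# 30 ('random-drop', 0.05)
# 40 ('random-drop', 0.1)
# 45 ('full-drop', 1.0)
```

Raising MPD flattens the ramp (fewer early drops); widening the gap between thresholds stretches it over more queue depth.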

Weighted Random Early Detection

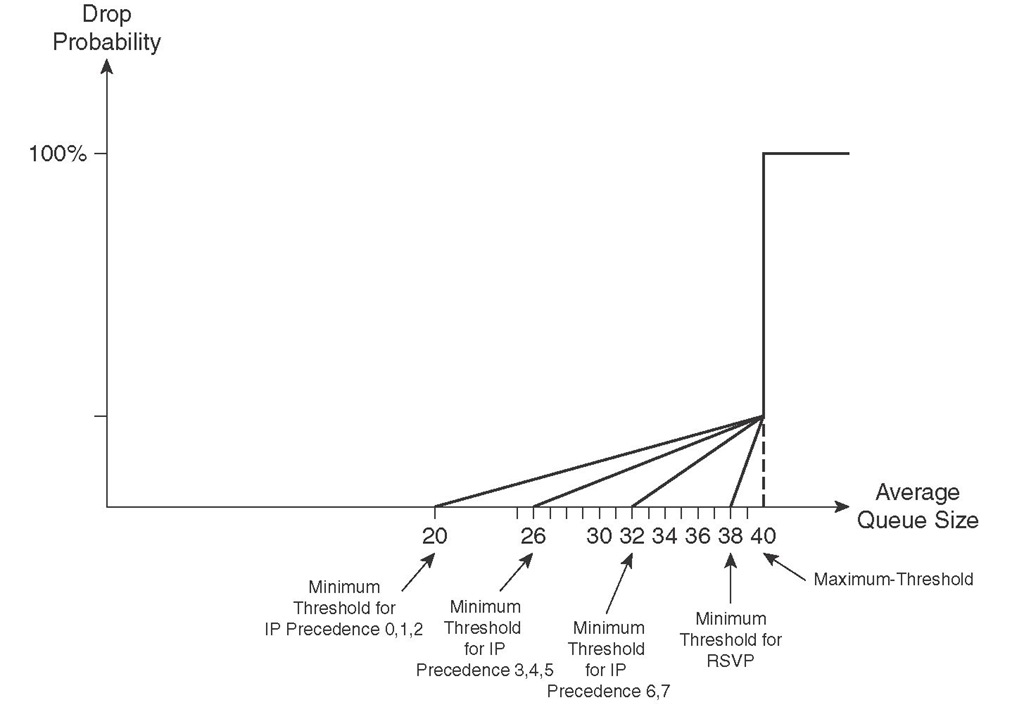

Compared to RED, WRED adds the ability to differentiate between high- and low-priority traffic. With WRED, you can set up a different profile (with a minimum threshold, maximum threshold, and mark probability denominator) for each traffic priority. Traffic priority is based on IP precedence or DSCP values. Figure 5-4 shows an example in which the minimum threshold for traffic with IP precedence values 0, 1, and 2 is set to 20; the minimum threshold for traffic with IP precedence values 3, 4, and 5 is set to 26; and the minimum threshold for traffic with IP precedence values 6 and 7 is set to 32. In Figure 5-4, the minimum threshold for RSVP traffic is set to 38, the maximum threshold for all traffic is 40, and the MPD is set to 10 for all traffic.

Figure 5-4 Weighted RED Profiles

WRED treats RSVP traffic as drop sensitive, so traffic from non-RSVP flows is dropped before RSVP traffic. Non-IP traffic, on the other hand, is considered least important and is dropped earlier than traffic from other flows. WRED is a complement to congestion management, and it is expected to be applied to core devices. However, you should not apply WRED to voice queues. Voice traffic is extremely drop sensitive and is UDP based, so it does not slow down in response to drops. Therefore, you must classify VoIP as the highest-priority traffic class so that the probability of dropping VoIP becomes very low.
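The Figure 5-4 profiles can be expressed as a lookup table. This Python sketch assumes the same linear-ramp drop formula (1/MPD at the maximum threshold, as Cisco documents for RED) and uses a hypothetical `"rsvp"` key for RSVP flows; the 34-packet average queue is an arbitrary example value.

```python
# Profiles from Figure 5-4: priority -> (min threshold, max threshold, MPD).
FIGURE_5_4_PROFILES = {
    **{prec: (20, 40, 10) for prec in (0, 1, 2)},
    **{prec: (26, 40, 10) for prec in (3, 4, 5)},
    **{prec: (32, 40, 10) for prec in (6, 7)},
    "rsvp": (38, 40, 10),
}


def wred_drop_probability(priority, avg_queue, profiles=FIGURE_5_4_PROFILES):
    """Drop probability for a packet, using the profile of its priority."""
    min_th, max_th, mpd = profiles[priority]
    if avg_queue < min_th:
        return 0.0
    if avg_queue > max_th:
        return 1.0
    return (avg_queue - min_th) / (max_th - min_th) / mpd


# At an average queue of 34 packets, lower priorities are dropped more often.
for priority in (0, 3, 6, "rsvp"):
    print(priority, round(wred_drop_probability(priority, 34), 4))
# 0 0.07
# 3 0.0571
# 6 0.025
# rsvp 0.0
```

Because every profile shares the maximum threshold of 40, all priorities converge to full drop at the same point; only the onset and slope of random dropping differ.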

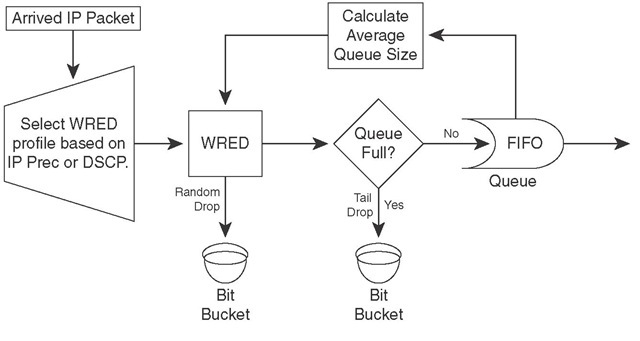

WRED constantly calculates the current average queue length based on the current queue length and the last average queue length. When a packet arrives, its profile is first identified from its IP precedence or DSCP value. Next, based on the packet profile and the current average queue length, the packet can become subject to random drop. If the packet is not randomly dropped, it might still be tail-dropped. A packet that is not dropped is queued (FIFO), and the current queue size is updated. Figure 5-5 shows this process.

Figure 5-5 WRED Operation
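The per-packet steps of Figure 5-5 can be sketched in Python as below. The average queue length uses the exponentially weighted moving average that Cisco documents for WRED, new_avg = old_avg × (1 − 2^−n) + current × 2^−n, with the exponential weighting constant n assumed to be its default of 9. The class, its method names, and the queue limit are hypothetical, and transmission (draining the queue) is not modeled.

```python
import random


class WredQueue:
    """Toy model of WRED's per-packet steps (Figure 5-5); not IOS code."""

    def __init__(self, profiles, queue_limit, weight_exponent=9, seed=0):
        self.profiles = profiles                # priority -> (min_th, max_th, mpd)
        self.queue_limit = queue_limit          # physical queue size in packets
        self.weight = 2.0 ** -weight_exponent   # EWMA weight 2^-n
        self.avg = 0.0                          # average queue length
        self.fifo = []                          # accepted packets, FIFO order
        self.rng = random.Random(seed)

    def update_average(self):
        # avg = old_avg * (1 - 2^-n) + current_queue_length * 2^-n
        self.avg = self.avg * (1 - self.weight) + len(self.fifo) * self.weight

    def arrive(self, priority):
        self.update_average()
        min_th, max_th, mpd = self.profiles[priority]   # 1. identify profile
        if self.avg > max_th:                           # 2. full-drop mode
            return "full-drop"
        if self.avg >= min_th:                          # 3. random-drop mode
            p = (self.avg - min_th) / (max_th - min_th) / mpd
            if self.rng.random() < p:
                return "random-drop"
        if len(self.fifo) >= self.queue_limit:          # 4. queue really full
            return "tail-drop"
        self.fifo.append(priority)                      # 5. enqueue (FIFO)
        return "queued"


wq = WredQueue(profiles={0: (20, 40, 10)}, queue_limit=5)
print([wq.arrive(0) for _ in range(6)])
# ['queued', 'queued', 'queued', 'queued', 'queued', 'tail-drop']
```

In this toy run the average moves slowly (weight 1/512), so a short burst fills the physical queue and is tail-dropped before the average ever reaches the minimum threshold. The smoothing is what makes WRED react to sustained congestion rather than to brief bursts.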

Class-Based Weighted Random Early Detection

When class-based weighted fair queueing (CBWFQ) is the deployed queuing discipline, each queue performs tail drop by default. Applying WRED inside a CBWFQ system yields CBWRED; within each queue, packet profiles are based on IP precedence or DSCP value. Currently, the only way to enforce assured forwarding (AF) per-hop behavior (PHB) on a Cisco router is by applying WRED to the queues within a CBWFQ system. Note that low-latency queuing (LLQ) is composed of a strict-priority queue (policed) and a CBWFQ system. Therefore, applying WRED to the CBWFQ component of the LLQ yields AF behavior, too. The strict-priority queue of an LLQ enforces expedited forwarding (EF) PHB.

Configuring CBWRED

WRED is enabled on an interface by entering the random-detect command in IOS interface configuration mode. By default, WRED is based on IP precedence; therefore, eight profiles exist, one for each IP precedence value. If WRED is DSCP based, there are 64 possible profiles. Non-IP traffic is treated as equivalent to IP traffic with IP precedence 0. WRED cannot be configured on an interface simultaneously with custom queuing (CQ), priority queuing (PQ), or weighted fair queuing (WFQ). Because WRED is usually applied to network core routers, this restriction is rarely a problem. WRED has little performance impact on core routers.
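A small Python sketch of how a packet maps to a profile in the two modes. It assumes the packet's DSCP value is already known; the helper name is hypothetical, and treating non-IP traffic as DSCP 0 in DSCP mode is an assumption (the text states only the precedence 0 rule).

```python
def wred_profile_key(is_ip, dscp=0, dscp_based=False):
    """Return the WRED profile index a packet is matched against (sketch)."""
    if not is_ip:
        # Non-IP traffic is handled like IP precedence 0 (assumed DSCP 0 too).
        return 0
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be 0-63")
    if dscp_based:
        return dscp        # DSCP-based WRED: 64 possible profiles
    return dscp >> 3       # precedence = the 3 high-order bits of the 6-bit DSCP


print(wred_profile_key(True, dscp=46))                   # 5
print(wred_profile_key(True, dscp=46, dscp_based=True))  # 46
print(wred_profile_key(False))                           # 0
```

Shifting off the low three DSCP bits is why precedence-based WRED collapses the 64 code points into just 8 profiles.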

To perform CBWRED, you must enter the random-detect command for each class within the policy map. WRED is precedence based by default, but you can configure it to be DSCP based if desired. Each traffic profile (IP precedence or DSCP based) has default values, but you can modify those values based on administrative needs. For each IP precedence value or DSCP value, you can set a profile by specifying a min-threshold, max-threshold, and mark-probability-denominator. With the hold-queue size in its default range of 0 to 4096, the min-threshold can be as low as 1 and the max-threshold as high as 4096. The default value for the mark probability denominator is 10. The commands for enabling DSCP-based WRED and for configuring the min-threshold, max-threshold, and mark-probability-denominator for each DSCP value and for each IP precedence value within a policy-map class are as follows:

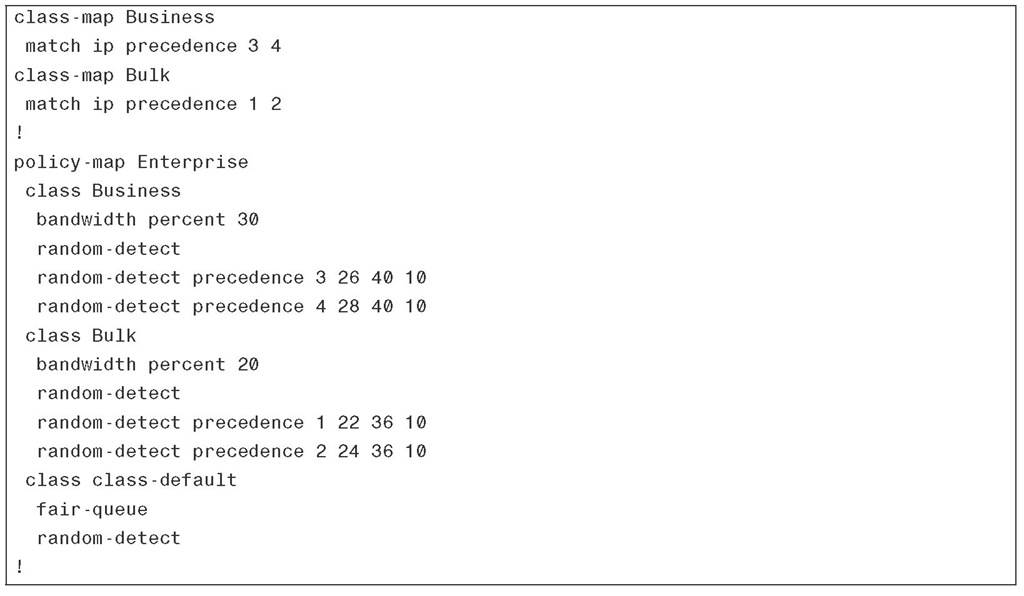
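The per-profile parameters can be captured as a small validated record. This Python sketch encodes the limits given in the text (min-threshold at least 1, max-threshold at most 4096, MPD default 10). The class name and the example thresholds are hypothetical, and requiring the minimum threshold to be strictly below the maximum, and MPD to be at least 1, are assumptions rather than documented IOS checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WredProfile:
    """Parameters of one WRED profile (one precedence or DSCP value)."""
    min_threshold: int
    max_threshold: int
    mark_probability_denominator: int = 10   # default MPD from the text

    def __post_init__(self):
        # Limits from the text: min-threshold >= 1, max-threshold <= 4096.
        # min < max is an assumed sanity check, not a documented IOS rule.
        if not 1 <= self.min_threshold < self.max_threshold <= 4096:
            raise ValueError("expected 1 <= min_threshold < max_threshold <= 4096")
        if self.mark_probability_denominator < 1:
            raise ValueError("mark_probability_denominator must be at least 1")


# Hypothetical per-class table: one profile per IP precedence value.
business_profiles = {
    3: WredProfile(24, 40),        # high drop: random drops start earlier
    4: WredProfile(32, 40),        # low drop: random drops start later
}
```

Omitting the MPD argument picks up the default of 10, mirroring how an unspecified parameter falls back to its default value.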

Applying WRED to each queue within a CBWFQ system changes the default tail-dropping behavior of that queue. Furthermore, within each queue, WRED can have a different profile for each precedence or DSCP value. Example 5-1 shows two class maps (Business and Bulk) and a policy map called Enterprise that references those class maps. Class Business is composed of packets with IP precedence values 3 and 4, and 30 percent of the interface bandwidth is dedicated to it. The packets with precedence 4 are considered low drop packets compared to the packets with a precedence value of 3 (high drop packets). Class Bulk is composed of packets with IP precedence values of 1 and 2 and is given 20 percent of the interface bandwidth. The packets with a precedence value of 2 are considered low drop packets compared to the packets with a precedence value of 1 (high drop packets). The policy map shown in Example 5-1 applies fair-queue and random-detect to the class class-default and provides it with the remainder of interface bandwidth. Note that you can apply fair-queue and random-detect simultaneously to the class-default only.

Example 5-1 CBWRED: IP Precedence Based
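Example 5-1's class structure, as described above, can be mirrored in a Python sketch that maps an IP precedence value to its class and relative drop preference. This models the policy's logic only and is not IOS syntax; the dictionary layout and function name are hypothetical, and the WRED thresholds are left out because the text does not list them.

```python
# Policy map "Enterprise" from Example 5-1, described as data (not IOS syntax).
ENTERPRISE = {
    "Business": {"bandwidth_percent": 30, "precedences": {3, 4}, "low_drop": 4},
    "Bulk": {"bandwidth_percent": 20, "precedences": {1, 2}, "low_drop": 2},
}


def classify(precedence):
    """Return (class name, drop preference) for an IP precedence value."""
    for name, cls in ENTERPRISE.items():
        if precedence in cls["precedences"]:
            drop = "low-drop" if precedence == cls["low_drop"] else "high-drop"
            return name, drop
    # All other traffic lands in class-default (fair-queue plus random-detect).
    return "class-default", None


for prec in range(8):
    print(prec, classify(prec))
```

Within each class, the "high-drop" precedence would be given the lower WRED thresholds so that it is dropped first.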

Remember that, apart from class-default, you cannot simultaneously apply WRED and WFQ to a class. (The WRED random-detect and WFQ queue-limit commands are mutually exclusive.) Whereas WRED has its own method of dropping packets based on per-profile thresholds, WFQ effectively tail-drops packets based on the queue-limit value. Recall also that you cannot apply WRED together with PQ, CQ, or WFQ on an interface.

Example 5-2 shows a CBWRED case that is similar to the one given in Example 5-1; however, Example 5-2 is DSCP based. All AF2s plus CS2 form the class Business, and all AF1s plus CS1 form the class Bulk. Within the policy map Enterprise, class Business is given 30 percent of the interface bandwidth, and DSCP-based random-detect is applied to its queue. For each AF and CS value, the min-threshold, max-threshold, and mark-probability-denominator are configured to form four profiles within the queue. Class Bulk, on the other hand, is given 20 percent of the interface bandwidth, and DSCP-based random-detect is applied to its queue. For each AF and CS value, the min-threshold, max-threshold, and mark-probability-denominator are configured to form four profiles within that queue.

Example 5-2 CBWRED: DSCP Based
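The four profiles per queue in Example 5-2 follow the AF code-point layout of RFC 2597, in which AFxy has the DSCP value 8x + 2y (x is the AF class, y the drop precedence). This Python sketch decodes a DSCP value and assigns it to the Business or Bulk class as described above; the function and set names are hypothetical.

```python
def decode_af(dscp):
    """Return (AF class, drop precedence) for an AF code point, else None."""
    af_class, remainder = divmod(dscp, 8)
    drop_precedence, odd = divmod(remainder, 2)
    if 1 <= af_class <= 4 and odd == 0 and 1 <= drop_precedence <= 3:
        return af_class, drop_precedence
    return None  # not AF, e.g., CS1 (8), CS2 (16), or EF (46)


BUSINESS = {16, 18, 20, 22}  # CS2, AF21, AF22, AF23
BULK = {8, 10, 12, 14}       # CS1, AF11, AF12, AF13


def example_5_2_class(dscp):
    """Class from Example 5-2 that a DSCP value belongs to."""
    if dscp in BUSINESS:
        return "Business"
    if dscp in BULK:
        return "Bulk"
    return "class-default"


print(decode_af(22), example_5_2_class(22))  # (2, 3) Business
```

RFC 2597 defines a higher drop precedence as more likely to be dropped, so within each queue AFx3 would normally receive the lowest minimum threshold and AFx1 the highest.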

In Example 5-2, as in Example 5-1, the fair-queue and random-detect commands are applied to the class-default class. In Example 5-2, however, random-detect is DSCP based, whereas in Example 5-1 the class-default random-detect is based on IP precedence by default.

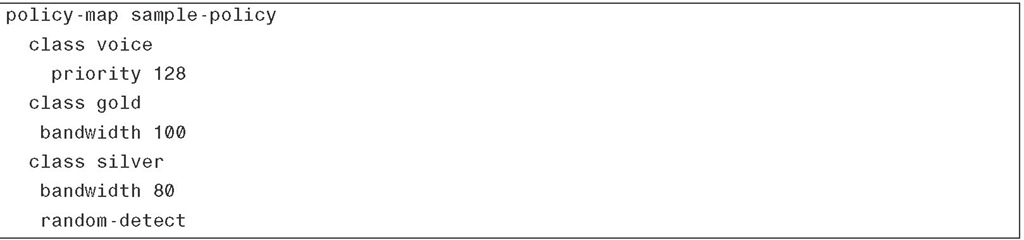

Use the show policy-map interface interface command to see the packet statistics for classes on the specified interface or PVC. (A service policy must be attached to the interface or the PVC.) The counters displayed in the output of this command are updated only if congestion is present on the interface. Note that the show policy-map interface command displays policy information about Frame Relay PVCs only if Frame Relay traffic shaping (FRTS) is enabled on the interface; moreover, ECN marking information is displayed only if ECN is enabled on the interface. Example 5-3 shows a policy map called sample-policy that forms an LLQ. This policy assigns the voice class to the strict-priority queue with 128 kbps of reserved bandwidth, assigns the gold class to a queue from the CBWFQ system with 100 kbps of bandwidth reserved, and assigns the silver class to another queue from the CBWFQ system with 80 kbps of bandwidth reserved and RED applied to it.

Example 5-3 A Sample Policy Map Implementing LLQ

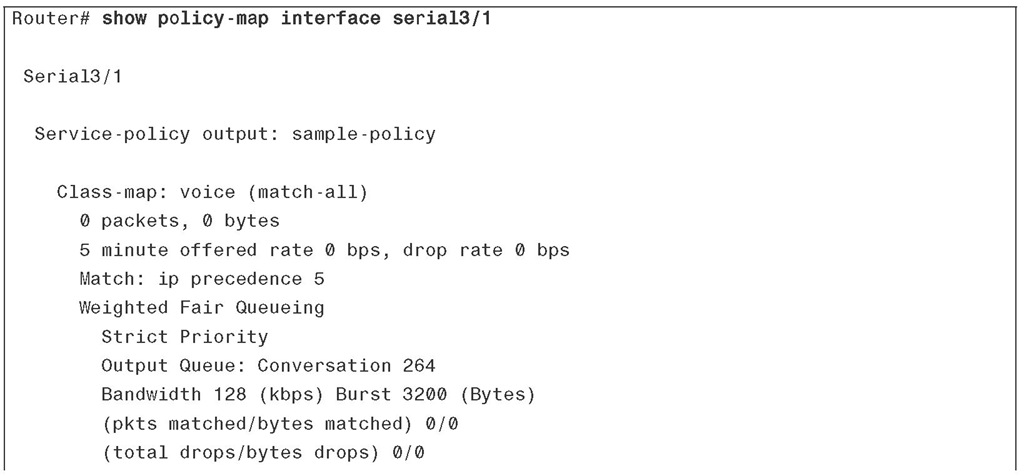

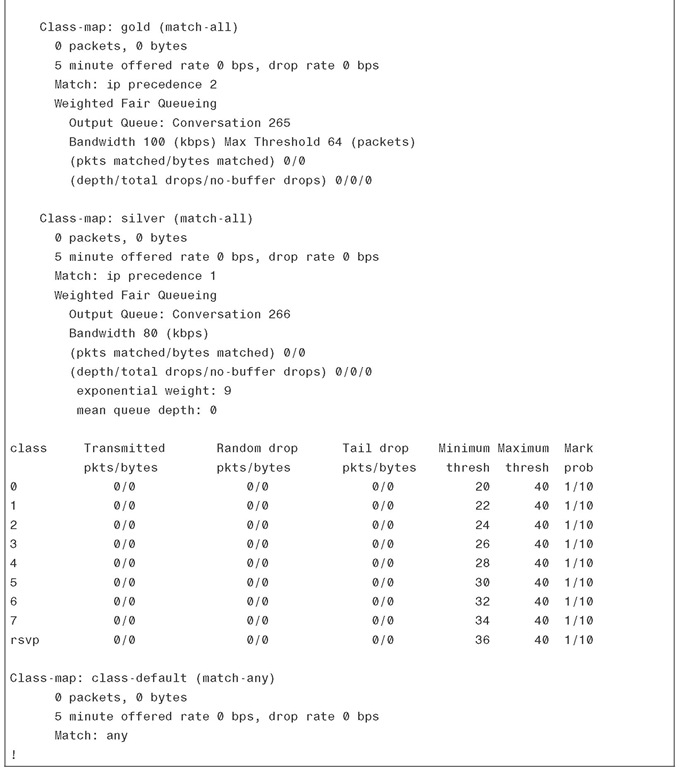

Example 5-4 shows sample output of the show policy-map interface command for the serial 3/1 interface with the sample-policy (shown in Example 5-3) applied to it.

Example 5-4 Monitoring CBWFQ

In Example 5-4, WRED is applied only to the silver class, and it is IP precedence based by default. In the output of the show command for the silver class, you can observe statistics for nine profiles: eight precedence levels plus RSVP.