Statistical methods for the identification of protein-coding regions in prokaryotic genomes have been the main tools for gene annotation since the start of genomic era. The pioneer papers describing gene-recognition algorithms appeared in press in the 1980s (Fickett, 1982; Staden, 1984; Gribskov etal., 1984; Almagor, 1985; Claverie and Bougueleret, 1986; Borodovsky etal., 1986a, b, c; Fichant and Gautier, 1987). These methods were using the so-called intrinsic information, statistical patterns in nucleotide ordering, characteristic for protein-coding and noncoding regions observed in a single DNA sequence. Methods utilizing the so-called extrinsic information embodied in common or similar sequences conserved in evolution and identified by comparison of DNA or proteins of different species were developed later (Gish and States, 1993; Robison etal., 1994). With the fast accumulation of DNA and protein sequences, use of extrinsic information has gained more attention and methods combining both intrinsic and extrinsic information have appeared (Borodovsky etal., 1994; Frishman et al., 1998).

Development of ab initio gene recognition algorithms that use intrinsic information generally follows three steps. At the first step, statistical measures (e.g., G + C composition) that may help distinguish sequence types (e.g., protein-coding vs. noncoding) are determined. At the second step, the statistical models are built for sequences of all types. At the third step, the models are integrated into a statistical pattern recognition algorithm.

In 1992, in a comprehensive review, Fickett and Tung analyzed more than 20 types of statistical measures of protein-coding regions and singled out as the most informative ones the frame-dependent (positional) hexamer frequencies (Fickett and Tung, 1992).

Introduced in 1986, inhomogeneous Markov chain models of a protein-coding region (Borodovsky et al., 1986b) have been a rigorous mathematical framework for using positional oligonucleotide frequencies. This model combined with a homogeneous Markov model of noncoding sequence in a Bayesian algorithm possesses a significant predictive power, as was demonstrated by the GeneMark gene finding program (Borodovsky et al., 1986c; Borodovsky and McIninch, 1993).

The GeneMark algorithm identifies protein-coding regions in both DNA strands by analyzing only one of them. To accomplish this, a Markov model of a DNA sequence complementary to a gene on the opposite strand (“shadow” model) is added to the already-mentioned models of “direct” protein-coding sequence and noncoding sequence. The algorithm outline is as follows. Given the first-order Markov chain model, the probability that a DNA sequence segment, S = {si, s2,… , sn} (n is a multiple of 3), comes from a protein-coding region with s 1 being the first nucleotide of a codon (event E1 or frame 1 setting) is given by the formula P(S\E1) = P 1(s1) * P 1(s2|s1) * P2(s3|s2) * P3(s4|s3) * ••• * P2(s„\sn-1) Here P’(s 1) is the probability for s 1 to be situated in frame i and P’(sk\sk-1) is the transition probability of sk to succeed sk-1 situated in frame i. Similar expressions for P(S\Ei), i = 2 to 7 could be written for the remaining six events, which correspond to sequence S coming from the coding region in frame 2 and frame 3 settings (Ei, i = 2, 3); from a region complementary to a gene in frames 4, 5, and 6 (Ei, i = 4, 5, 6), here the shadow model is used; and from a noncoding region (E7), here the noncoding model is used (and no frame setting is needed). Thus, the determined probabilities P(S\Ei) are used to compute an a posteriori probability for each Ei to be associated with observed

event Ei. It is possible to consider the GeneMark algorithm as an approximation to the forward-backward algorithm, known in the theory of hidden Markov models (HMM) (Rabiner, 1989), which computes the a posteriori probability of a hidden (functional) state underlying a given observed state (a nucleotide) in a particular sequence position (Azad and Borodovsky, 2004a). This interpretation could be unexpected as the forward-backward algorithm is used frequently at a training step for an HMM-based algorithm. Here we certainly deal with the prediction step. Still, we may consider the value of P(S\Ei) computed by GeneMark for a short DNA segment S (within a sliding window) to be an approximation to the value of an a posteriori probability for the hidden state Ei for a nucleotide position in the middle of segment S computed by a rigorous forward-backward HMM algorithm, given the entire model of states and transitions.

Given the notion that genes in prokaryotic genomes are continuous open reading frames (ORFs), though by far not all ORFs are genes, the GeneMark program computes a scoring statistics for each ORF in the genomic sequence. This score is the average value of the a posteriori probabilities of the events Ei (for one and the same i defined by the ORF) for the short subsequences of the ORF in question. If this value is larger than a certain threshold, then the ORF is predicted as a gene.

It can be shown that Markov models of higher orders perform better for gene recognition than the first-order ones. The difficulty though may arise with the high-order model parameter estimation (model training). If the length of DNA sequence available for training is limited, an attempt to derive parameters of Markov models of high order may end up in overfitting of at least a fraction of the model parameters to the training data. However, the attempt to recover the informative parameters of the high-order model and combine them with informative parameters of lower-order models may result in better predictive ability (Jelinek et al., 1980). Such combined (interpolated) models have been used in the Glimmer algorithm (Salzberg et al., 1998; Delcher et al., 1999). Their parameters are defined by recursive equation, PIMM(b\ck) = k(ck) * P(b\ck) + (1 – X(ck)) * PIMM(b\ck-1). Here PIMM(b\ck) is the interpolated transition probability of a nucleotide b to succeed k-mer ck,

P(b\ck) is the transition probability as defined in a regular Markov chain model, and X(ck) is the interpolation parameter corresponding to k-mer ck, 0 < X(ck) < 1. It is reasonable to expect that if the frequency of ck in the training sequence is sufficiently high, then A.(ck) should be close to 1; for low frequency ck, k(ck) should be close to 0. However, accurate estimation of X(ck) is a critical issue and several approaches have been proposed to address it (Salzberg etal., 1998; Potamianos and Jelinek, 1998; Azad and Borodovsky, 2004b).

The Bayesian framework was further generalized by using HMM, which have been used in several prokaryotic as well as eukaryotic gene prediction algorithms developed in the last decade (Krogh etal., 1994; Yada and Hirosawa, 1996; Lukashin and Borodovsky, 1998; Yada et al., 2001; Larsen and Krogh, 2003; Burge and Karlin, 1997; Krogh, 2000; see also Article 14, Eukaryotic gene finding, Volume 7, Article 21, Gene structure prediction in plant genomes, Volume 7, and Article 26, Dynamic programming for gene finders, Volume 7). In an HMM, the gene recognition problem is reformulated as a problem of finding the most likely sequence H = {H\,… , Hn}, where Hi stands for a hidden state, for example protein-coding or noncoding, underlying a given DNA sequence S = {51,… ,sn}. Hidden (functional) and observed (nucleotide) states are connected in an HMM by probabilistic (Markov chain type) dependencies. If HMM structure and parameters are defined, then for a given sequence S, the Viterbi algorithm solves the problem stated above and finds the most likely state sequence H * by maximizing the conditional probability P(H\S) with respect to H.

The first HMM-based gene prediction algorithm, ECOPARSE, was described by Krogh et al. (1994). ECOPARSE and the later developed GeneHacker (Yada and Hirosawa, 1996) are based on a classic HMM, with a hidden state emitting one nucleotide triplet (codon) from the protein-coding state and emitting one nucleotide from noncoding state. In the GeneMark.hmm program (Lukashin and Borodovsky, 1998), a hidden state is assumed to emit a whole sequence of observed states (nucleotides), with length specified by a probability distribution. The homogeneous and inhomogeneous Markov models (earlier utilized in GeneMark) are used as submodels of the main HMM to describe these emitted nucleotide sequences. The HMM architecture of GeneMark.hmm also included hidden states for rare “atypical” (e.g., horizontally transferred) genes, along with states for abundant native “typical” genes. Note that GeneMark.hmm and GeneMark possess complementary properties as the former implements the generalized Viterbi algorithm dealing with the sequence as a whole and the latter approximates the forward-backward algorithm, revealing local features of gene organization. Particularly, the a posteriori probability graphs generated by GeneMark in six reading frames help in detecting frameshifts (local irregularities) in the sequence that mainly occur because of sequencing errors.

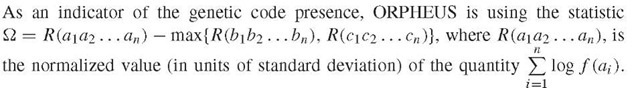

Extrinsic information could be used in gene finding algorithms in several different ways. Early extrinsic algorithms used straightforward similarity search on protein level, that is, BLASTX, to identify ORFs whose protein products score statistically significant hits to known proteins. More recent algorithms combine extrinsic and intrinsic information. For instance, parameters of the gene models in the ORPHEUS program (Frishman etal., 1998) are estimated from ORF sequences whose protein products show significant similarity to known proteins.

Here f (xi) is the frequency of xi codon and (a1… an), (bi… bn), (ci… cn) stand for the sequences of codons in three possible frames. Similar validation of a training set by using extrinsic data is implemented in the recent algorithm EasyGene (Larsen and Krogh, 2003). EasyGene scores sequence S of each ORF by computing a statistics W = log (Psnj) where P(S\M) and P(S\N) are the probabilities of S being generated by HMM of a gene, M, and HMM of noncoding region, N, respectively. A rather unique feature of EasyGene is the assessment of the statistical significance of the computed score. The CRITICA algorithm (Badger and Olsen, 1999) uses both extrinsic and intrinsic approaches to assign scores reflecting protein-coding propensity to a DNA sequence fragment in each of the six possible frames. The score is a sum of two components: a comparative (extrinsic) score based on the relative identities of nucleotides and corresponding potential amino acids and noncomparative (intrinsic) score based on dicodon bias in real protein-coding frames. Shibuya and Rigoutsos (2002) developed Bio-Dictionary, a database of patterns called “seqlets” extracted from protein sequence databases. If the number of seqlets matching to the ORF protein product exceeds a predetermined threshold, the ORF is predicted as a gene. This approach was implemented in a gene prediction algorithm, called Bio-Dictionary Gene Finder (BDGF) (Shibuya and Rigoutsos, 2002). Finally, one of the most extrinsic information oriented programs GeneHacker Plus (Yada et al., 2001), an improved version of GeneHacker (Yada and Hirosawa, 1996), has an ability to include the results of similarity searches as restrictions in the Viterbi algorithm.

The exact determination of gene start position is an important part of prokaryotic gene identification task. The algorithms mentioned above, ECOPARSE, EasyGene, GeneHackerPlus, GeneMark.hmm, and Glimmer use ribosome binding site (RBS) models to improve accuracy of gene start prediction. A two-component RBS model is included in the HMM architecture of GeneMark.hmm and its parameters along with other model parameters could be derived by GeneMarkS (Besemer et al., 2001), the unsupervised training program implementing the expectation-maximization procedure. This procedure iterates predictions and model derivation to the point where the gene predictions made with updated models coincide with gene prediction in the previous step (i.e., till the convergence is reached). The convergence is facilitated by use of heuristic pseudocounts at the initial step of the procedure (Besemer and Borodovsky, 1999). Note that several of the above-mentioned algorithms have extensions for deriving models by unsupervised learning procedure, which are useful if one has to start analysis of a new genome. Assessment of the performance of gene finding algorithms has been far from simple issue due to the lack of sufficient number of experimentally validated genes. Even an ability of a program to reproduce correctly most of the genes annotated in GenBank will not provide conclusive evidence of the algorithm’s high predictive power unless the test on experimentally validated genes would support this statement. Two parameters are usually used to measure the prediction accuracy: the percentage of genes in the test set that are correctly identified by the algorithm (Sensitivity, Sn) and the percentage of predictions that match the genes in the test set (Specificity, Sp).

All above-mentioned programs have shown high performance with Sn and Sp values higher than 90% in majority of the tests that were done with regard to GenBank annotated sequences. Still there is a need to carefully design test sets for various prokaryotic species and assess the performance if one is interested in this issue in depth.

Currently, the largest number of experimentally validated genes is known for Escherichia coli. When 195 E. coli genes with their starts determined by N-terminal protein sequencing were used for accuracy tests, both GeneMarkS and EasyGene correctly identified above 93% of the gene starts (Larsen and Krogh, 2003). Similar results were observed when the same algorithms were tested on a larger set comprising 850 E. coli genes with validated start positions (Rudd, 2000).

The rather short history of development of the prokaryotic gene identification algorithms presents a picture of significant accomplishments. However, the perfectionist goal to identify by computer all protein-coding genes in a given genome without false-positives remains a challenge. The main part of this challenge is the flawless detection of short genes. Identification of atypical or laterally transferred genes is a closely related problem as genes from this group are frequently escaping detection. Further improvement of gene start prediction is another issue that remains to be addressed. However, continuation of strong efforts toward integrating intrinsic and extrinsic approaches should bring us closer to a point when the prokaryotic gene identification problem would be declared completely solved.