The nineteenth century saw profound advances in knowledge in several important areas. Geologists and biologists had both come to realize that vast spans of time were needed to explain the changes observed in fossils and rock formations. Geologists had introduced the principle that strata are laid down in the order in which they formed, an idea readily translated to archaeology, where finds in lower layers were assumed to be older.

The new ideas of biological evolution advanced by Charles Darwin in his 1859 book On the Origin of Species gave another sense of time. A couple of centuries earlier, great scientists such as Isaac Newton had readily accepted, on the basis of a particular interpretation of the biblical story, that the world began some six thousand years ago. Darwin, by contrast, left scientists grappling with the idea that humans had developed from "lower" creatures over a very long period of time, which meant that there was a long prehistory to be examined and understood.

By the end of the nineteenth century, archaeologists had recognized a progression of technologies in their artifact collections, and the contexts of the finds suggested that human populations had moved from stone tools, through the use of copper, to bronze, and then iron. Archaeologists of the day, however, had little or no evidence with which to date these changes or to gauge the length of the periods involved.

The history of the Near East and Middle East was fairly well understood in the late nineteenth and early twentieth centuries, largely because these literate societies had kept records of the reigns of kings and of major events. This meant that great works such as the pyramids of Egypt could be dated reasonably well, as could the introduction of metallurgical technologies in different parts of the region. The region was considered to be the cradle of civilization, from which knowledge of building techniques and metalworking spread gradually outward, through trading links and other contacts, to displace the crude technologies of prehistoric Europe. This model was known as diffusion.

Some did argue that, in a way that parallels evolution in the biological world, the technologies might have evolved in different areas and spread more locally; with so little dating evidence, however, this idea was almost impossible either to support or to reject.

To construct a meaningful account of the development of human populations in any part of the world, a reliable dating framework is essential. With no written records for the barbarian world, the only way to build such a framework was by cross-reference to areas where the historical chronology was known. Typological dating (dating by analogy with other artifacts of known date) can easily become a circular argument. In addition, interpretations were colored by the idea that technology had diffused gradually westward from the ancient East, perhaps with a major jump to the Iberian Peninsula (modern Spain and Portugal), which then acted as a secondary center of diffusion. Because a time lag was generally allowed for each region to take up the new technologies, sites farther west were assumed to be correspondingly later.

Archaeological thought continued to rest on this widely accepted model, in which civilization spread across Europe from the East and dates in the East were well established through the historical record, until the scientific advances of the second half of the twentieth century.

EARLY RADIOCARBON DATING

In order to appreciate the impact of the information that has been provided by radiocarbon dating on our understanding of prehistory, it is first necessary to have a brief understanding of the theory and practice of the methodology.

Carbon occurs naturally in three forms, or isotopes: ¹²C, ¹³C, and ¹⁴C. Two of these are stable, but ¹⁴C, sometimes known as carbon 14, is radioactive and decays over time. Carbon 14 is produced when neutrons generated by cosmic rays strike nitrogen atoms in the upper atmosphere. It readily combines with oxygen to form ¹⁴CO₂, radioactive carbon dioxide, which mixes throughout the atmosphere.

All living things take in some of this material while they are alive, either as gas from the atmosphere or dissolved in water, or, in the case of animals, as part of their diet of plants or other animals. The amounts of radioactive carbon are very small indeed, something like one part for every million million parts of nonradioactive carbon. As soon as an organism dies, however, it stops taking up carbon 14, and the carbon 14 it already contains decays slowly, falling to half the original amount in about 5,730 years. If one knows how much radioactive carbon the organism contained while it was alive, and can measure the tiny amount left in the organic matter today, then, given the rate of decay, it is theoretically possible to calculate the time that has elapsed since the organism died.
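The calculation just described can be sketched in a few lines of Python. This is a minimal illustration, assuming that the starting level of carbon 14 is known and that radioactive decay is the only process that has changed it (no contamination or fractionation); the function name `age_from_fraction` is hypothetical.

```python
import math

HALF_LIFE_YEARS = 5730.0  # modern value of the carbon 14 half-life


def age_from_fraction(fraction_remaining: float,
                      half_life: float = HALF_LIFE_YEARS) -> float:
    """Return the years elapsed since death, given the fraction of the
    original carbon 14 still present in the sample.

    Solves N = N0 * exp(-lambda * t) for t, with lambda = ln(2) / half_life.
    Assumes the original level N0 is known and that decay alone has acted.
    """
    if not 0.0 < fraction_remaining <= 1.0:
        raise ValueError("fraction_remaining must be in (0, 1]")
    decay_constant = math.log(2) / half_life  # fraction decaying per year
    return math.log(1.0 / fraction_remaining) / decay_constant


print(round(age_from_fraction(0.5)))   # 5730: one half-life
print(round(age_from_fraction(0.25)))  # 11460: two half-lives
```

Each halving of the surviving carbon 14 adds one half-life to the age, which is why the relationship is logarithmic rather than linear.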

The measurement is made by converting the carbon in the sample into a liquid or gas and counting the radioactive decays it produces over a set period. This brilliant idea for a new dating technique was first applied by Willard Libby in 1949 and was quickly recognized by archaeologists as a way of establishing the missing chronological framework for their findings. Yet it was some time before most archaeologists were prepared to accept the dates being produced, and they had several reasons to be skeptical about the results.

First, contamination of the sample is a serious potential problem, especially since one is dealing with such small quantities of carbon 14. For example, a minute drop of oil (ancient carbon), small amounts of fungus growing on the organic remains, or even flakes of skin from the collector of the sample (modern carbon) could seriously affect the results.

The so-called half-life of carbon 14, the time it takes for a quantity to decay to half its original amount, was initially calculated by Libby as 5,568 years, whereas it is now known to be closer to 5,730 years. Also, because the amounts being measured are so small, minuscule errors in measuring the radioactive material present in a sample have a proportionally very large effect on the result.
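The effect of the revised half-life can be shown with a short sketch. Because the computed age is directly proportional to the half-life used, ages calculated with Libby's figure come out about 3 percent younger than those calculated with the modern value (the ratio is 5,730/5,568, roughly 1.029), whatever the measured fraction. Radiocarbon laboratories in fact still report "conventional" ages using Libby's value by agreement, leaving the calibration step to absorb the difference. The measured fraction below is hypothetical.

```python
import math

LIBBY_HALF_LIFE = 5568.0   # value used in Libby's early work
MODERN_HALF_LIFE = 5730.0  # value accepted today


def age(fraction_remaining: float, half_life: float) -> float:
    """Years since death from the surviving fraction of carbon 14."""
    return half_life / math.log(2) * math.log(1.0 / fraction_remaining)


measured_fraction = 0.6  # hypothetical measurement
libby_age = age(measured_fraction, LIBBY_HALF_LIFE)
modern_age = age(measured_fraction, MODERN_HALF_LIFE)

print(f"Libby half-life:  {libby_age:.0f} years")
print(f"Modern half-life: {modern_age:.0f} years")
print(f"Ratio: {modern_age / libby_age:.3f}")  # 1.029 for any fraction
```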

Another potential problem is that although it was initially assumed that all organisms took in the same mix of radioactive and nonradioactive carbon, it was later found that a process known as "fractionation" occurs, whereby different organisms take up different isotopes in varying proportions.

Finally, one of the original assumptions behind the carbon-14 dating process was that the amount of radioactive carbon in the atmosphere is likely to have been fairly constant throughout the last fifty thousand to sixty thousand years—the maximum period during which radiocarbon dating generally can be applied, because after this time the amounts become too small to be measured with an acceptable degree of accuracy.
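The practical limit mentioned here follows directly from the decay law. The sketch below (a simple illustration using the 5,730-year half-life; the function name is hypothetical) computes the fraction of the original carbon 14 left after a given time.

```python
HALF_LIFE_YEARS = 5730.0


def fraction_remaining(years: float, half_life: float = HALF_LIFE_YEARS) -> float:
    """Fraction of the original carbon 14 left after `years` of decay."""
    return 0.5 ** (years / half_life)


for years in (5_730, 20_000, 50_000, 60_000):
    print(f"{years:>6} years: {fraction_remaining(years) * 100:.3f} percent left")
```

After 50,000 years only about a quarter of one percent of the original carbon 14 remains, and after 60,000 years less than a tenth of one percent, which is why such residues are so hard to measure with acceptable accuracy.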

As each of these problems was addressed, through greater understanding of the theory behind the method, better protocols for the collection, submission, and analysis of samples, and improvements in the measuring equipment, the technique gained wide acceptance, and Willard Libby was awarded the Nobel Prize in Chemistry in 1960.

Colin Renfrew refers to this period, when the first dates were coming out, as the "first radiocarbon revolution." But even as carbon-14 dating gained acceptance, some surprising results emerged concerning dates for early agriculture and settlement. Dates from Jericho suggested settlement around six thousand years ago, about fifteen hundred years earlier than expected (subsequent analyses have set the foundation of pre-pottery Jericho at around 7000 b.c.). Dates for the European Neolithic were coming out around a thousand years earlier than the accepted wisdom of the time. Radiocarbon dates for artifacts from Egypt and Mesopotamia, for which a sound historical chronology already existed, apparently differed from the historical dates by a few hundred years, whereas many of the dates emerging from prehistoric sites in Europe suggested that these sites were far older than had been thought possible. The many potential sources of error in radiocarbon dating continued to make it easy to argue that the whole method was flawed.

DENDROCHRONOLOGY

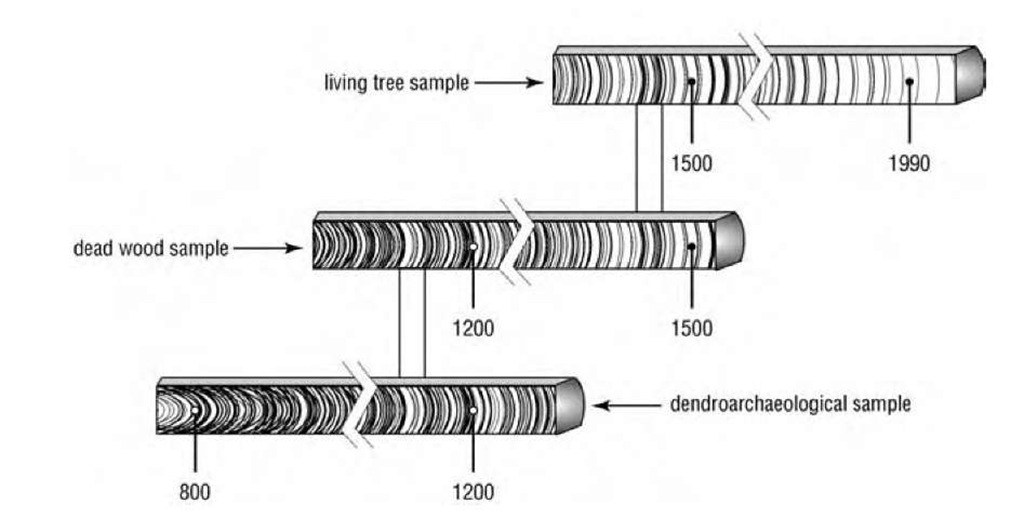

The next real breakthrough in building a dating framework for the prehistory of the barbarian world was the availability of precisely dated wood samples, which allowed the radiocarbon timescale to be tested independently. Dendrochronology, or tree-ring dating, is based on the fact that trees of the same species, growing over a wide geographical area and subject to the same weather conditions throughout their growth, produce similar series of ring widths that can be cross-matched (fig. 1). Although every tree reflects its own unique circumstances in its rings, there is generally enough climatically induced "signal" that, if the ring series is long enough, it can be matched to others that grew during the same period. If one starts with living or recently felled trees, each ring can be assigned a calendar year. Individual trees may have missing rings or apparent double rings, but these can usually be detected by cross-matching against many other trees of the same species.

By finding older sources of wood, either preserved in deposits or used in archaeological contexts, it is possible to match the outermost rings of this older wood with the innermost rings of the dated material, and extend the chronology back in time. By successive overlapping of older and older material, long chronologies, over thousands of years, can be produced.
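The matching and extension process can be sketched as follows. This is a deliberately simplified illustration: it slides an undated ring-width series along a dated master series, scores each possible overlap with a plain Pearson correlation, and takes the best-scoring position. Working dendrochronologists first standardize the ring widths and use more robust statistics, so treat this only as a sketch of the idea. The ring widths are synthetic, and the calendar year 1900 and the helper names are hypothetical.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)


def crossdate(master, sample, min_overlap=20):
    """Slide `sample` along `master` and return (offset, r) for the best match.

    `offset` is the index in `master` at which sample[0] falls. A negative
    offset means the sample starts before the master, so it extends the
    chronology back in time.
    """
    best_offset, best_r = None, -1.0
    for offset in range(min_overlap - len(sample), len(master) - min_overlap + 1):
        m0, s0 = max(offset, 0), max(-offset, 0)
        n = min(len(master) - m0, len(sample) - s0)  # length of the overlap
        r = pearson(master[m0:m0 + n], sample[s0:s0 + n])
        if r > best_r:
            best_offset, best_r = offset, r
    return best_offset, best_r


# Synthetic demonstration (made-up ring widths, not real data).
rng = random.Random(42)
climate = [rng.uniform(0.5, 1.5) for _ in range(120)]  # shared yearly signal
MASTER_FIRST_YEAR = 1900  # hypothetical calendar year of the master's first ring
master = climate[50:120]  # dated series from living trees, 1900-1969
old_beam = [w + rng.gauss(0, 0.05) for w in climate[0:80]]  # undated; truly 1850-1929

offset, r = crossdate(master, old_beam)
print(f"offset {offset}, r = {r:.2f}, beam begins in {MASTER_FIRST_YEAR + offset}")
```

The best match places the beam's first ring 50 years before the master begins, so its outer rings overlap the master's inner rings and the chronology is extended back to 1850.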

Dendrochronology developed rapidly at the start of the twentieth century, particularly in the United States with the work of A. E. Douglass (1919). Once Charles Ferguson had developed, from the mid-1960s, a bristlecone pine chronology extending back several thousand years (1969), and Bernd Becker (1981) and Michael Baillie and colleagues (1983) had produced long oak chronologies in the 1980s, wood samples of precisely known date from a wide geographical area could be subjected to radiocarbon analysis. As early as 1967, H. E. Suess produced a graph that allowed radiocarbon dates to be corrected for the fluctuations revealed by tree-ring samples, and this approach to establishing chronology developed rapidly.

If the amount of carbon 14 in the atmosphere had remained constant, and if conditions of preservation had not affected the amount of radioactive carbon in different samples to different degrees, then a plot of carbon 14 content against time (that is, against the calendar date of each wood sample as determined by dendrochronology) would show a simple relationship: a smooth decay curve.

The results actually obtained show that the amount of carbon 14 in the atmosphere has fluctuated considerably at different periods and that these changes can occur rapidly, over a few years or decades, as well as over centuries or millennia. This variation is thought to result mainly from changes in the strength of the Earth's magnetic field and in solar activity, both of which affect the rate at which cosmic rays produce carbon 14.

This means that if one simply draws a decay curve and reads from it the date corresponding to the amount of carbon 14 found in a sample, the result may be a long way from the actual date. In fact the curve has many "wobbles," so that the same amount of carbon 14 in a sample could correspond to more than one calendar date. By the late 1980s these fluctuations had been well documented by Minze Stuiver and Gordon Pearson, and it became possible to state more precisely, in statistical terms, the probable date range of a sample. Stuiver and Pearson's later curve (1993) has become the standard against which most radiocarbon determinations back to about 6000 b.c. have been calibrated.
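Why a single radiocarbon measurement can correspond to several calendar dates can be shown with a toy curve. The calibration points below are invented for illustration (they are not Stuiver and Pearson's data) and include a deliberate wobble; the hypothetical function simply finds every place where a straight-line interpolation of the curve crosses the measured radiocarbon age.

```python
# Invented calibration points: (calendar year b.c., radiocarbon age b.p.).
# NOT real calibration data; the numbers include a deliberate "wobble".
TOY_CURVE = [
    (1700, 3400), (1680, 3380), (1660, 3350), (1640, 3340),
    (1620, 3345), (1600, 3360), (1580, 3355), (1560, 3330),
    (1540, 3320), (1520, 3290), (1500, 3260),
]


def calendar_intercepts(c14_age, curve):
    """Return every calendar year at which the interpolated curve equals c14_age."""
    hits = []
    for (year1, age1), (year2, age2) in zip(curve, curve[1:]):
        if age1 == age2:
            continue  # flat segment: skipped in this simple sketch
        if min(age1, age2) <= c14_age <= max(age1, age2):
            frac = (c14_age - age1) / (age2 - age1)
            year = year1 + frac * (year2 - year1)
            # A value exactly on a shared point is found twice; keep it once.
            if not any(abs(year - h) < 1e-9 for h in hits):
                hits.append(year)
    return hits


print([round(y, 1) for y in calendar_intercepts(3352, TOY_CURVE)])
# [1661.3, 1610.7, 1577.6]: one measurement, three possible dates
print([round(y, 1) for y in calendar_intercepts(3290, TOY_CURVE)])
# [1520.0]: only one date on this part of the curve
```

Where the curve falls steadily, a measurement maps to one calendar date; across the wobble, the same measurement is consistent with several.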

This high-precision dating requires far more accurate measurement of the carbon 14 in the sample, which depends on more careful sample preparation and longer counting periods; such improvements inevitably increase costs. Because the statistical uncertainty of a count falls only with the square root of the number of decays recorded, a tenfold improvement in precision requires one hundred times the counting time. It is not always appropriate to spend these resources on samples if, for instance, all that is needed is the broad relative dating of several samples in a sequence. A situation therefore emerged in which one could obtain a "routine date" or a "high-precision date," depending on the questions to be answered.
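The square-root rule behind the cost of high precision can be checked numerically. The sketch assumes, as is standard for radioactive decay, that the number of decays counted follows Poisson statistics, so the one-standard-deviation uncertainty of N counts is √N. It also converts that relative uncertainty into years using the decay law (Δt ≈ τ·ΔN/N, where τ = half-life ÷ ln 2); the function names are hypothetical.

```python
import math

HALF_LIFE_YEARS = 5730.0
MEAN_LIFE_YEARS = HALF_LIFE_YEARS / math.log(2)  # about 8,267 years


def relative_error(counts: int) -> float:
    """One-standard-deviation relative uncertainty of a Poisson count."""
    return 1.0 / math.sqrt(counts)


def age_error_years(counts: int) -> float:
    """Approximate +/- error in years caused by counting statistics alone."""
    return MEAN_LIFE_YEARS * relative_error(counts)


def counting_time_factor(precision_gain: float) -> float:
    """How many times longer one must count to shrink the error by precision_gain."""
    return precision_gain ** 2


for counts in (10_000, 40_000, 1_000_000):
    print(f"{counts:>9} counts: +/- {age_error_years(counts):.0f} years")

print(counting_time_factor(10))             # 100: tenfold precision, 100x the counting
print(round(counting_time_factor(1.1), 2))  # 1.21: a 10 percent gain needs about 21 percent more
```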

In the late 1970s a further advance in radiocarbon dating was made with the introduction of accelerator mass spectrometry (AMS). In this method, the carbon 14 atoms present in the sample are counted directly by mass spectrometry, rather than by counting the number of radioactive decays in a given time period. AMS dating has reduced the associated error terms to around plus or minus sixty to eighty years in most cases.

CALIBRATION

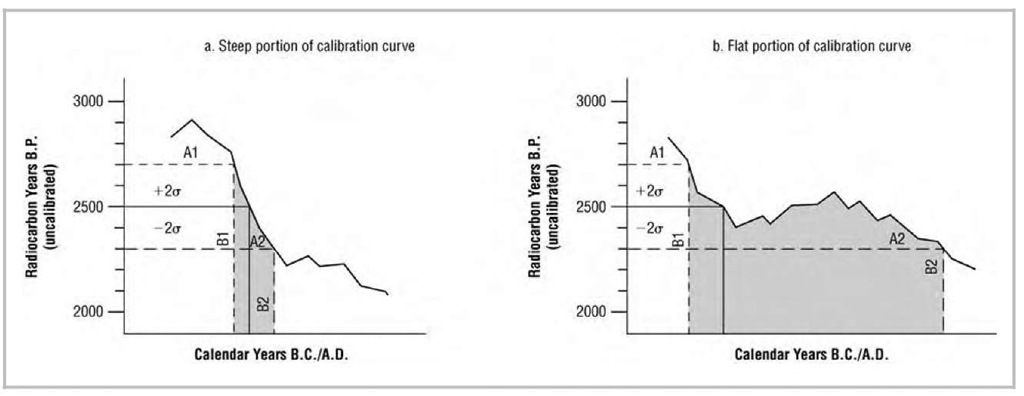

Once a radiocarbon age determination has been produced, it is generally converted into a calibrated age by reference to a calibration curve based on carbon-14 determinations of dendrochronologically dated wood. Such curves plot radiocarbon content against calendar years, together with the associated error terms, which vary from period to period. A very basic understanding of statistics is necessary here. An uncalibrated age is quoted with its possible error expressed as one standard deviation from the mean: for example, 2500±100 b.p. ("before present," conventionally taken as a.d. 1950). To be about 95 percent confident (the normal standard for most scientific studies) that the calibrated date lies within the range quoted, one must take a range of two standard deviations: that is, 2500±200, or 2700-2300 years b.p. If the upper and lower limits of this uncalibrated range are then plotted on the calibration curve, they can be converted into calendar years, which may give a broader or narrower date range depending on the shape of the curve at that point.
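The statistical step in this example can be checked with a short sketch, assuming the quoted error behaves like a normal (Gaussian) distribution. It reproduces the two-standard-deviation range and shows where the "95 percent" figure comes from: strictly, ±2σ covers about 95.4 percent of a normal distribution, and ±1.96σ covers 95.0 percent. Reading the resulting limits off a calibration curve is a separate step not shown here, and the function names are hypothetical.

```python
import math


def sigma_range(age_bp: float, one_sigma: float, k: float = 2):
    """Return the (younger, older) limits of a +/- k standard deviation range."""
    return age_bp - k * one_sigma, age_bp + k * one_sigma


def coverage(k: float) -> float:
    """Probability that a normally distributed value lies within k sigma of the mean."""
    return math.erf(k / math.sqrt(2))


younger, older = sigma_range(2500, 100, k=2)
print(younger, older)            # 2300 2700
print(round(coverage(1), 3))     # 0.683
print(round(coverage(2), 3))     # 0.954
print(round(coverage(1.96), 3))  # 0.95
```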

Apart from the dating of human artifacts, the development of long dendrochronologies has allowed environmental factors to be dated, giving important background information to the human story. Dendroclimatology, the extraction of climatic information from the tree-ring series, is a well-established and growing area of tree-ring work.

Dendrochronology has itself provided dates of great importance, for example the tree-ring event of 1628 b.c. first described by Valmore LaMarche and Katherine Hirschboeck (1984) and discussed at length by Baillie in A Slice through Time (1995). The eruption of Santorini (also known as Thera) took place in the Bronze Age and would have had effects throughout the Aegean. The precise dating of this eruption has implications for interpreting several prehistoric events in the region, and it has often been proposed as the most likely cause of the end of Minoan civilization on Crete. This question was clarified when an ash layer identified as coming from the eruption was found stratified between two phases known as LM1A and LM1B, showing that the eruption preceded the end of Minoan civilization. LM1A appears to end at Akrotiri with the eruption, and the end of LM1B is traditionally placed at around 1450 b.c.

Fig. 1. Cross-dated wood samples overlap in time. Successive overlapping of older tree-ring sequences allows long chronologies to be built. In practice, many wood samples represent each year of the chronology.

Some scientists believed, on the basis of links between Aegean artifacts and the established Egyptian chronology, that the eruption (presumably marking the end of LM1A) could not be placed earlier than 1550 b.c. When a tree-ring event first suggested a possible date in the seventeenth century b.c., however, other workers were able to reconcile their interpretations of the archaeology with this date. The Santorini eruption brings together several strands of scientific dating (tree rings, radiocarbon dating, and ice cores) as well as traditional linkages based on stylistic similarities between objects.

Radiocarbon analysis has been carried out on many samples of short-lived organic matter, such as seeds charred by the eruption. Even after calibration, however, the resulting dates are spread too widely to distinguish clearly between a seventeenth- and a sixteenth-century b.c. date. In fact, the eruption falls on one of those parts of the radiocarbon calibration curve where it is not possible to distinguish between 1628 b.c. and 1530 b.c., because the curve has a "wobble" during this period (fig. 2). In this time frame, collecting more and more radiocarbon samples to date a single event does not make the actual date any clearer.

Layers in ice cores also correspond approximately to annual accumulations and have been used as a dating tool, with the added advantage that acidity peaks in the ice have been found to coincide with ash deposits from volcanic eruptions. An acidity layer corresponding to an eruption has been dated to 1645±20 b.c. This range (1665-1625 b.c.) is remarkably close to, and indeed includes, the 1628 b.c. event noted in two different tree-ring sequences from widely separated geographical areas.

No one can prove that these two markers represent the same event, and no one can yet prove that the event in question is the eruption of Santorini. However, no other candidate eruptions have yet been identified, and something must have caused both observations.

The ice core evidence, and the amount of sulfur released by Santorini that would have caused the acidity peak, have been the subject of much debate. The radiocarbon dates for the event show a spread that does not help to pin down the actual date. Egyptian written records provide only negative evidence: were the Santorini eruption really in the mid-sixteenth century b.c., one might reasonably expect it to have been recorded, but no such record has been found. Baillie makes a strong case for relating the tree-ring date to Santorini and leaves us with the thought that if it does not record that event, then some other major event, as yet unrecognized, must have caused the decline in tree-ring widths over North America and Ireland.

THE COLLAPSE OF TRADITIONAL THINKING ON PREHISTORY

Tree-ring calibration of the radiocarbon timescale removed the doubts lingering in some minds about the reliability of the dates being produced and brought in a whole new raft of dates for both the Near East and Europe. More significant than the dates themselves, however, was the realization that came from having large numbers of accurate dates. Although the established historical framework for the ancient East remained largely unaltered, most dates for significant developments in Europe, such as the introduction of stone buildings or monuments and of metalworking, turned out to be far earlier than most archaeologists had expected. Whereas the great pyramids of Egypt had always been considered among the oldest man-made stone buildings on Earth, dating back to perhaps 2700-2500 b.c., it now emerged that the megalithic tombs of western Europe were older than either the pyramids or the round tombs of Crete, both of which had been considered their precursors. Newgrange in Ireland dates to about 3200 b.c. Similarly, it can now be shown that copper was being worked in the Balkans several centuries before a comparable level of development emerged in the Aegean, the region once thought to be the source from which this skill was carried westward.

The whole idea of diffusion from the East bringing civilization to western Europe was found to be wrong. Colin Renfrew recognized what he called a "chronological fault line," with the Aegean and eastern Mediterranean on one side and western Europe on the other. Areas to the south and east of the line have their dates little altered by tree-ring-based radiocarbon calibration, whereas those to the north and west are pushed several centuries earlier.

Continuing the analogy with geology, the strata and cultures once thought to lie at the same level before radiocarbon dating were shifted in relation to each other, with the western European layers becoming much earlier by comparison, while their dating relative to one another within each area remained the same. So the "layers" of the Late Neolithic in the Iberian Peninsula, for example, used to be matched with the Early Bronze Age in the Aegean, but now match at a similar time level. Thus all the earlier work relating changes and sites to each other within each of these areas remains valid; only the associations across the "fault line" have to be reconsidered.

OTHER DATING METHODS

The closing decades of the twentieth century saw the development of a range of other specialist dating methods. Some of these are better suited to dating rocks and remains beyond the normal useful range of radiocarbon dating. Methods that are of relatively limited use in the time frame considered here, are not readily applicable to archaeological remains, or are still considered to be under development include the following.

Fig. 2. Hypothetical radiocarbon calibration curves derived from tree rings.

Of far more value for prehistoric archaeological remains are thermoluminescence (TL), optically stimulated luminescence (OSL), and obsidian hydration dating. The last of these is restricted to obsidian finds, which form a hydration layer on their surface when exposed to the air; the thickness of this layer increases with the length of exposure.
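The text says only that the hydration layer thickens with exposure. A model commonly used for this (an assumption here, not stated above) treats hydration as a diffusion process, so that the square of the rim thickness grows in proportion to time. The rate constant depends on the chemistry of the obsidian source and on temperature and must be calibrated separately; the value and function name below are invented purely for illustration.

```python
def hydration_age_years(rim_microns: float, rate_um2_per_kyr: float) -> float:
    """Estimate exposure time from hydration rim thickness.

    Assumes the diffusion model x**2 = k * t, with x in micrometres and
    k in square micrometres per thousand years.
    """
    if rate_um2_per_kyr <= 0:
        raise ValueError("rate must be positive")
    return 1000.0 * rim_microns ** 2 / rate_um2_per_kyr


HYPOTHETICAL_RATE = 4.0  # um^2 per 1,000 years; invented, not a real calibration

for rim in (1.0, 2.0, 4.0):
    print(f"rim {rim} um -> about {hydration_age_years(rim, HYPOTHETICAL_RATE):.0f} years")
```

Under this model, doubling the rim thickness implies four times the age, so thickness and time are not linearly related.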

Thermoluminescence (TL) and optically stimulated luminescence (OSL) dating have perhaps been the most widely used, especially for ceramic artifacts. TL was developed in the 1960s and 1970s. It is based on the fact that some minerals, such as quartz, feldspars, and calcites, respond in a particular way to radiation, so that when heated, they give off light. The system relies on impurities in the crystals: the sites occupied by impurity atoms trap free electrons produced by the radiation, and these electrons are released when heat energy is applied. The released electrons recombine at luminescence centers and emit photons. The amount of thermoluminescence is proportional to the number of trapped electrons, which is in turn proportional to the radiation exposure, or time elapsed. The relationship is not strictly linear, however, since the longer the exposure, the fewer the sites left available to trap electrons.
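The two ideas in this paragraph, a signal that grows with accumulated radiation but levels off as traps fill, and an age derived from that radiation, can be sketched together. The sketch assumes a saturating-exponential growth curve, L = Lsat(1 − e^(−D/D0)), which is a common simple model, and the standard luminescence age equation, age = equivalent dose ÷ annual dose rate. Neither formula is given in the text above, and all numbers and function names are hypothetical.

```python
import math


def equivalent_dose_gy(signal: float, saturation_signal: float,
                       characteristic_dose_gy: float) -> float:
    """Invert L = Lsat * (1 - exp(-D / D0)) to recover the absorbed dose D in grays.

    The curve flattens as traps fill, so equal increases in signal
    correspond to ever larger increases in dose.
    """
    if not 0.0 <= signal < saturation_signal:
        raise ValueError("signal must be non-negative and below saturation")
    return -characteristic_dose_gy * math.log(1.0 - signal / saturation_signal)


def luminescence_age_years(equivalent_dose: float, dose_rate_gy_per_kyr: float) -> float:
    """Age = total dose absorbed since zeroing / dose received per thousand years."""
    return 1000.0 * equivalent_dose / dose_rate_gy_per_kyr


# Hypothetical sample: signal at 10 percent of saturation, D0 = 50 Gy,
# environmental dose rate of 3 Gy per thousand years.
dose = equivalent_dose_gy(10.0, 100.0, 50.0)
print(f"equivalent dose: {dose:.2f} Gy")
print(f"age: about {luminescence_age_years(dose, 3.0):.0f} years")

# Non-linearity: doses needed to reach 50 and 90 percent of saturation.
print(round(equivalent_dose_gy(50.0, 100.0, 50.0), 1),
      round(equivalent_dose_gy(90.0, 100.0, 50.0), 1))
```

In this toy model, raising the signal from half to nine-tenths of saturation needs more than three times the dose, which illustrates why the relationship with elapsed time is not linear.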

To "zero" the system, the object must at some point have been heated to 450°C, for example during the firing of pottery or in a hearth. It may be difficult to guarantee that objects at the edge of a hearth, say, were in fact zeroed. Pottery does not have this drawback, and objects as young as one hundred years can be dated in this way. Subsequent exposure of such items to sunlight might empty some or all of the traps, but the method is very suitable for buried objects.

The first comparisons between thermoluminescence and radiocarbon dates were published in 1970 by D. W. Zimmerman and J. Huxtable. TL dates from three sites were 5350 b.c., 5330 b.c., and 4610 b.c., and the radiocarbon dates for the same sites fall within the period 5300-4600 b.c. This was reassuring news for many scientists.

OSL works on principles similar to those of TL, but the electron traps are emptied by exposing samples to green laser light. The main difference from TL is that light rather than heat is the agent that zeroes the system and provides the dating reference. The main samples subjected to this analysis are quartz grains that were exposed to sunlight and then deposited and buried. One example is the White Horse at Uffington in southern England, a prehistoric figure of a horse cut directly into the hillside and packed with white chalk. Various experts had judged its artistic style to be either Anglo-Saxon or Celtic (Late Iron Age). However, OSL analysis of silt laid down, presumably, around the time the figure was made gave dates in the range 1400-600 b.c., placing it in the Late Bronze Age, which fits quite well with other finds in the area.

The existence of an independent, scientifically based dating framework that does not rely on stylistic similarities between objects has profoundly changed our view of the ancient world. Although each of these dating techniques has its limitations, and individual results still need to be assessed with appropriate caution, the overall pattern that emerges is quite different from that of only a few decades ago.

Consequently, the view of prehistory in areas such as western Europe has changed dramatically since the 1960s. Definitions of civilization are always difficult, and generally involve complex societies and writing. Even so, the picture of the so-called barbarian peoples of western Europe, living primitively while the great civilizations of Egypt and the Aegean thrived and "waiting" to be civilized by influences from the East, has had to be changed out of all recognition in light of the organization needed to build Stonehenge in England, Newgrange in Ireland, Maeshowe in Orkney, the megalithic tombs of Brittany and Spain, and the timber pile-dwellings of central Europe.

The nature of past environments is a key aspect of archaeology because human action cannot be understood in isolation from its surroundings. For example, the lifestyle of a human group living in a densely forested area in a temperate climate would be very different from that of the same community inhabiting a treeless arctic landscape. Furthermore, in the case of any individual archaeological site, it must be realized that the modern environment may bear little relationship to that of the past. There may have been major changes in climate, sea level, soils, and plant and animal communities over the millennia. Thus a site occupying a coastal setting in the Mesolithic period might now lie several kilometers inland, or it might be completely submerged by the sea.

The reconstruction of past environments is based on many types of evidence, ranging from long-term perspectives on climate change provided by analysis of deep sea sediments and the Greenland and Antarctic ice sheets to reconstruction of local plant and animal communities from biological remains excavated from archaeological sites. Specialists from many fields, including climatologists, geologists, soil scientists, botanists, and zoologists are involved in analyzing such data.

THE HISTORY OF ARCHAEOLOGICAL INTEREST IN THE ENVIRONMENT

Until the 1970s archaeology was concerned mainly with using structures and artifacts to produce a reconstruction of a site, with little attention paid to the surrounding environment. If any "environmental" evidence at all was retrieved, it usually consisted of animal bones and larger plant remains (such as charred grain), which might be discussed in relation to site economy.

Important exceptions did exist, notably where excavation of wetland sites was involved. In wetlands, permanent waterlogging results in an oxygen-poor environment that reduces the level of microbial activity and enables organic materials to be preserved. These materials range from pollen grains to complete wooden buildings, and from microscopic parasite eggs to intact bodies such as the Danish Iron Age "bog bodies" Tollund Man and Grauballe Man. The discovery of sites such as the prehistoric lake villages of Switzerland in the mid-nineteenth century prompted the realization that the study of plant and animal remains could add significantly to an understanding of site function and setting.

In Britain one area of wetland that became a focus for early collaboration between archaeologists and environmental scientists was the East Anglian Fenland. The Fenland Research Committee was established in the 1930s to investigate the sedimentary history and archaeology of the area, which was densely settled in the Roman period. The prehistoric archaeology of the Fens was investigated by Grahame Clark, who later demonstrated the potential of biological remains for answering questions about environment and resource availability in his well-known excavations at the Early Mesolithic site of Star Carr in northeastern England.