Graphics Reference

In-Depth Information

be smoothed away and not detectable at the coarser levels of the pyramid where the small-motion assumption is valid. Thus, the correct motion never gets propagated to finer levels of the pyramid.
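The failure mode can be made concrete with a toy example. The following is a minimal Python sketch built on a hypothetical one-dimensional scene: a 2-pixel-wide bright object that moves 8 pixels between two frames. As a simplifying assumption, each pyramid level is formed by averaging adjacent pairs of samples (a box filter followed by subsampling), not the Gaussian filtering a practical implementation would typically use. All helper names (`downsample`, `build_pyramid`, `make_frame`) are illustrative.

```python
def downsample(signal):
    """Halve a 1D signal by averaging adjacent pairs of samples (box filter + subsample)."""
    return [(signal[i] + signal[i + 1]) / 2 for i in range(0, len(signal) - 1, 2)]


def build_pyramid(signal, levels):
    """Return the pyramid as a list ordered from finest (level 0) to coarsest."""
    pyramid = [signal]
    for _ in range(levels - 1):
        pyramid.append(downsample(pyramid[-1]))
    return pyramid


def make_frame(n, start, width=2):
    """A dark 1D frame of length n containing a bright object of `width` pixels at `start`."""
    frame = [0.0] * n
    for i in range(start, start + width):
        frame[i] = 1.0
    return frame


# Hypothetical scene: the small object moves MOTION pixels between frame 1 and frame 2.
MOTION = 8
frame1 = make_frame(64, start=20)
frame2 = make_frame(64, start=20 + MOTION)

for level, (p1, p2) in enumerate(zip(build_pyramid(frame1, 5), build_pyramid(frame2, 5))):
    displacement = MOTION / 2 ** level  # the apparent motion halves at each coarser level
    contrast = min(max(p1), max(p2))    # how strongly the object still stands out
    print(f"level {level}: displacement {displacement:g} px, peak contrast {contrast:.3f}")
```

In this toy setup the displacement first shrinks to about 1 pixel at level 3, but by then the object's peak contrast has already fallen to 0.25 of its original value (and to 0.125 at level 4). With a box filter the exact numbers depend on how the object aligns with the sampling grid; the general trend, that a small object fades as the motion becomes small, is the point being illustrated.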

Techniques for large-displacement optical flow often adapt ideas from the invariant descriptor literature discussed in the last chapter to match regions that are far apart. For example, Brox et al. [73] proposed an algorithm that begins with the segmentation of each image into roughly constant-texture patches, each of which is described by a SIFT-like descriptor. The descriptors are matched to obtain several nearest-neighbor correspondences, and optical flow is estimated for each patch pair. Finally, an additional term is added to a robust Horn-Schunck-type cost function that biases the flow $(u, v)$ at $(x, y)$ to be close to one of the $(u_i, v_i)$ candidates at $(x, y)$ obtained from the descriptor matching.
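The matching step and the extra cost term can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the published formulation: patches are hypothetical (centroid, descriptor) pairs, the k nearest descriptors in the second image yield candidate vectors $(u_i, v_i)$ = centroid difference, candidates are attached only to patch centroids, and "close to one of the candidates" is expressed by taking the minimum robust distance over candidates. The robust function $\Psi(s^2) = \sqrt{s^2 + \epsilon^2}$ is a common choice in Horn-Schunck-type energies; all function names are illustrative.

```python
import math


def robust_penalty(s2, eps=1e-3):
    """Charbonnier-style robust function Psi(s^2) = sqrt(s^2 + eps^2)."""
    return math.sqrt(s2 + eps * eps)


def nearest_neighbors(query, descriptors, k):
    """Indices of the k descriptors closest to `query` (squared Euclidean, brute force)."""
    dists = [(sum((a - b) ** 2 for a, b in zip(query, d)), i)
             for i, d in enumerate(descriptors)]
    return [i for _, i in sorted(dists)[:k]]


def candidate_displacements(patches1, patches2, k):
    """Turn each image-1 patch's k nearest descriptor matches in image 2 into
    candidate flow vectors (u_i, v_i) = centroid2 - centroid1.

    patches1, patches2: lists of ((x, y) centroid, descriptor vector).
    Returns a dict mapping each image-1 centroid to its list of candidates.
    """
    descs2 = [d for _, d in patches2]
    candidates = {}
    for (x1, y1), d1 in patches1:
        candidates[(x1, y1)] = [(patches2[i][0][0] - x1, patches2[i][0][1] - y1)
                                for i in nearest_neighbors(d1, descs2, k)]
    return candidates


def candidate_term(flow, candidates, eps=1e-3):
    """Sum over pixels of the robust distance from (u, v) to the closest candidate.

    flow: dict mapping pixel (x, y) -> current flow estimate (u, v).
    candidates: dict mapping pixel (x, y) -> list of candidate (u_i, v_i).
    Pixels without candidates contribute nothing to this term.
    """
    total = 0.0
    for pixel, (u, v) in flow.items():
        cands = candidates.get(pixel, [])
        if cands:
            total += min(robust_penalty((u - ui) ** 2 + (v - vi) ** 2, eps)
                         for ui, vi in cands)
    return total


# Hypothetical patches: (centroid, 3-element descriptor).
patches1 = [((10, 10), [1.0, 0.0, 0.0]), ((30, 12), [0.0, 1.0, 0.0])]
patches2 = [((40, 10), [0.9, 0.1, 0.0]), ((31, 13), [0.0, 1.0, 0.1]), ((5, 5), [0.0, 0.0, 1.0])]

cands = candidate_displacements(patches1, patches2, k=2)
flow = {(10, 10): (29.5, 0.0), (30, 12): (1.0, 1.0)}
print(cands)
print(candidate_term(flow, cands))
```

Here the first patch has a large-displacement candidate $(30, 0)$, which a coarse-to-fine scheme alone might miss; the term stays small as long as the flow is near any one candidate, so a wrong second match does not pull the estimate away. In a full energy this term would be weighted and added to the data and smoothness terms.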

Liu et al. [289] proposed another option called “SIFTFlow” that replaces the brightness constancy assumption with a SIFT-descriptor constancy assumption. That is, the optical flow data term is based on $\| S_2(x+u, y+v) - S_1(x, y) \|$, where $S_1(x, y)$ and $S_2(x, y)$ are the SIFT descriptor vectors computed at $(x, y)$ in $I_1$ and $I_2$, respectively. The descriptors are computed densely at each pixel of the images instead of at sparsely detected feature locations. However, the method is really designed for matching between different scenes, and the resulting flow fields typically appear blocky compared to those produced by the method of Brox et al.
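The descriptor-constancy data term can be evaluated directly once dense descriptors are available. The Python sketch below assumes an L1 norm with an optional truncation threshold (the norm is not specified above, and `threshold` is a hypothetical parameter), integer displacements so descriptors can be looked up without interpolation, and descriptor "images" stored as nested lists indexed `[y][x]`. Computing the SIFT descriptors themselves is out of scope; the tiny descriptor arrays are made up for illustration.

```python
def l1_distance(a, b):
    """L1 distance between two descriptor vectors of equal length."""
    return sum(abs(x - y) for x, y in zip(a, b))


def sift_flow_data_term(S1, S2, flow, threshold=None):
    """Sum over pixels of ||S2(x+u, y+v) - S1(x, y)||_1, optionally truncated.

    S1, S2: dense descriptor arrays indexed [y][x], each entry a list of floats.
    flow: array indexed [y][x] of integer displacements (u, v).
    threshold: if given, each per-pixel cost is capped at this value.
    Pixels displaced outside I2 are skipped here for brevity; a real method
    would need to penalize or otherwise handle them.
    """
    h2, w2 = len(S2), len(S2[0])
    total = 0.0
    for y, row in enumerate(S1):
        for x, d1 in enumerate(row):
            u, v = flow[y][x]
            x2, y2 = x + u, y + v
            if not (0 <= x2 < w2 and 0 <= y2 < h2):
                continue
            cost = l1_distance(S2[y2][x2], d1)
            if threshold is not None:
                cost = min(cost, threshold)
            total += cost
    return total


# Hypothetical 1x3 descriptor images; I2's content is I1's shifted right by one pixel.
S1 = [[[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]]
S2 = [[[0.2, 0.2], [1.0, 0.0], [0.0, 1.0]]]

shift_right = [[(1, 0), (1, 0), (1, 0)]]
zero_flow = [[(0, 0), (0, 0), (0, 0)]]
print(sift_flow_data_term(S1, S2, shift_right))
print(sift_flow_data_term(S1, S2, zero_flow))
```

The correct one-pixel shift gives zero cost on every pixel that stays inside the image, while zero flow accumulates descriptor mismatches. Truncation limits how much any single badly matched pixel can dominate the total.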

5.3.6 Human-Assisted Motion Annotation

In the context of visual effects, it's important to be able to interactively evaluate and modify optical flow fields, since the result of an automatic algorithm is unlikely to be immediately acceptable. Liu et al. [288] proposed a human-assisted motion annotation algorithm that semiautomatically creates an optical flow field for a video sequence in two steps. First, the user draws contours around foreground and background objects in one frame, which are automatically tracked across the rest of the frames. The user then employs a graphical user interface to refine the contours in each frame until they conform tightly to the desired object boundaries, and also specifies the relative depth ordering of the objects. Next, the optical flow is automatically estimated across the sequence within each labeled contour. The user interactively refines the optical flow in each layer by introducing point

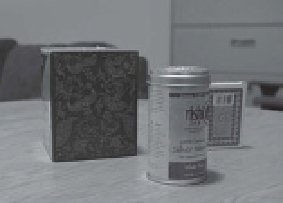

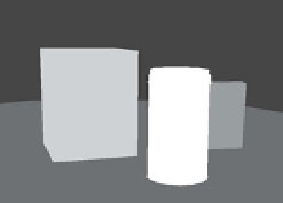

(a)

(b)

(c)

Figure 5.9.

(a) One frame of an original video sequence. (b) Layers created semi-automatically

based on the human-assistedmotion estimation algorithmof Liu et al. [

288

]. (c) The

u

components

of an optical flow field semiautomatically generated using the layers in (b). The optical flow field

is very smooth within the layers.