
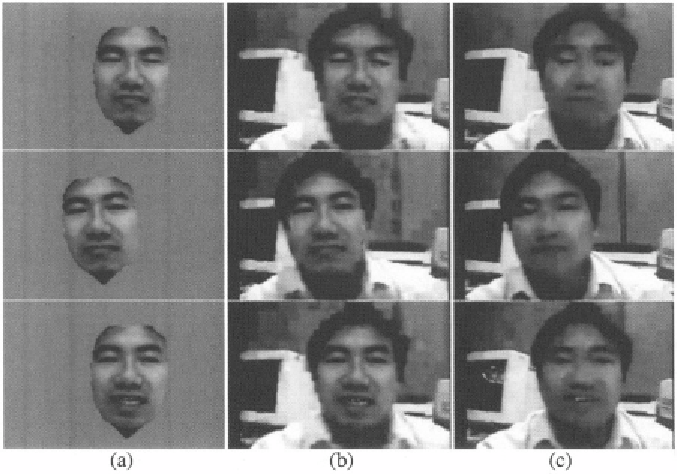

Figure 4.2. (a): The synthesized face motion. (b): The reconstructed video frame with synthesized face motion. (c): The reconstructed video frame using the H.26L codec.

2001]. Compared to other 3D non-rigid facial motion tracking approaches that use a single camera, which are cited in Section 1, the features of our tracking system can be summarized as follows: (1) the deformation space is learned automatically from data, so it avoids manual crafting while still capturing the characteristics of real facial motion; (2) it runs in real time, so it can be used in real-time human-computer interfaces and coding applications; (3) to reduce “drifting” caused by error accumulation in long-term tracking, it uses templates from both the initial frame and the previous frame when estimating the template-matching-based optical flow (see [Tao and Huang, 1999]); and (4) it is able to recover from temporary loss of tracking by incorporating a template-matching-based face detection module.
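Feature (1) states that the deformation space is learned from data instead of being handcrafted, but this passage does not name the learning method. A common way to learn such a linear deformation space is principal component analysis (PCA), and the sketch below assumes it. It also assumes that the motion-capture data arrive as one row per frame of per-vertex 3D displacements from a neutral face; the function names and this data layout are hypothetical.

```python
import numpy as np

def learn_deformation_basis(displacements: np.ndarray, k: int):
    """Learn a k-dimensional linear deformation space with PCA (assumed method).

    displacements: (num_frames, 3 * num_vertices) array; each row holds the
        3D offsets of every mesh vertex from the neutral face (hypothetical layout).
    Returns (mean, basis); basis has shape (k, 3 * num_vertices) with
    orthonormal rows ordered by decreasing variance.
    """
    mean = displacements.mean(axis=0)
    centered = displacements - mean
    # Rows of vt are the principal directions of the centered data.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:k]

def synthesize(mean, basis, coeffs):
    """Map k motion parameters back to per-vertex displacements."""
    return mean + coeffs @ basis

def project(mean, basis, displacement):
    """Least-squares motion parameters for an observed displacement.

    Because the basis rows are orthonormal, a plain matrix product suffices.
    """
    return basis @ (displacement - mean)

# Usage: synthetic "motion" that lies in a 2D subspace is reconstructed exactly.
rng = np.random.default_rng(0)
true_basis = rng.standard_normal((2, 30))
data = rng.standard_normal((200, 2)) @ true_basis
mean, basis = learn_deformation_basis(data, k=2)
c = project(mean, basis, data[5])
print(np.allclose(synthesize(mean, basis, c), data[5]))  # True
```

In a tracker built on such a model, the k coefficients would be the geometric motion parameters estimated per frame, which keeps the search space far smaller than the raw vertex coordinates.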
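Feature (3) matches each feature against two templates, one from the initial frame and one from the previous frame. The following is a minimal sketch of that idea for a single feature point, assuming a sum-of-squared-differences cost over an integer search window and a hypothetical weight `alpha` that blends the two costs; the exact combination used in [Tao and Huang, 1999] may differ, and `match_point` is an illustrative name only.

```python
import numpy as np

def match_point(frame, x, y, tmpl_init, tmpl_prev, radius=4, alpha=0.5):
    """Estimate one feature's displacement by two-template matching.

    frame: 2D grayscale image (float array) for the current frame.
    (x, y): integer feature position carried over from the previous frame.
    tmpl_init, tmpl_prev: square (2h+1, 2h+1) patches cut around the feature
        in the initial frame and in the previous frame.
    alpha: hypothetical weight on the initial-frame template. Matching against
        it anchors the track and limits drift; the previous-frame template
        follows gradual appearance changes.
    Returns (dx, dy, cost) for the best integer shift within +-radius;
    cost stays inf if no candidate patch fits inside the image.
    """
    h = tmpl_init.shape[0] // 2
    best = (0, 0, np.inf)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            cx, cy = x + dx, y + dy
            if (cy - h < 0 or cx - h < 0
                    or cy + h + 1 > frame.shape[0]
                    or cx + h + 1 > frame.shape[1]):
                continue  # candidate patch would leave the image
            patch = frame[cy - h:cy + h + 1, cx - h:cx + h + 1]
            # Blend mean squared differences against both templates.
            cost = (alpha * np.mean((patch - tmpl_init) ** 2)
                    + (1 - alpha) * np.mean((patch - tmpl_prev) ** 2))
            if cost < best[2]:
                best = (dx, dy, cost)
    return best

# Usage: shift a random image and recover the motion of a patch at (20, 20).
rng = np.random.default_rng(0)
first = rng.random((40, 40))
cur = np.roll(first, shift=(2, -3), axis=(0, 1))  # content moves down 2, left 3
tmpl = first[15:26, 15:26]  # 11x11 patch centred on x=20, y=20
print(match_point(cur, 20, 20, tmpl, tmpl)[:2])  # (-3, 2)
```

A best cost that stays high over several frames is one plausible signal for handing control to a face detection module such as the one in feature (4); the text does not specify how that decision is made.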

4. Summary

In this chapter, a robust real-time 3D geometric face tracking system is presented. The proposed motion-capture-based geometric motion model is adopted in the tracking system to replace the original handcrafted facial motion model. Therefore, extensive manual editing of the motion model can be avoided. Using the estimated geometric motion parameters, we conduct experiments on
