
method by taking the same value $1/n$ for all the weight vectors, where $n$ is the dimension of the weight vectors.

3.3.5 Probabilistic Neural Networks

The idea of probabilistic neural networks was born in the late 1980s at the Lockheed Palo Alto Research Centre, where a problem of classifying special patterns into submarine and non-submarine classes had to be solved. Specht (1988) suggested using a newly developed special kind of neural network for this purpose: the probabilistic neural network. To solve the classification problem, the new type of network had to operate in parallel with a polynomial ADALINE (Specht, 1990).

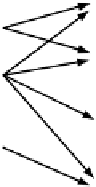

Probability network

X

1

:

:

X

2

y

1

:

:

:

:

:

:

:

:

:

:

y

m

X

k

X

p

Input Layer

Pattern Unit

Summation Unit

Output Layer

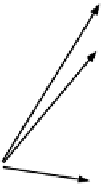

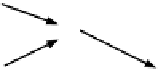

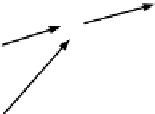

Figure 3.11.

Architecture of a probability network
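As a rough illustration of the four layers in Figure 3.11, the following Python sketch classifies a vector by passing it through pattern units with exponential (Gaussian) activations, class-wise summation units and an output layer that picks the largest sum. The Gaussian kernel, the shared smoothing parameter `sigma` and the function name `pnn_classify` are assumptions chosen for illustration, and equal priors and losses are assumed, so this is a minimal sketch rather than Specht's exact formulation. Training amounts to storing each training vector as a pattern unit.

```python
import math
from collections import defaultdict


def pnn_classify(x, train_x, train_y, sigma=0.5):
    """Classify x with a minimal probabilistic-network sketch.

    Assumptions for illustration: Gaussian pattern units sharing one
    smoothing parameter `sigma`, and equal priors and losses for all classes.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    # Pattern units: one per stored training vector w, each applying an
    # exponential activation to the squared distance between x and w.
    for w, label in zip(train_x, train_y):
        sq_dist = sum((xi - wi) ** 2 for xi, wi in zip(x, w))
        # Summation units: accumulate the pattern outputs of each class.
        sums[label] += math.exp(-sq_dist / (2.0 * sigma ** 2))
        counts[label] += 1
    # Averaging gives an unnormalised Parzen estimate of each class density.
    densities = {label: sums[label] / counts[label] for label in sums}
    # Output layer: choose the class with the largest estimated density.
    return max(densities, key=densities.get), densities


# "Training" is a single pass: the labelled vectors are simply stored.
train_x = [(0.0, 0.0), (0.2, 0.1), (1.0, 1.0), (0.9, 1.1)]
train_y = ["A", "A", "B", "B"]

label, densities = pnn_classify((0.1, 0.0), train_x, train_y)
print(label)  # A
```

Because all classes share the same kernel width, the omitted normalising constant of the Gaussian does not change which class wins; unequal priors or losses could be handled by weighting each class sum as in the Bayesian decision rule.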

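Suppose that $P_1, P_2, \ldots, P_m$ are the a priori probabilities that the vector $\mathbf{x}$ belongs to the corresponding categories, and denote by $L_1, L_2, \ldots, L_m$ the classification losses, where $L_i$ is the loss incurred when a vector belonging to category $i$ is misclassified. If $p_i(\mathbf{x})$ is the probability density function of category $i$, the Bayesian decision rule evaluates the products $P_i L_i p_i(\mathbf{x})$ for $i = 1, 2, \ldots, m$ and selects the category with the largest product value. For example, if

$$P_i L_i p_i(\mathbf{x}) \ge P_j L_j p_j(\mathbf{x}) \quad \text{for all } j \ne i$$

holds, the input vector $\mathbf{x}$ is assigned to category $i$. In this case the decision boundary for the above decision, which can be a nonlinear decision surface of arbitrary complexity, is defined by

$$p_i(\mathbf{x}) = \frac{L_j P_j}{L_i P_i}\, p_j(\mathbf{x}).$$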
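The decision rule can be tried out numerically. The hypothetical helper `bayes_decide` below computes $P_i L_i p_i(\mathbf{x})$ for each category and returns the (0-based) index of the largest product, while the hypothetical `boundary_ratio` returns the ratio $L_j P_j / (L_i P_i)$ that defines the decision boundary between categories $i$ and $j$. The density values are assumed to be given here; in a probabilistic network they would be estimated from the training data.

```python
def bayes_decide(priors, losses, densities):
    """Return the 0-based index i maximising P_i * L_i * p_i(x), plus all products.

    priors[i]    -- a priori probability P_i of category i
    losses[i]    -- loss L_i for misclassifying a vector of category i
    densities[i] -- density value p_i(x) at the input vector x (assumed given)
    """
    scores = [P * L * p for P, L, p in zip(priors, losses, densities)]
    best = max(range(len(scores)), key=lambda i: scores[i])
    return best, scores


def boundary_ratio(priors, losses, i, j):
    """Ratio L_j P_j / (L_i P_i); x lies on the i/j boundary when
    p_i(x) equals this ratio times p_j(x)."""
    return (losses[j] * priors[j]) / (losses[i] * priors[i])


category, scores = bayes_decide([0.7, 0.3], [1.0, 5.0], [0.4, 0.2])
print(category)  # 1, i.e. the second category
print(boundary_ratio([0.7, 0.3], [1.0, 5.0], 0, 1))
```

Here the larger loss of 5.0 for the second category outweighs its lower prior and density, so $\mathbf{x}$ is assigned to the second category; equivalently, the density ratio $p_1/p_2 = 2$ falls below the boundary ratio of about 2.14.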

The structure of probabilistic networks is similar to that of backpropagation networks, but the two types of network use different activation functions: in probabilistic networks the sigmoid function is replaced by a class of exponential functions (Specht, 1988). Moreover, probabilistic networks require only a single training pass, and, as the number of training examples grows, their decision surfaces approach the Bayes-optimal decision boundaries (Specht, 1990). This is achieved by modelling the well-known Bayesian classifier that
