Cryptography Reference

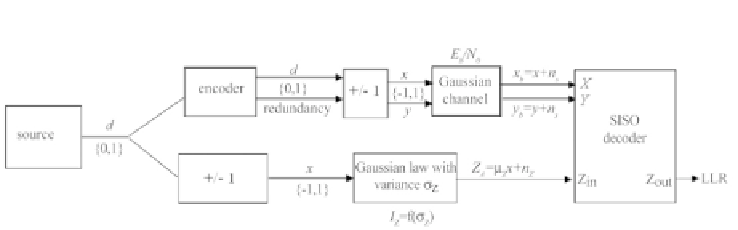

Figure 7.26 - Algorithm for determining the transfer function

$$I_E = T(I_A, E_b/N_0)$$

b. Definition of the a priori mutual information

Hypotheses:

• Hyp. 1: when the interleaving is large enough, the distribution of the input extrinsic information can be approximated by a Gaussian distribution after a few iterations.

• Hyp. 2: the probability density $f(z|x)$ satisfies the exponential symmetry condition, that is, $f(z|x) = f(-z|x)\exp(-z)$.

The first hypothesis allows the a priori LLR $Z_A$ of a SISO decoder to be modelled as the transmitted information symbol $x$ scaled by an expectation $\mu_z$, plus an independent zero-mean Gaussian noise $n_z$ of variance $\sigma_z^2$, according to the expression

$$Z_A = \mu_z x + n_z$$

The second hypothesis imposes $\sigma_z^2 = 2\mu_z$. The amplitude of the extrinsic information is therefore modelled by the following distribution:

$$f(\lambda|x) = \frac{1}{\sqrt{4\pi\mu_z}} \exp\left[-\frac{(\lambda - \mu_z x)^2}{4\mu_z}\right] \tag{7.60}$$
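The Gaussian model can be checked empirically. The following is a minimal sketch, assuming the constraint $\sigma_z^2 = 2\mu_z$ and a binary symbol $x \in \{-1, +1\}$; the function name `sample_a_priori_llr`, the value of `mu_z`, and the sample count are illustrative choices, not taken from the text.

```python
import math
import random

def sample_a_priori_llr(x, mu_z, rng):
    """Draw one a priori LLR Z_A = mu_z * x + n_z.

    n_z is zero-mean Gaussian noise whose variance is tied to the
    mean by sigma_z^2 = 2 * mu_z (assumed from the second hypothesis).
    """
    sigma_z = math.sqrt(2.0 * mu_z)
    return mu_z * x + rng.gauss(0.0, sigma_z)

# Illustrative parameters (hypothetical values).
rng = random.Random(0)
mu_z = 2.0
samples = [sample_a_priori_llr(+1, mu_z, rng) for _ in range(200_000)]

# Empirical moments of the generated LLRs for x = +1.
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"mean = {mean:.3f}, variance = {var:.3f}")
```

With enough samples, the empirical mean should approach $\mu_z$ and the empirical variance should approach $2\mu_z$, so a single parameter is enough to describe the a priori LLR distribution.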

From (7.59) and (7.60), observing that $f(z|1) = f(-z|-1)$, we deduce the a priori mutual information:

$$I_A = \int_{-\infty}^{+\infty} \frac{1}{\sqrt{4\pi\mu_z}} \exp\left[-\frac{(\lambda - \mu_z)^2}{4\mu_z}\right] \times \log_2\left[\frac{2}{1+\exp(-\lambda)}\right] d\lambda$$

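or again

$$I_A = 1 - \int_{-\infty}^{+\infty} \frac{1}{\sqrt{2\pi}\,\sigma_z} \exp\left[-\frac{(\lambda - \sigma_z^2/2)^2}{2\sigma_z^2}\right] \times \log_2\left[1+\exp(-\lambda)\right] d\lambda \tag{7.61}$$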
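The integral in (7.61) has no closed form, so in practice it is evaluated numerically. The two expressions for $I_A$ are linked by $\log_2[2/(1+e^{-\lambda})] = 1 - \log_2(1+e^{-\lambda})$ and $\mu_z = \sigma_z^2/2$. The sketch below is one possible way to evaluate it, using a midpoint rule over a window of $\pm 10\sigma_z$ around the mean; the function names, the window width, and the step count are assumptions made for illustration.

```python
import math

def log2_one_plus_exp_neg(lam):
    """Numerically stable evaluation of log2(1 + exp(-lam))."""
    if lam >= 0.0:
        return math.log1p(math.exp(-lam)) / math.log(2.0)
    # For negative lam, factor out exp(-lam) to avoid overflow.
    return (-lam + math.log1p(math.exp(lam))) / math.log(2.0)

def a_priori_mutual_information(sigma_z, steps=4000, span=10.0):
    """Approximate I_A from (7.61) with a midpoint rule.

    The Gaussian has mean sigma_z^2 / 2 and standard deviation sigma_z.
    The integration window (span) and step count are illustrative choices.
    """
    if sigma_z <= 0.0:
        return 0.0  # limiting case: the a priori LLR carries no information
    mean = sigma_z ** 2 / 2.0
    lower = mean - span * sigma_z
    h = 2.0 * span * sigma_z / steps
    norm = 1.0 / (math.sqrt(2.0 * math.pi) * sigma_z)
    total = 0.0
    for k in range(steps):
        lam = lower + (k + 0.5) * h
        pdf = norm * math.exp(-((lam - mean) ** 2) / (2.0 * sigma_z ** 2))
        total += pdf * log2_one_plus_exp_neg(lam)
    return 1.0 - total * h

for sigma in (0.5, 1.0, 2.0, 4.0, 8.0):
    print(sigma, round(a_priori_mutual_information(sigma), 4))
```

The result grows monotonically from 0 towards 1 as $\sigma_z$ increases, which is why $I_A$ can be swept over its whole range by varying $\sigma_z$ alone when generating a priori LLRs for a transfer-function measurement.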