Image Processing Reference


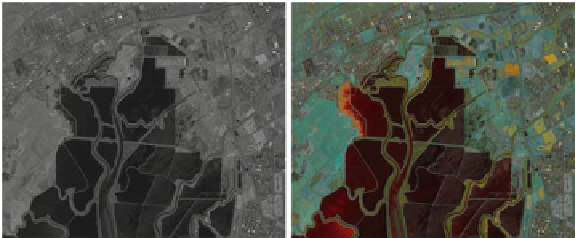

Fig. 6.2 Results of fusion for the variational approach for the moffett2 image from the AVIRIS. (a) Grayscale fused image, and (b) RGB fused image

bands each of which has a nominal bandwidth of 10 nm. The RGB version of the fusion output is obtained by independently fusing three nearly equal subsets of the input hyperspectral data, and then assigning the three fused images to the different channels of the display. As previously stated, we have followed a simple strategy for partitioning the set of hyperspectral bands, that is, the sequentially arranged bands are partitioned into three nearly equal subsets. The RGB version of the result for the moffett2 data is shown in Fig. 6.2b. One may notice that the fusion results are quite good, although some loss of detail may be seen at small bright objects due to the smoothness constraint imposed on the fused image. For improved results, one may use a discontinuity-preserving smoothness criterion as suggested by Geman and Geman [63]. However, this would make the solution computationally very demanding and hence is not pursued here.
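The partitioning strategy described above can be illustrated with a minimal Python sketch. It assumes the hyperspectral cube is stored as a NumPy array of shape (K, H, W) with the bands in sequential order. The names `fuse_bands` and `rgb_from_subsets` are hypothetical, and the per-pixel mean is only a stand-in so that the sketch runs; it is not the variational fusion used in this chapter. The order in which the three subsets are assigned to the display channels is also an assumption, since the text does not specify it.

```python
import numpy as np


def fuse_bands(bands):
    """Placeholder fusion rule: per-pixel mean over the band axis.

    Assumption: this is only a stand-in so the sketch runs; the chapter
    fuses each subset with its variational technique instead.
    """
    return bands.mean(axis=0)


def rgb_from_subsets(cube, fuse=fuse_bands):
    """Build an (H, W, 3) composite from a (K, H, W) hyperspectral cube.

    The bands are assumed to be in sequential order along axis 0.
    """
    if cube.ndim != 3 or cube.shape[0] < 3:
        raise ValueError("expected a (K, H, W) cube with K >= 3 bands")
    # Three contiguous subsets whose sizes differ by at most one band.
    subsets = np.array_split(cube, 3, axis=0)
    # Fuse every subset independently of the other two.
    channels = [fuse(subset) for subset in subsets]
    # Assumption: subsets map to display channels in order; the text does
    # not state which subset drives which channel.
    return np.stack(channels, axis=-1)


# Small synthetic cube: 7 bands of 2 x 2 pixels (split as 3, 2 and 2 bands).
cube = np.arange(7 * 2 * 2, dtype=float).reshape(7, 2, 2)
rgb = rgb_from_subsets(cube)
print(rgb.shape)  # (2, 2, 3)
```

Any fusion routine that maps a (k, H, W) stack to a single (H, W) image can be passed as `fuse`, so the same partitioning code can be reused with a different fusion rule for each experiment.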

6.6 Summary

A technique for fusion of hyperspectral images without explicit computation of fusion weights has been discussed in this chapter. The weighting function, defined as a data-dependent term, is an implicit part of the fusion process. It is derived from the local contrast of the input hyperspectral bands, and is also based on the concept of balancing the radiometric information in the fused image. An iterative solution based on the Euler-Lagrange equation refines the fused image, which combines the pixels with high local contrast into a smooth and visually pleasing output. However, at some places the output appears oversmoothed, which smears the edges and boundaries.

The solution converges to the desired fused image within a few iterations. However, it requires computation of the implicit weighting function at the end of every iteration, which makes it computationally expensive compared to the techniques described in the previous chapters.