Java Reference

In-Depth Information

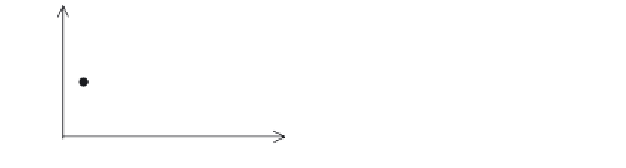

Income

Income

Age

Age

Figure 1-4

Fitting a regression line to a set of data points.

In two dimensions,

4

involving the attributes age and income, and

with a small number of data points, the problem seems fairly simple.

We could probably even “eye” a solution by drawing a line to fit the

data and estimating the values for

m

and

b

. However, consider data

that is not in two dimensions, but a hundred, a thousand, or several

thousand dimensions. The attributes may include numerical values

or consist of categories that are either numbers or strings. Some of

the categorical data may have an ordering (e.g.,

high,

medium,

low

) or

be unordered (e.g.,

married,

unmarried,

widowed

). Further, consider

cases where there are tens of thousands or millions of cases. It is

intractable for a human to make sense of this data, but it is relatively

easy for the right algorithm executing on a sufficiently powerful

computer. Here lies the essence of data mining.

Now, data mining algorithms are typically much more complex

than that of linear regression; however, the concept is the same: there

is a compact representation of the “knowledge” present in the data

that can be used for prediction or inspection.

1.2.5

Some Jargon

Every field has its jargon—the vernacular of the “in crowd.” Here's a

quick overview of some of the data mining jargon.

At one level, data mining experts talk about things like models

and techniques, or

mining functions

called classification, regression,

clustering, attribute importance, and association.

Classification

mod-

els predict some outcome, such as which offer a customer will

respond to.

Regression

models predict a continuous number, such as

what is the predicted value of a home or a person's income.

4

This use of the term “dimension” here should not be confused with the same

term as used in OLAP, which has a different intent. Here, the term “dimension”

is synonymous with “attribute” or “column.”

Search WWH ::

Custom Search