
7.2.5

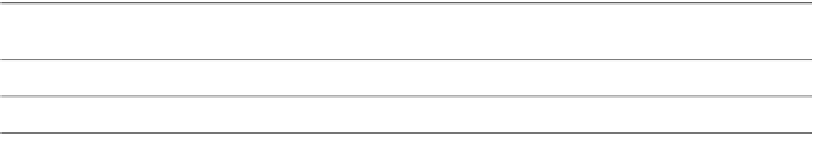

Evaluate Model Quality: Compute Regression Test Metrics

In regression models, quality is measured by computing the cumulative error between predicted values and known (actual) values. Generally, the lower the cumulative error, the better the model performs. Many metrics can be used to quantify error, such as root mean squared (RMS) error, mean absolute error, and R-squared. Table 7-8 illustrates the computation of some of these metrics using three cases. To compute the mean absolute error, we divide the sum of the absolute values of the prediction errors by the number of predictions. To compute the root mean squared error, we first compute the mean of the squared prediction errors and then take its square root.
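The two computations just described can be sketched in plain Java. This is a minimal sketch, not part of the JDM API: the class and method names (`RegressionErrorMetrics`, `predictionErrors`, `meanAbsoluteError`, `rootMeanSquaredError`) are hypothetical, and `main` feeds in the three prediction errors listed in Table 7-8.

```java
// Hypothetical helper class illustrating MAE and RMSE; not a JDM API.
public class RegressionErrorMetrics {

    // Prediction error for each case: predicted value minus actual value.
    public static double[] predictionErrors(double[] predicted, double[] actual) {
        if (predicted.length != actual.length || predicted.length == 0) {
            throw new IllegalArgumentException("need equal-length, non-empty arrays");
        }
        double[] errors = new double[predicted.length];
        for (int i = 0; i < predicted.length; i++) {
            errors[i] = predicted[i] - actual[i];
        }
        return errors;
    }

    // Mean absolute error: sum of |error| divided by the number of predictions.
    public static double meanAbsoluteError(double[] errors) {
        double sum = 0.0;
        for (double e : errors) {
            sum += Math.abs(e);
        }
        return sum / errors.length;
    }

    // Root mean squared error: square root of the mean of the squared errors.
    public static double rootMeanSquaredError(double[] errors) {
        double sumOfSquares = 0.0;
        for (double e : errors) {
            sumOfSquares += e * e;
        }
        return Math.sqrt(sumOfSquares / errors.length);
    }

    public static void main(String[] args) {
        // Prediction errors from Table 7-8 (absolute values, in dollars).
        double[] errors = {50_000, 20_000, 70_000};
        System.out.printf("MAE  = %.0f%n", meanAbsoluteError(errors));
        System.out.printf("RMSE = %.0f%n", rootMeanSquaredError(errors));
    }
}
```

Running `main` prints 46667 for the mean absolute error and 50990 for the root mean squared error, matching Table 7-8. Taking an array of errors, rather than predicted and actual values directly, keeps each metric independent of how the errors were obtained; `predictionErrors` covers the common case of starting from the two value arrays.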

Another metric, the R-squared value, measures the relative predictive power of a model, that is, the proportion of the variance in the target that the model explains. R-squared is a descriptive measure that typically lies between 0 and 1; the closer it is to 1, the more accurate the regression model. When R-squared equals 1, the regression makes perfect predictions. For more details about these regression model evaluation metrics, refer to [Witten/Frank 2005].
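The text does not give a formula for R-squared. A common definition is R² = 1 − SS_res / SS_tot, where SS_res is the sum of squared prediction errors and SS_tot is the sum of squared deviations of the actual values from their mean. The hypothetical `RSquared` class below is a sketch under that assumption, not the JDM implementation. Note that on held-out test data this value can fall below 0 when the model predicts worse than simply always predicting the mean of the actual values.

```java
// Hypothetical sketch of R-squared (coefficient of determination); not a JDM API.
public class RSquared {

    // R-squared = 1 - SS_res / SS_tot.
    public static double rSquared(double[] predicted, double[] actual) {
        if (predicted.length != actual.length || actual.length == 0) {
            throw new IllegalArgumentException("need equal-length, non-empty arrays");
        }
        // Mean of the actual (known) target values.
        double mean = 0.0;
        for (double a : actual) {
            mean += a;
        }
        mean /= actual.length;

        double ssRes = 0.0; // sum of squared prediction errors
        double ssTot = 0.0; // sum of squared deviations from the mean
        for (int i = 0; i < actual.length; i++) {
            double residual = actual[i] - predicted[i];
            double deviation = actual[i] - mean;
            ssRes += residual * residual;
            ssTot += deviation * deviation;
        }
        if (ssTot == 0.0) {
            throw new IllegalArgumentException("R-squared is undefined when all actual values are equal");
        }
        return 1.0 - ssRes / ssTot;
    }

    public static void main(String[] args) {
        double[] actual = {1.0, 2.0, 3.0, 4.0};
        // Perfect predictions give R-squared = 1.
        System.out.println(rSquared(actual, actual));
        // Always predicting the mean (2.5) gives R-squared = 0.
        System.out.println(rSquared(new double[] {2.5, 2.5, 2.5, 2.5}, actual));
    }
}
```

Running `main` prints 1.0 and then 0.0, the two reference points that anchor the scale: a perfect model and a model no better than predicting the mean.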

7.2.6 Apply Model: Obtain Prediction Results

After finding a regression model with the lowest error, we apply that model to new data to make predictions. The model signature, as discussed for classification in Section 7.2.1, also applies to regression: the apply data must provide all the attributes in the model's signature. Of course, attribute values for some cases may be missing, and most models can function normally in the presence of some missing values. The regression apply operation can produce contents such as the predicted value (the target value predicted by the model) and

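Table 7-8 Regression test metrics

| Promotion ID | Predicted value (p) | Actual value (a) | Prediction error, absolute value of (p − a) |
|---|---|---|---|
| 1 | $950,000 | $900,000 | $50,000 |
| 2 | $725,000 | $745,000 | $20,000 |
| 3 | $1,050,000 | $970,000 | $70,000 |
| Mean absolute error | | | $46,667 |
| Root mean squared error | | | $50,990 |

\[ \text{Mean absolute error} = \frac{50{,}000 + 20{,}000 + 70{,}000}{3} \approx 46{,}667 \]

\[ \text{Root mean squared error} = \sqrt{\frac{50{,}000^2 + 20{,}000^2 + 70{,}000^2}{3}} \approx 50{,}990 \]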
