
in that layer. For more details about neural networks refer to [Han/Kamber 2006].

7.1.6 Evaluate Model Quality: Compute Classification Test Metrics

It is important to evaluate the quality of supervised models before using them to make predictions in a production system. As discussed in Chapter 3, to test a supervised model, the historical data is split into two datasets: one for building the model and the other for testing it. Test cases are typically not used to build the model, so that the test gives a true assessment of the model's predictive accuracy.
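The hold-out split described above can be sketched in plain Java as follows. This is a minimal illustration and not part of the JDM API: the class and method names (`HoldoutSplit`, `split`), the 70/30 build/test ratio, and the fixed random seed are all assumptions chosen for the example.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Random;

public class HoldoutSplit {

    /** The two disjoint datasets produced by a split. */
    public record Split<T>(List<T> build, List<T> test) { }

    /**
     * Randomly partitions cases into a build dataset and a test dataset.
     * buildFraction is the share of cases used for model building (assumed, e.g. 0.7).
     */
    public static <T> Split<T> split(List<T> cases, double buildFraction, long seed) {
        if (buildFraction <= 0.0 || buildFraction >= 1.0) {
            throw new IllegalArgumentException("buildFraction must be between 0 and 1");
        }
        List<T> shuffled = new ArrayList<>(cases);
        Collections.shuffle(shuffled, new Random(seed)); // fixed seed => repeatable split
        int cut = (int) Math.round(shuffled.size() * buildFraction);
        // Each case lands in exactly one list, so test cases never reach the build step.
        return new Split<>(new ArrayList<>(shuffled.subList(0, cut)),
                           new ArrayList<>(shuffled.subList(cut, shuffled.size())));
    }

    public static void main(String[] args) {
        List<Integer> caseIds = new ArrayList<>();
        for (int i = 0; i < 1000; i++) {
            caseIds.add(i);
        }
        Split<Integer> s = split(caseIds, 0.7, 42L);
        System.out.println("build=" + s.build().size() + " test=" + s.test().size());
    }
}
```

With 1,000 cases and a build fraction of 0.7, `main` prints `build=700 test=300`. Shuffling before cutting avoids biasing the test set when the historical data is stored in some meaningful order.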

JDM supports four popular types of test metrics for classification models: prediction accuracy, confusion matrix, receiver operating characteristic (ROC), and lift. These metrics are computed by comparing the predicted and actual target values. This section discusses these test metrics in the context of ABCBank's customer attrition problem.

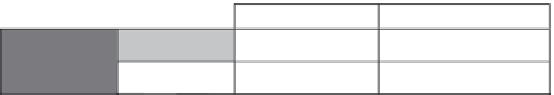

In the customer attrition problem, assume that the test dataset has 1,000 cases and the classification model predicted 910 cases correctly and 90 cases incorrectly. The accuracy of the model on this dataset is 910/1,000 = 0.91, or 91 percent.
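As a minimal sketch of how prediction accuracy is obtained by comparing predicted and actual target values, the hypothetical `PredictionAccuracy.accuracy` method below counts the positions where two label lists agree. It does not use the JDM API, and the four cases in `main` are made up for illustration.

```java
import java.util.List;
import java.util.Locale;

public class PredictionAccuracy {

    /** Fraction of test cases whose predicted target value equals the actual one. */
    public static double accuracy(List<String> actual, List<String> predicted) {
        if (actual.size() != predicted.size() || actual.isEmpty()) {
            throw new IllegalArgumentException("need equal-length, non-empty lists");
        }
        int correct = 0;
        for (int i = 0; i < actual.size(); i++) {
            if (actual.get(i).equals(predicted.get(i))) {
                correct++;
            }
        }
        return (double) correct / actual.size();
    }

    public static void main(String[] args) {
        // Hypothetical test cases: 3 of the 4 predictions match the actual value.
        List<String> actual    = List.of("Attriter", "Non-attriter", "Non-attriter", "Attriter");
        List<String> predicted = List.of("Attriter", "Non-attriter", "Attriter",     "Attriter");
        System.out.println(String.format(Locale.ROOT, "accuracy = %.2f",
                                         accuracy(actual, predicted)));
    }
}
```

This prints `accuracy = 0.75`. Applied to the 1,000 test cases above, the same count would find 910 matches and return 0.91.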

Suppose that of the 910 correct predictions, 750 are non-attriters and the remaining 160 are attriters. Of the 90 incorrect predictions, 60 customers are predicted as Attriters when they are actually Non-attriters, and 30 are predicted as Non-attriters when they are actually Attriters. This is illustrated in Figure 7-6.

To represent this, we use a matrix called a confusion matrix. A confusion matrix is a two-dimensional, N × N table that indicates the number of correct and incorrect predictions a classification model made on specific test data, where N is the number of target attribute values (in the attrition problem, N = 2: Attriter and Non-attriter). It is called a confusion matrix because it points out where the model gets confused, that is, where it makes incorrect predictions.

| Actual \ Predicted | Attriter | Non-attriter |
|---|---|---|
| Attriter | 160 | 30 (FN) |
| Non-attriter | 60 (FP) | 750 |

Figure 7-6 Confusion matrix.
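The confusion matrix in Figure 7-6 can be reproduced with a small N × N count table indexed by actual value (row) and predicted value (column). The `SimpleConfusionMatrix` class below is a hypothetical sketch, not JDM's own confusion-matrix interface; it loads the counts from the figure and derives accuracy from the diagonal, which should agree with the 91 percent computed earlier.

```java
import java.util.List;
import java.util.Locale;

public class SimpleConfusionMatrix {

    private final List<String> labels; // target attribute values; list index = row/column
    private final long[][] counts;     // counts[actual][predicted], an N x N table

    public SimpleConfusionMatrix(List<String> labels) {
        this.labels = List.copyOf(labels);
        this.counts = new long[labels.size()][labels.size()];
    }

    /** Records one test case by comparing its actual and predicted target values. */
    public void add(String actual, String predicted) {
        add(actual, predicted, 1);
    }

    /** Records n test cases that share the same actual and predicted values. */
    public void add(String actual, String predicted, long n) {
        counts[indexOf(actual)][indexOf(predicted)] += n;
    }

    public long count(String actual, String predicted) {
        return counts[indexOf(actual)][indexOf(predicted)];
    }

    /** Accuracy = sum of the diagonal (correct predictions) / total cases. */
    public double accuracy() {
        long correct = 0, total = 0;
        for (int a = 0; a < counts.length; a++) {
            for (int p = 0; p < counts.length; p++) {
                total += counts[a][p];
                if (a == p) {
                    correct += counts[a][p];
                }
            }
        }
        if (total == 0) {
            throw new IllegalStateException("no cases recorded");
        }
        return (double) correct / total;
    }

    private int indexOf(String label) {
        int i = labels.indexOf(label);
        if (i < 0) {
            throw new IllegalArgumentException("unknown target value: " + label);
        }
        return i;
    }

    public static void main(String[] args) {
        SimpleConfusionMatrix cm =
            new SimpleConfusionMatrix(List.of("Attriter", "Non-attriter"));
        // Counts from Figure 7-6 (rows = actual, columns = predicted)
        cm.add("Attriter", "Attriter", 160);
        cm.add("Attriter", "Non-attriter", 30);      // false negatives
        cm.add("Non-attriter", "Attriter", 60);      // false positives
        cm.add("Non-attriter", "Non-attriter", 750);
        System.out.println("FN = " + cm.count("Attriter", "Non-attriter"));
        System.out.println("FP = " + cm.count("Non-attriter", "Attriter"));
        System.out.println(String.format(Locale.ROOT, "accuracy = %.2f", cm.accuracy()));
    }
}
```

Running `main` prints `FN = 30`, `FP = 60`, and `accuracy = 0.91`. Keeping the full table, rather than only a correct/incorrect tally, is what lets the off-diagonal cells show which kind of mistake the model makes.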
