
z

−

1

z

−

1

z

−

1

z

−

1

x

(

τ

)

Bias

y

(

τ

)

u

(

τ

)

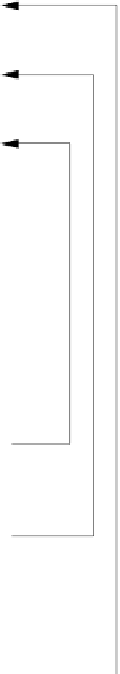

Fig. 6.12 A fully recurrent one hidden layer neural network. The notation is explained in the text.

6.2.1.3 Application of ZED to RTRL

In this case the ZED cannot be applied, as it was previously, to a static set of n error values. Instead, one has to consider the L previous values of the error in order to build a dynamic approximation of the error density. This is done with a sliding time window, which allows the density to be defined within an online formulation of the learning problem.
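The sliding-window idea can be illustrated with a minimal sketch. It keeps only the L most recent errors and evaluates a Parzen estimate of the error density at zero, together with its derivative with respect to each windowed error. The Gaussian kernel, the fixed bandwidth h, scalar errors, and the class and method names (`SlidingWindowZED`, `push`, `density_at_zero`, `gradient_wrt_errors`) are all illustrative assumptions, not the text's own definitions. In an actual RTRL update, these derivatives would still have to be chained with the sensitivities of the network outputs with respect to the weights, which is not shown here.

```python
import math
from collections import deque
from typing import List


class SlidingWindowZED:
    """Hypothetical helper: ZED estimate over a sliding window of L errors.

    Assumptions (not from the text): Gaussian kernel, scalar errors,
    fixed bandwidth h.
    """

    def __init__(self, window_length: int, bandwidth: float):
        if window_length < 1 or bandwidth <= 0:
            raise ValueError("window_length must be >= 1 and bandwidth > 0")
        self.h = bandwidth
        # deque with maxlen: pushing a new error discards the oldest one,
        # so the window always holds at most the L most recent errors.
        self.errors = deque(maxlen=window_length)

    def push(self, error: float) -> None:
        """Add the error of the current time step to the window."""
        self.errors.append(error)

    def density_at_zero(self) -> float:
        """f_hat(0) = 1/(L h sqrt(2 pi)) * sum_i exp(-e_i^2 / (2 h^2))."""
        if not self.errors:
            return 0.0
        h = self.h
        total = sum(math.exp(-0.5 * (e / h) ** 2) for e in self.errors)
        return total / (len(self.errors) * h * math.sqrt(2.0 * math.pi))

    def gradient_wrt_errors(self) -> List[float]:
        """d f_hat(0) / d e_i = -e_i exp(-e_i^2 / (2 h^2)) / (L h^3 sqrt(2 pi))."""
        h = self.h
        scale = len(self.errors) * h ** 3 * math.sqrt(2.0 * math.pi)
        return [-e * math.exp(-0.5 * (e / h) ** 2) / scale for e in self.errors]


if __name__ == "__main__":
    zed = SlidingWindowZED(window_length=3, bandwidth=1.0)
    for e in [5.0, 0.0, 0.0, 0.0]:  # the 5.0 falls out of the window
        zed.push(e)
    print(list(zed.errors), zed.density_at_zero())
```

Because the estimate is maximized rather than minimized, learning ascends f_hat(0). For a positive error, the derivative is negative, so an ascent step pushes that error toward zero.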

For a training set of size n, the error r.v. e(i) represents the difference between the desired output vector and the actual output, for a given