
4250

B

B

B

B

4000

B

B

B

3750

B

3500

3250

3000

3

4

16

17

35

37

39

40

strategy

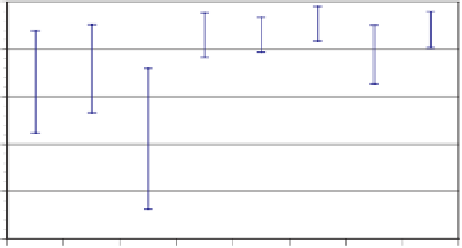

Fig. 3.

Performance of eight

Walverine

variants in the TAC-05 seeding rounds (507 games)

agent to miss two games. Moreover, it was clear to all present that the first 22 games were tainted, due to a serious malfunction by RoxyBot.[5] Since games with erratic agent behavior add noise to the scores, the TAC operators published unofficial results with the errant RoxyBot games removed (Table 2). Walverine's missed games occurred during those games, so removing them corrects both sources of our bad luck, and renders Walverine the top-scoring agent.

Table 2. Scores, adjusted scores, and 95% mean confidence intervals on control variate adjusted scores for the 58 games of the TAC Travel 2005 finals, after removing the first 22 tainted games. (LearnAgents experienced network problems for a few games, accounting for their high variance and lowering their score.)

| Agent | Raw Score | Adjusted Score | 95% C.I. |
|---|---|---|---|
| Walverine | 4157 | 4132 | ± 138 |
| RoxyBot | 4067 | 4030 | ± 167 |
| Mertacor | 4063 | 3974 | ± 152 |
| Whitebear | 4002 | 3902 | ± 130 |
| Dolphin | 3993 | 3899 | ± 149 |
| SICS02 | 3905 | 3843 | ± 141 |
| LearnAgents | 3785 | 3719 | ± 280 |
| e-Agent | 3367 | 3342 | ± 117 |
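The adjusted scores in Table 2 rely on control variates. The passage does not spell out the adjustment, so the following is only a generic sketch of the technique with a single control variate whose expected value is known. The function name, the "luck" measure, and all numbers are hypothetical and are not TAC data.

```python
from statistics import mean, variance

def control_variate_adjust(scores, covariate, covariate_mean):
    """Adjust per-game scores using one control variate with a known mean.

    Generic textbook form: adjusted = score - beta * (x - E[x]),
    with beta estimated as Cov(score, x) / Var(x) from the sample.
    """
    s_bar, x_bar = mean(scores), mean(covariate)
    n = len(scores)
    # Sample covariance between scores and the control variate.
    cov = sum((s - s_bar) * (x - x_bar) for s, x in zip(scores, covariate)) / (n - 1)
    beta = cov / variance(covariate)
    return [s - beta * (x - covariate_mean) for s, x in zip(scores, covariate)]

# Synthetic illustration only (assumed values, not tournament results).
raw = [4110.0, 3890.0, 4310.0, 3790.0, 4200.0]
luck = [0.5, -0.5, 1.5, -1.0, 1.0]  # hypothetical per-game luck measure, E[luck] = 0
adjusted = control_variate_adjust(raw, luck, 0.0)

print("raw variance:     ", variance(raw))
print("adjusted variance:", variance(adjusted))
```

When the control variate correlates with the score, the adjusted scores vary less from game to game than the raw ones, which narrows the confidence interval on the mean.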

Figure 4 shows the adjusted scores with error bars. Walverine beat the runner-up (RoxyBot) at the p = 0.17 significance level. Regardless of the ambiguity (statistical or otherwise) of Walverine's placement in the competition, we consider its strong performance under real tournament conditions to be evidence (albeit limited) of the efficacy of our approach to strategy generation in complex games such as TAC.
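As a rough plausibility check on the reported significance level, the comparison can be approximated from the Table 2 figures alone. The sketch below assumes a normal approximation, treats the two agents' adjusted means as independent, and uses a one-sided test. The text does not state which test was actually used, so these are assumptions, and the function name is hypothetical.

```python
import math

def one_sided_p(mean_a, half_a, mean_b, half_b, z_crit=1.96):
    """Approximate one-sided p-value that agent A's mean exceeds agent B's.

    half_a and half_b are 95% confidence half-widths; dividing by z_crit
    recovers the standard errors under a normal approximation (assumed).
    """
    se_a = half_a / z_crit
    se_b = half_b / z_crit
    # Standard error of the difference, assuming independent estimates.
    se_diff = math.hypot(se_a, se_b)
    z = (mean_a - mean_b) / se_diff
    # Upper-tail probability of the standard normal distribution.
    return 0.5 * math.erfc(z / math.sqrt(2))

# Adjusted scores and 95% half-widths for Walverine and RoxyBot (Table 2).
p = one_sided_p(4132, 138, 4030, 167)
print(f"approximate one-sided p-value: {p:.3f}")
```

Under these assumptions the value comes out between 0.17 and 0.19, in line with the reported p = 0.17. A small gap is expected, since the agents played the same games and the original analysis may have used a different test.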

[5] The misbehavior was due to a simple human error: instead of playing a copy of the agent on each of the two game servers per the tournament protocol, the RoxyBot team accidentally set both copies of the agent to play on the same server. RoxyBot not only failed to participate in the first server's games, but placed double bids in games on the other server.