[Figure 7.1 labels: panels (a), (b), and (c); minority class cluster 1 and minority class cluster 2; points S1 to S5; Δ1 and Δ2]

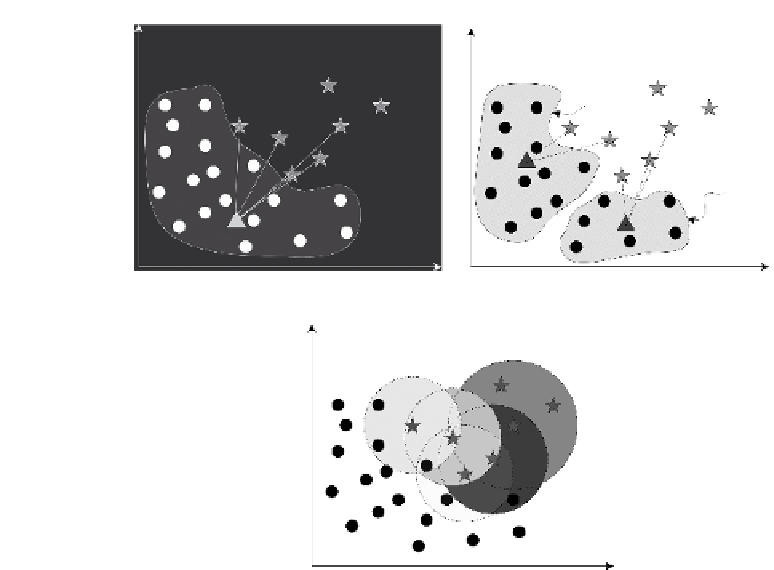

Figure 7.1 The selective accommodation mechanism: circles denote current minority class examples and stars represent previous minority class examples. (a) Estimating similarities based on distance calculation, (b) potential dilemma by estimating distance, and (c) using the number of current minority class cases within k-nearest neighbors of each previous minority example to estimate similarities.
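The idea in panel (c) can be illustrated with a minimal sketch. It makes several assumptions that the caption does not state: distances are Euclidean, the k nearest neighbors are searched among all examples of the current training data chunk (both classes), label 1 marks the minority class, and the previous minority examples with the highest counts are the ones kept. The function names `knn_minority_count` and `select_previous_examples` and the toy data are hypothetical.

```python
import math


def knn_minority_count(prev_example, current_chunk, k):
    """Count minority-class cases among the k nearest current-chunk neighbors.

    current_chunk is a list of (features, label) pairs; label 1 marks the
    minority class (an assumed convention, not taken from the source).
    """
    # Sort the current chunk by Euclidean distance to the previous example.
    by_distance = sorted(
        current_chunk, key=lambda item: math.dist(prev_example, item[0])
    )
    return sum(1 for _, label in by_distance[:k] if label == 1)


def select_previous_examples(prev_minority, current_chunk, k, n_keep):
    """Keep the n_keep previous minority examples with the highest counts."""
    scored = [(knn_minority_count(x, current_chunk, k), x) for x in prev_minority]
    # Python's sort is stable, so ties keep their original order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [x for _, x in scored[:n_keep]]


# Toy current chunk: three minority cases near the origin, four majority
# cases near (1, 1).
current_chunk = [
    ((0.0, 0.0), 1), ((0.2, 0.1), 1), ((0.1, 0.3), 1),
    ((1.0, 1.0), 0), ((1.2, 0.9), 0), ((0.9, 1.1), 0), ((1.1, 1.2), 0),
]
# Toy previous minority examples: one inside the current minority cluster,
# one inside the majority region, one in between.
prev_minority = [(0.1, 0.1), (1.0, 1.1), (0.6, 0.6)]

counts = [knn_minority_count(x, current_chunk, k=3) for x in prev_minority]
kept = select_previous_examples(prev_minority, current_chunk, k=3, n_keep=2)
```

Counting neighbors rather than comparing raw distances means a previous example is judged by the local class composition around it, so the score does not depend on which cluster center it happens to be measured against.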

knowledge of the class concept. However, maintaining a hypothesis or hypotheses only on the current training data chunk is more or less equivalent to discarding a significant part of previous knowledge, because previous data chunks can never be accessed again, either explicitly or implicitly, once they have been processed.

One solution to this issue is to maintain all hypotheses built on training data chunks over time and apply all of them to make predictions on the datasets under evaluation. As for how the hypotheses are combined, Gao et al. [31] employed a uniform voting mechanism, arguing that in practice the class concepts of the datasets under evaluation may not necessarily evolve consistently with the streaming training data chunks. Putting aside this debatable subject, most work still assumes that the class distribution of the datasets under evaluation remains tuned to the evolution of the training data chunks. Wang et al. [32] weighted hypotheses according to their classification accuracy on the current training data chunk. The weighted
