Thus

The interval includes zero. We are less than 95% certain that the two sets of measurements are different! And indeed, this is taken from a set of runs that were done incorrectly. After the program was modified, it was recompiled to a.out by mistake, so the two sets of measurements actually come from exactly the same binary!

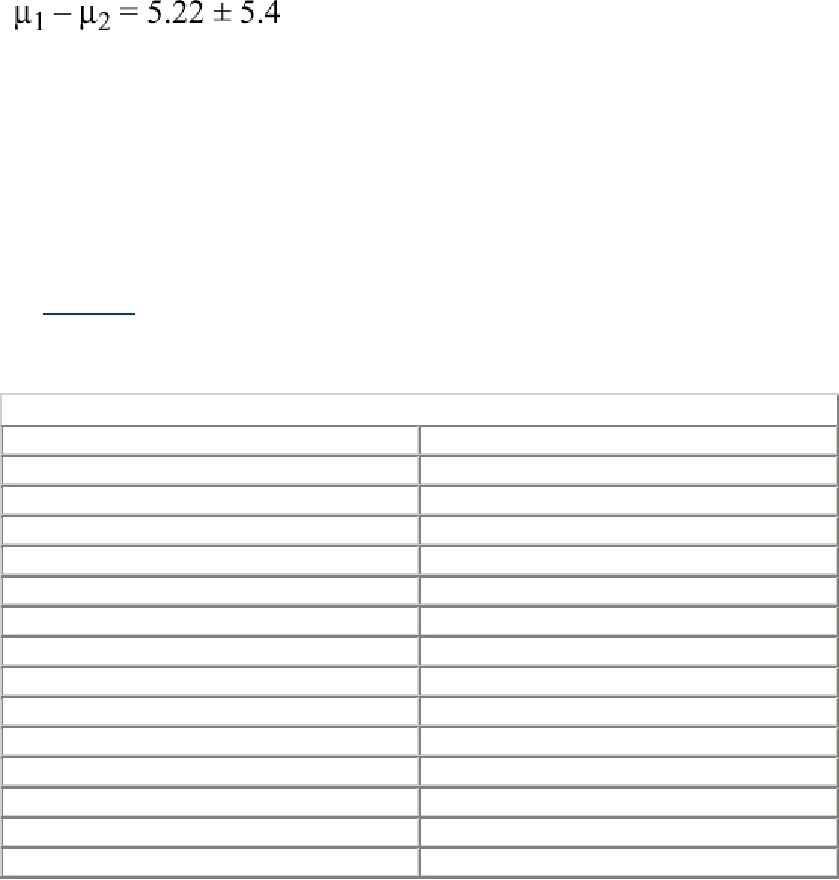

If you take only four measurements, the difference between the measured means must be greater than 1.96 × the standard error, or roughly twice the measured standard deviation. With ten trials (see Table 15-2), the 95% confidence level is reached once the difference is greater than roughly one standard deviation. When the numbers are reasonably close together, you can eyeball the mean and standard error fairly easily.

Table 15-2. Runtimes for Ten Trials

| Trial | Run 1 rate (N_PROD = 1, N_CONS = 4) | Run 2 rate (N_PROD = 1, N_CONS = 5) |
|---|---|---|
| 1 | 85.965975/s | 89.984372/s |
| 2 | 86.802915/s | 91.710778/s |
| 3 | 88.528658/s | 91.075302/s |
| 4 | 85.411582/s | 91.741185/s |
| 5 | 85.957945/s | 87.995095/s |
| 6 | 84.514983/s | 93.661803/s |
| 7 | 86.732842/s | 89.505427/s |
| 8 | 84.284994/s | 89.262953/s |
| 9 | 85.024726/s | 89.611914/s |
| 10 | 85.602694/s | 91.972079/s |
| Mean rate | 85.88/s | 90.65/s |
| Standard deviation | 1.25 | 1.67 |

The difference between the means is about 5 and the standard deviation is about 1.5. The difference, 5 ± 1.5, is much greater than zero, so we can conclude with confidence that run 2 is indeed superior to run 1. Doing this stuff well is not at all obvious, and doing it wrong is all too common. We're not expecting you to do this carefully on every test, but you do have to be aware of it.

General Performance Optimizations

By far the most important optimizations will not be specific to threaded programs, but rather the general optimizations you do for nonthreaded programs. We'll mention these optimizations but leave the specifics to you. First, you choose the best algorithm. Second, you select the correct compiler optimization. Third, you buy enough RAM to avoid excessive paging. Fourth, you minimize I/O. Fifth, you minimize cache misses. Sixth, you do any other loop optimizations that the compiler was unable to do. Finally, you can do the thread-specific optimizations.
