Although obviously true, this fact is of no interest to many programs. Most programs with which

we have worked (client/server, and I/O intensive) see other limitations long before they ever hit

this one. Even numerically intensive programs often come up against limited memory bandwidth

sooner than they hit Amdahl's limit. Very large numeric programs with little synchronization will

approach it. So don't hold Amdahl's law up as the expected goal; it might not be attainable.
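
To make the limit concrete, here is a minimal sketch of Amdahl's law in its usual form, speedup = 1 / ((1 - p) + p / n), where p is the fraction of run time that can be parallelized and n is the number of CPUs. The function name `amdahl_speedup` and the 95% figure are assumptions chosen for illustration; they are not measurements from any program discussed here.

```python
def amdahl_speedup(parallel_fraction: float, cpus: int) -> float:
    """Upper bound on speedup when only part of the run time parallelizes."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cpus < 1:
        raise ValueError("cpus must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part is never divided; only the parallel part shrinks with more CPUs.
    return 1.0 / (serial_fraction + parallel_fraction / cpus)


if __name__ == "__main__":
    # 0.95 is an illustrative (hypothetical) parallel fraction.
    for cpus in (1, 2, 4, 8, 16, 32, 64):
        print(f"{cpus:3d} CPUs: at most {amdahl_speedup(0.95, cpus):5.2f}x")
```

With a 95% parallel fraction the bound never reaches 20x however many CPUs are added, and that is the best case: the formula knows nothing about memory bandwidth, I/O, or lock contention, which is why real programs usually fall short of it.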

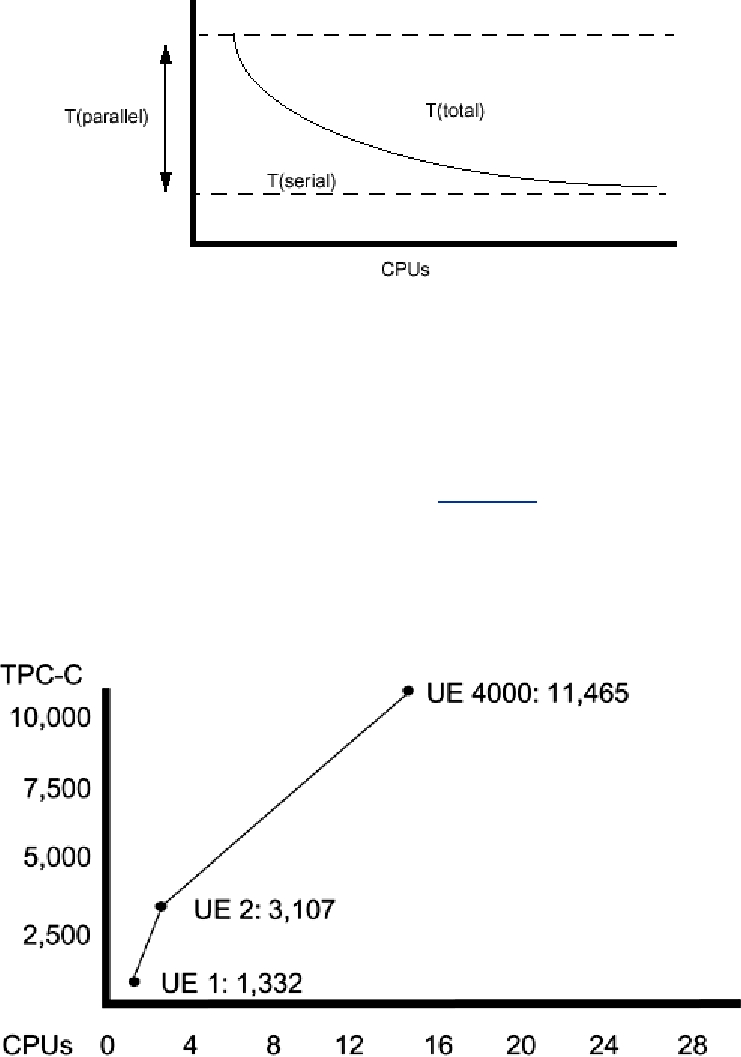

Client/server programs often show a lot of contention for shared data and make great demands

upon the I/O subsystem. Consider the TPC-C numbers in Figure 15-5. Irrespective of how

representative you think TPC-C is of actual database activity (there's lots of debate here), it is very

definitely a benchmark into whose optimization vendors put enormous effort. So it is notable that

on a benchmark as important as this, the limit of system size is down around 20 CPUs.

Figure 15-5. TPC-C Performance of a Sun UE6000

So what does this mean for you? That there are limitations. The primary limiting factor might be

synchronization overhead, main memory access, or the I/O subsystem. As you

design and write your system, you should analyze the nature of your program and put your

optimization efforts toward these limits. And you should be testing your programs along the way.

Performance Bottlenecks

Wherever your program spends its time, that's the bottleneck. We can expect that the bottleneck

for a typical program will vary from subsystem to subsystem quite often during the life of the
